This guide provides strategies for managing experiments in which users interact with your app across multiple sessions. Over the course of those sessions, a user might encounter different variations of an experiment, which can lead to an inconsistent user experience. By choosing the right randomization unit and using attribute targeting, you can enforce consistency where it makes sense for your user base.

The second half of this guide includes an example of an experiment you can build that uses these strategies. This example compares two variations of an e-commerce site. The experiment will compare the performance of a one-click checkout experience versus a two-click checkout experience, tracking time to check out.

When conducting an experiment on an app or website with both logged-out and logged-in states, you may want the end user to receive the same variation every time they log in. You can do this by setting an experiment’s randomization unit to user.

However, if you optimize for consistency across logins instead of continuity within a session, a user might see one variation while logged out and another immediately after they log in. To keep the experience stable for the length of a visit, use session as the randomization unit instead. The following sections compare the two approaches.

As an example, imagine Anna visits your website on her phone before she logs in. She sees variation A, the one-click checkout option. She adds some items to her cart, remembers to log in, continues to see variation A, adds a few more items, then gets interrupted. She decides to finish checking out later.

That evening, Anna visits your website from her laptop. She is not logged in and she is on a different device, so your app does not recognize her as the same person from earlier in the day. The experiment serves variation B, the two-click checkout option. When Anna logs in, if logged-in users always receive variation A, her variation flips from B to A in the middle of her session.

With user as the randomization unit, logged-in people see the same variation whenever they return to your app. We recommend user when you care most about consistency for an individual over time. Assignments are based on the user’s unique context key. The trade-off is that someone like Anna can still see two different versions of your app within one session.
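To see why a stable key gives a stable variation, here is a minimal sketch of deterministic assignment. It is illustrative only and is not LaunchDarkly's actual bucketing algorithm. The function name `assign_variation`, the experiment key `checkout-experience`, and the user key are all hypothetical.

```python
import hashlib


def assign_variation(experiment_key: str, unit_key: str, variations=("A", "B")) -> str:
    """Map a randomization-unit key to a variation, deterministically.

    Illustrative sketch only; real experimentation platforms use their own
    bucketing logic. The same (experiment_key, unit_key) pair always yields
    the same variation.
    """
    digest = hashlib.sha256(f"{experiment_key}:{unit_key}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % len(variations)
    return variations[bucket]


# With user as the randomization unit, the unit key is the user's context key,
# so every logged-in visit resolves to the same variation.
morning = assign_variation("checkout-experience", "user-anna")
evening = assign_variation("checkout-experience", "user-anna")
assert morning == evening
```

Before Anna logs in, the app has no user key for her, so the logged-out visit is bucketed under some other key. That is why her variation can change at login.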

Contrast this with Miguel’s experience. Miguel visits your website on his phone and does not log in. He sees variation A, the one-click checkout option. He adds some items to his cart, remembers to log in, continues to see variation A, adds a few more items, then gets interrupted. He decides to finish checking out later.

That evening, Miguel visits your website from his laptop. He is not logged in yet and he is on a different device, so the experiment does not recognize him as the same person from earlier in the day. The experiment serves variation B, the two-click checkout option. Miguel logs in and checks his cart. He keeps seeing variation B until the session ends.

That behavior is what you should expect with session as the randomization unit. Assignment depends on a session key, usually stored in a cookie that expires after about 7–14 days. If someone returns on the same device and browser before the cookie expires and has not cleared cookies, they usually see the same variation. However, different devices, different browsers, or incognito mode each get a new session key, so you cannot rely on the same variation across those contexts.
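The cookie behavior described above can be sketched as follows. This assumes your app manages its own session cookie. The helper `get_or_create_session_key`, the cookie's dictionary shape, and the 14-day lifetime are assumptions for illustration, not part of any SDK.

```python
import uuid
from datetime import datetime, timedelta, timezone
from typing import Optional

# Assumption: a 14-day cookie lifetime, the upper end of the range mentioned above.
SESSION_TTL = timedelta(days=14)


def get_or_create_session_key(cookie: Optional[dict], now: datetime) -> dict:
    """Reuse an unexpired session cookie, otherwise mint a new session key."""
    if cookie is not None and cookie["expires_at"] > now:
        # Same device and browser, cookie still valid: keep the key, and
        # therefore the variation, even if the person logs in mid-visit.
        return cookie
    # New device, new browser, incognito mode, or an expired or cleared cookie.
    return {"session_key": str(uuid.uuid4()), "expires_at": now + SESSION_TTL}


now = datetime.now(timezone.utc)
phone_cookie = get_or_create_session_key(None, now)
laptop_cookie = get_or_create_session_key(None, now)  # different device, no cookie yet
assert phone_cookie["session_key"] != laptop_cookie["session_key"]
```

Because logging in does not change the session key in this sketch, someone like Miguel keeps the variation he started the visit with.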

We recommend session when you want consistency within a single visit or interaction. The trade-off is that someone like Miguel might see different versions of your app on different logins or devices.

These approaches help keep the product experience coherent when people move between sessions, devices, and authentication states.

If the feature is available to both logged-out and logged-in users, consider scoping the experiment to logged-in users only and randomizing by user. Your experiment will include fewer people, but variation assignments will stay consistent.

People often use both a phone and a laptop to access the same website or app. After a device switch, LaunchDarkly usually cannot tell that two sessions belong to the same person. Randomizing by user while restricting the experiment audience to one device type, such as mobile, reduces how often someone hits two variations. Device-specific experiments can also be easier to interpret because behavior differs by platform. The example in this guide targets by device type.

Longer run times mean more opportunities for multiple session exposures. Shorter experiments shrink the window in which cross-session or cross-device inconsistency can occur.

This example builds a checkout experiment in an app and uses time to check out as the primary metric. The following sections cover the metric, flag variations, hypothesis, randomization unit, and sample size.

First, create the metric you need for the experiment. This example uses a custom numeric metric.

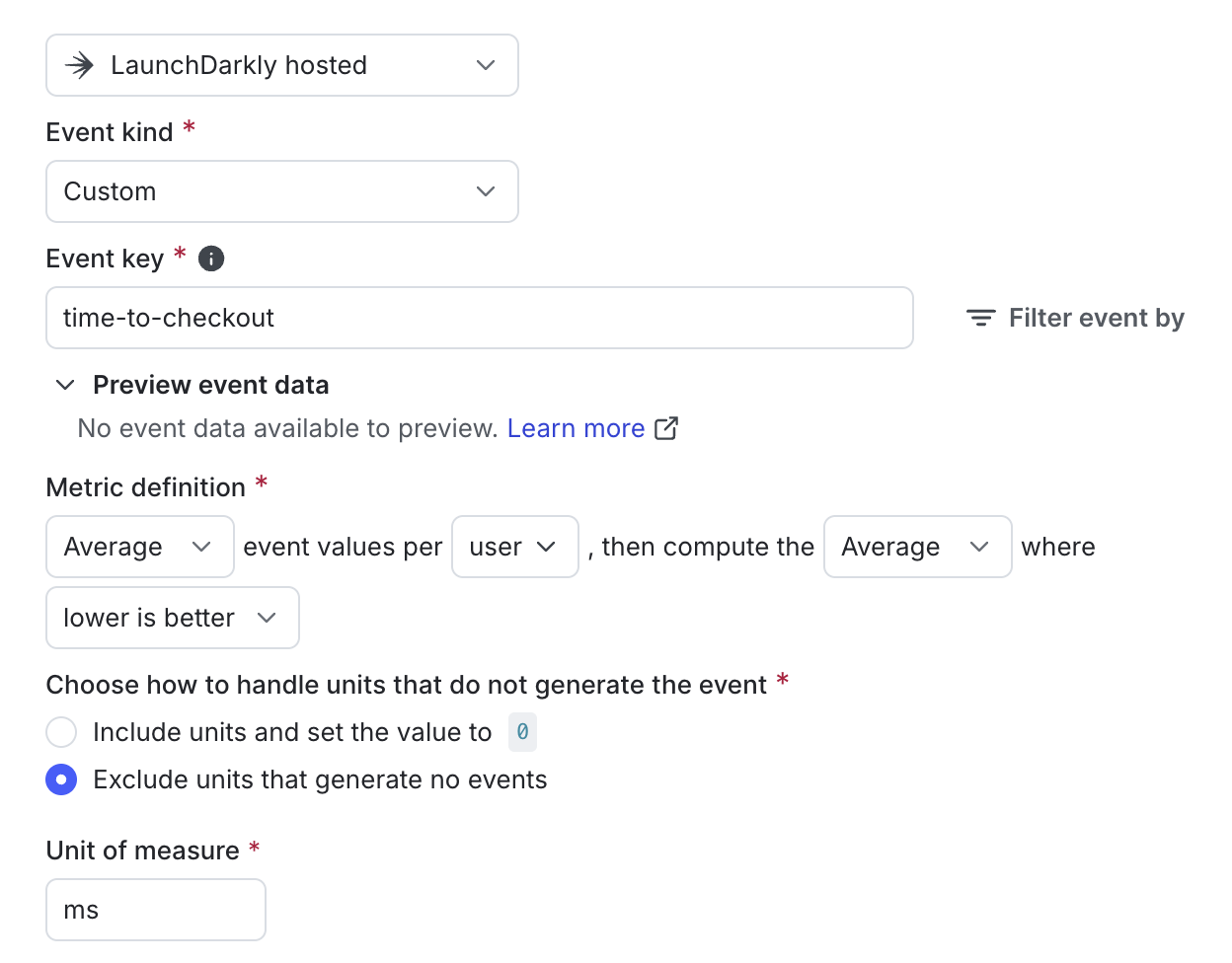

Use the following settings for the metric:

Average event values per User, then compute the Average of those values where lower is betterHere is an example of this metric:

When you create the metric, enter the appropriate event key from your codebase. In this example, the event key is time-to-checkout.
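To make the metric definition concrete, the following sketch computes "average event values per user, then the average of those values" over a small set of made-up events. It only illustrates the arithmetic and does not show how LaunchDarkly computes results internally. The function name and sample values are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def average_of_user_averages(events):
    """Average each user's event values, then average those per-user averages.

    `events` is an iterable of (user_key, value) pairs, for example seconds
    spent checking out.
    """
    per_user = defaultdict(list)
    for user_key, value in events:
        per_user[user_key].append(value)
    return mean(mean(values) for values in per_user.values())


# Hypothetical data: Anna checks out twice, Miguel once.
events = [("anna", 30.0), ("anna", 50.0), ("miguel", 20.0)]
result = average_of_user_averages(events)  # Anna averages 40.0, Miguel 20.0, so 30.0 overall
```

Averaging per user first keeps a person who checks out many times from dominating the result. Pooling all three events instead would give about 33.3.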

Sending custom events to LaunchDarkly requires a unique event key. You can set the event key to anything you want. Adding this event key to your codebase lets your SDK track actions customers take in your app as events. To learn more, read Sending custom events.
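As a rough sketch, recording the checkout time might look like the following. `RecordingClient` is a hypothetical stand-in used so the example runs on its own. In a real app you would pass your configured SDK client instead, and you should check its `track` signature in the SDK reference. The plain-dictionary context and the helper name `record_checkout_time` are also assumptions.

```python
class RecordingClient:
    """Hypothetical stand-in for an SDK client; it only records track calls."""

    def __init__(self):
        self.events = []

    def track(self, event_name, context, data=None, metric_value=None):
        self.events.append((event_name, context, metric_value))


def record_checkout_time(client, context, started_at: float, finished_at: float) -> float:
    """Send the elapsed checkout time, in seconds, as a numeric custom event."""
    elapsed_seconds = finished_at - started_at
    # The event key must match the one entered on the metric: time-to-checkout.
    client.track("time-to-checkout", context, metric_value=elapsed_seconds)
    return elapsed_seconds


client = RecordingClient()
context = {"kind": "user", "key": "user-anna"}  # assumed context shape
record_checkout_time(client, context, started_at=100.0, finished_at=142.5)
```

Send the event with the same context that evaluated the flag, so the measurement is attributed to the variation that person saw.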

LaunchDarkly also automatically generates a metric key when you create a metric. You only use the metric key to identify the metric in API calls. To learn more, read Creating and managing metrics.

Next, create a “Check-out experience” boolean flag with these variations:

Here is what your flag’s variations will look like:

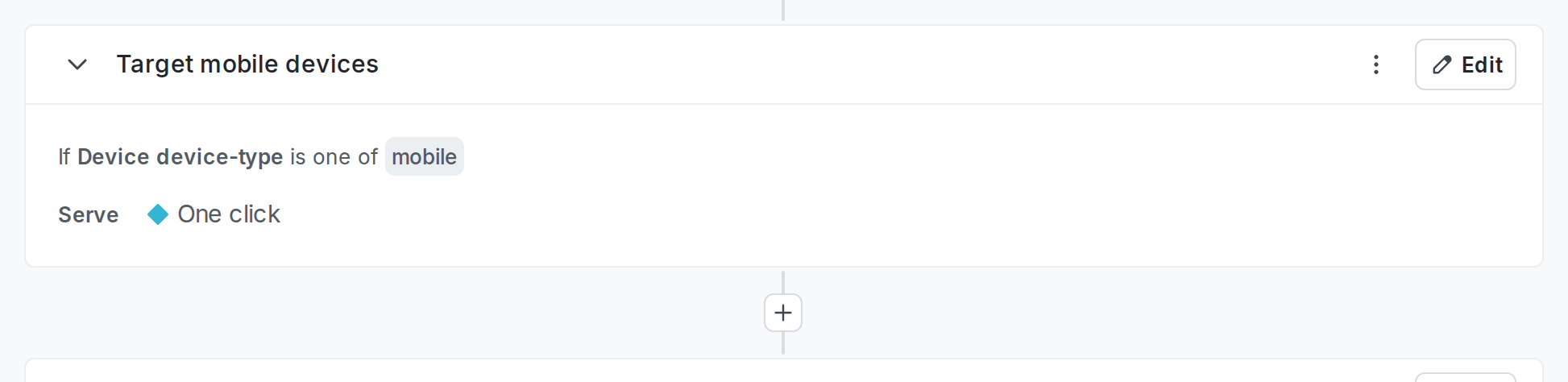

You could run the experiment on the flag’s default rule. In this case, however, it’s better to target only mobile devices to help with user experience consistency. To do this, add a flag rule that targets mobile devices. The exact rule will vary depending on what context attributes you send to LaunchDarkly, but the rule might look something like this:

To learn more, read Targeting rules.

To create the experiment, expand Iterate in the left sidebar, click Experiments, then click Create experiment.

Set up your experiment with the following parameters:

For detailed experiment creation instructions, read Creating experiments.

Now you can toggle on your “Check-out experience” flag and begin your experiment. As soon as users begin interacting with your flagged code, results begin flowing into the experiment’s Results tab. To learn more about reading experiment results, read Experiment results data.

For apps with logged-out and logged-in states, matching the same variation on every login is not always the right goal. Keeping one variation for the length of a session, including across the login moment, often feels smoother to users while still producing the experiment data you need to improve the product.

To get started building your own experiment, follow our Quickstart for Experimentation.

If you use an external warehouse such as BigQuery, Databricks, Redshift, or Snowflake, you can send your experiment data to it using a warehouse Data Export integration. By exporting your LaunchDarkly experiment data to the same warehouse as your other data, you can build custom reports in your warehouse to answer product behavior questions. To learn more, read Warehouse Data Export.

You can also run experiments using warehouse native metrics. To learn more, read Creating experiments using warehouse native metrics.