*This article was originally published in Software Business Growth.*

As a former teacher, I firmly believe we should always be learning. Every night at dinner we talk about what we learned that day. Learning something new can be intimidating and a huge time commitment, but it doesn't have to be. Running small experiments is an effective way to learn and try out new ideas.

The websites and apps we create and use are constantly changing, and chances are these changes weren't random. Many of them likely came about as the result of an experiment. Google has been known to test 41 different shades of blue for its links to identify the best-performing color (which may be a tad excessive).

Experiments lead to new knowledge, discoveries, and changes in behavior. We use experiments to gather information necessary to validate what we think or feel is the right thing to do with a feature or design element.

It's not a matter of either trusting our intuition or running endless experiments. A mixture of both is needed. We use our intuition to form a working hypothesis and to question the results if they don't make sense. Rather than relying on gut feeling alone, experiments use metrics and data to validate our beliefs.

Experiment with JavaScript

You have a site that requires users to fill out a form to sign up for a service. The number of people abandoning the form before submission is increasing. Worried by this trend, you hold a brainstorming session to determine how to increase the number of form submissions.

Changing the information requested on the form is not an option, so the team comes up with a potential solution that could be coded in JavaScript: a progress bar showing how much of the form remains.

This solution raises a number of other questions:

- How often should the progress bar be updated?

- Should the percentage complete be displayed with the progress bar?

- Is it better if the progress bar is a line or a circle?

- Where is the best place to display the message?

Instead of guessing, we can run short experiments to answer these questions. Humans are notoriously bad at guessing, and our cognitive biases can make us irrational. Experiments provide data that increases our confidence that changes to the form will result in more submissions.
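To make the idea concrete, here is a minimal sketch of how a progress bar like this might compute its value. The `percentComplete` helper and the shape of the `fields` array are assumptions made for illustration, not part of any particular form library.

```javascript
// Hypothetical helper: percentage of required fields that have a value.
// Assumed field shape: { name, required, value }.
function percentComplete(fields) {
  const required = fields.filter((field) => field.required);
  if (required.length === 0) return 100; // nothing left to fill in

  // Treat empty or whitespace-only values as "not filled in yet".
  const filled = required.filter(
    (field) => String(field.value ?? "").trim() !== ""
  );
  return Math.round((filled.length / required.length) * 100);
}

// Made-up example data: four required fields, two of them filled in.
const exampleFields = [
  { name: "email", required: true, value: "a@example.com" },
  { name: "name", required: true, value: "" },
  { name: "phone", required: false, value: "" },
  { name: "plan", required: true, value: "basic" },
  { name: "company", required: true, value: "  " },
];

console.log(percentComplete(exampleFields)); // 50
```

In a browser, the returned number could drive whichever visual the experiment is testing, whether that is a line, a circle, or a percentage label.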

How to run an experiment

The tl;dr on running an experiment:

- Form a hypothesis

- Determine the variations

- Segment your users

- Run the experiment

- Analyze the results

Form a hypothesis

A hypothesis starts with a question you want to answer. In this case, we want to know “What is the best look and feel for a progress bar to increase form completions?” This covers only some of the questions raised earlier. Other experiments will need to be run to determine the best place to display the message and the frequency of updates.

Once the question is established, the next step is to set well-defined goals. Goals should include concrete numbers. For example, “Form completions will increase by 10 percent” is better than “Form completions will increase” because you can measure whether it happened.

Determine the variations

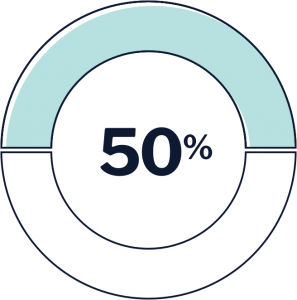

Variations are the different versions of a feature or element to test. You need a minimum of two groups: a control group and a variation, or two variations if this is a brand-new feature being tested. You're not limited to two groups; do what makes sense and is manageable. For this experiment, it makes sense to test four variations to answer our question.
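The article does not spell out which four variations to test. As one possible reading, the sketch below assumes they come from combining the two shapes (line or circle) with showing or hiding the percentage. The property names are hypothetical.

```javascript
// Assumed variations: two shapes x percentage label on/off.
// There is no separate control here because the progress bar is treated
// as a brand-new feature.
const shapes = ["line", "circle"];
const percentLabelOptions = [true, false];

const variations = shapes.flatMap((shape) =>
  percentLabelOptions.map((showPercent) => ({
    id: `${shape}-${showPercent ? "with" : "without"}-percent`,
    shape,
    showPercent,
  }))
);

console.log(variations.map((variation) => variation.id));
// [ 'line-with-percent', 'line-without-percent',
//   'circle-with-percent', 'circle-without-percent' ]
```

Writing the variations down as data keeps the rendering code in one place: it only needs to read `shape` and `showPercent` from whichever variation a user was given.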

Segment your users

Segmenting users is the process of dividing them into groups based on shared characteristics. Potential ways to segment users include:

- By location or geography

- First time vs. returning user

- Desktop vs. mobile

- Logged in vs. anonymous user

There are a number of commercial and open-source tools available to help you segment users and run experiments, including LaunchDarkly and Convert.

When segmenting users, you want to make sure the cohort sizes are balanced. Having one cohort much larger than another can skew the results. For this example, you can use a round-robin or random assignment.
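Both assignment strategies fit in a few lines. This is a minimal sketch rather than how any particular tool does it. The function names are made up for illustration, and the in-memory counter is an assumption that only holds inside a single process.

```javascript
// Round-robin: hand out variations in a fixed rotation, so cohort sizes
// never differ by more than one.
function createRoundRobinAssigner(variationIds) {
  let next = 0;
  return function assign() {
    const id = variationIds[next];
    next = (next + 1) % variationIds.length;
    return id;
  };
}

// Random: each visitor gets a uniformly random variation. Cohorts are
// balanced on average, not exactly. `random` is injectable for testing.
function assignRandomly(variationIds, random = Math.random) {
  return variationIds[Math.floor(random() * variationIds.length)];
}

// Assign ten visitors round-robin and count the cohort sizes.
const nextVariation = createRoundRobinAssigner(["A", "B", "C", "D"]);
const cohortSizes = {};
for (let visitor = 0; visitor < 10; visitor += 1) {
  const id = nextVariation();
  cohortSizes[id] = (cohortSizes[id] || 0) + 1;
}

console.log(cohortSizes); // { A: 3, B: 3, C: 2, D: 2 }
```

Round-robin needs a counter shared by every visitor, which usually means keeping it on the server. Random assignment needs no shared state, at the cost of cohorts that are only approximately equal. Either way, a returning user should probably keep the variation they saw the first time, so the assignment would need to be stored somewhere.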

Run the experiment

Now for the fun part: kicking off the experiment. We can run either an A/B/N test, where the experiment runs until one variation reaches statistical significance, or a multi-armed bandit test, where an algorithm shifts more traffic to better-performing variations until statistical significance is reached.
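To give a feel for how a bandit shifts traffic, here is a sketch of epsilon-greedy, one of the simplest bandit strategies. It is used here purely as an illustration and is not necessarily what any given testing tool implements. The `stats` shape, the function names, and the numbers are all assumptions.

```javascript
// Epsilon-greedy: with probability epsilon, explore a random variation;
// otherwise exploit the variation with the best completion rate so far.
// `random` is injectable so the behavior can be tested deterministically.
function chooseVariation(stats, epsilon = 0.1, random = Math.random) {
  const ids = Object.keys(stats);
  if (random() < epsilon) {
    return ids[Math.floor(random() * ids.length)];
  }
  // Variations nobody has seen yet are tried first.
  const rate = (id) =>
    stats[id].views === 0
      ? Infinity
      : stats[id].completions / stats[id].views;
  return ids.reduce((best, id) => (rate(id) > rate(best) ? id : best));
}

// Record what happened so later choices can take it into account.
function recordResult(stats, id, completed) {
  stats[id].views += 1;
  if (completed) stats[id].completions += 1;
}

// Made-up numbers: the circle variation is converting better so far.
const banditStats = {
  line: { views: 100, completions: 30 },
  circle: { views: 100, completions: 45 },
};

console.log(chooseVariation(banditStats, 0.1, () => 0.5)); // circle
```

With `epsilon` set to 0.1, roughly nine out of ten visitors see the current leader while the remainder keep testing the alternatives. A plain A/B/N test, by contrast, keeps the split fixed for the whole run.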

To learn more about these techniques, check out these articles:

- A/B Testing with the Multi-Armed Bandit Approach: What It Is and Why You Should Be Using It

- Beyond A/B Testing: Multi-Armed Bandit Experiments

- A/B Testing Mastery: From Beginner to Pro in a Blog Post

- Multivariate Testing - Best Practices & Tools for MVT (A/B/N) Tests

Analyze the results

Once the experiment completes, analyze the results to see whether any of the variations produced the desired increase in form submissions. If one did, roll that version out to all users.
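A rough version of that analysis can be done by hand. The sketch below compares one candidate against a baseline using the relative lift and a standard two-proportion z-score. The function names and all of the numbers are made up for illustration; a real analysis would use your own data and likely a dedicated tool.

```javascript
function completionRate({ views, completions }) {
  return views === 0 ? 0 : completions / views;
}

// Relative lift of the candidate over the baseline; 0.10 means +10 percent.
function relativeLift(baseline, candidate) {
  const base = completionRate(baseline);
  return (completionRate(candidate) - base) / base;
}

// Two-proportion z-score using the pooled standard error.
function zScore(baseline, candidate) {
  const pooled =
    (baseline.completions + candidate.completions) /
    (baseline.views + candidate.views);
  const standardError = Math.sqrt(
    pooled * (1 - pooled) * (1 / baseline.views + 1 / candidate.views)
  );
  return (completionRate(candidate) - completionRate(baseline)) / standardError;
}

// Made-up results: 20 percent vs. 24 percent completion.
const baselineResult = { views: 1000, completions: 200 };
const candidateResult = { views: 1000, completions: 240 };

const lift = relativeLift(baselineResult, candidateResult);
const z = zScore(baselineResult, candidateResult);

console.log((lift * 100).toFixed(1)); // "20.0", above the 10 percent goal
console.log(z.toFixed(2)); // "2.16"
```

An absolute z-score above about 1.96 corresponds to significance at the 95 percent level for a two-sided test, so in this made-up case the candidate meets the goal. When several variations are compared at once, the chance of a false positive goes up, which is one reason to lean on an experimentation tool for the statistics.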

It doesn't take long to run an experiment to determine which variation of a JavaScript progress bar to deploy, with the added benefit of basing the decision on data and not a gut feeling.

Here are some other JavaScript-related hypotheses you can answer with experiments:

- What is the optimal setTimeout value to display an alert after a period of inactivity?

- Which date picker works best on a mobile device?

- How often should the activity feed be updated?

If you're like me and enjoy learning, you can incorporate learning into the JavaScript you are writing through experimentation.
