Getting started with Amazon Bedrock and AI Configs

Overview

This guide shows how to connect an Amazon Bedrock-powered application to LaunchDarkly AI Configs. Amazon Bedrock provides a single API for multiple foundation models with enterprise-grade AWS integration. By the end, you will be able to manage your model configuration and prompts outside of your application code, and track metrics automatically.

AI Configs support two modes:

- Completion mode returns messages and roles (system, user, assistant). Use it for chat-style interactions and message-oriented workflows. Completion mode supports online evaluations with judges attached in the LaunchDarkly UI.

- Agent mode returns a single instructions string. Use it when your runtime or framework expects a goal/instructions input for a structured workflow. Agent mode changes the configuration shape from messages to instructions. Your application maps these instructions into your provider or framework’s native input.

Both modes support tool calling. This guide walks through completion mode as the main path, with an optional agent config section. To learn more about when to use each mode, read When to use prompt-based vs agent mode.

This guide provides examples in both Python and Node.js (TypeScript).

New to AI Configs?

If you’re a new user of AI Configs, start with the Quickstart and return to this guide when you are ready for a more detailed example.

To learn more about AI Configs-specific SDKs, read AI SDKs. For Python-specific details, read the Python AI SDK reference.

Prerequisites

To complete this guide, you need the following:

- A LaunchDarkly account with an SDK key for your environment and a member role that allows AI Config actions. To learn more about LaunchDarkly roles, read Roles.

- An AWS account with Amazon Bedrock access enabled and model access granted for the models you want to use. To learn more, read Model access in the AWS documentation.

- AWS credentials allowed to call the Amazon Bedrock Converse API, which requires the bedrock:InvokeModel IAM permission.

- A development environment:

- Python: Python 3.10 or higher

- Node.js: Node.js 20 or higher

- Familiarity with LaunchDarkly contexts. To learn more, read Contexts and segments.

Concepts

Before you begin, review these key concepts.

AI Configs

An AI Config is a LaunchDarkly resource that controls how your application uses large language models. Each AI Config contains one or more variations. Each variation specifies:

- A model configuration, including the model name and parameters

- Messages that define the prompt

You can update these settings in LaunchDarkly at any time without changing your application code.

Contexts

A context represents the end user interacting with your application. LaunchDarkly uses context attributes to:

- Determine which variation to serve based on targeting rules

- Populate {{ ldctx.* }} placeholders in your prompts with context attribute values

Other placeholders, such as {{ topic }}, are populated from the variables argument you pass at runtime.
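The following sketch illustrates how the two placeholder sources combine in a Mustache-style {{ }} template. It is an illustration under assumptions, not the AI SDK's implementation: render_prompt is a hypothetical helper, context attributes are assumed to be available as a plain dictionary, and unresolved placeholders render as empty text.

```python
import re
from typing import Any, Mapping

# Matches {{ name }} or {{ dotted.path }}, tolerating whitespace inside the braces.
_PLACEHOLDER = re.compile(r"\{\{\s*([\w.]+)\s*\}\}")


def render_prompt(template: str, variables: Mapping[str, Any],
                  context_attrs: Mapping[str, Any]) -> str:
    """Fill {{ ldctx.* }} from context attributes and other names from variables.

    Hypothetical helper for illustration only; the AI SDK renders templates itself.
    """
    scope = dict(variables)
    scope["ldctx"] = dict(context_attrs)

    def lookup(match: re.Match) -> str:
        value: Any = scope
        # Walk dotted paths such as ldctx.name one key at a time.
        for part in match.group(1).split("."):
            if not isinstance(value, Mapping) or part not in value:
                return ""  # unresolved placeholders render as empty text in this sketch
            value = value[part]
        return str(value)

    return _PLACEHOLDER.sub(lookup, template)


print(render_prompt(
    "Hi {{ ldctx.name }}. Answer questions about {{ topic }}.",
    variables={"topic": "billing"},
    context_attrs={"key": "user-123", "name": "Sandy"},
))
# Hi Sandy. Answer questions about billing.
```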

The tracker

When you retrieve an AI Config, the SDK returns a config object with a tracker property. The tracker records metrics from your Bedrock calls, including:

- Generation count

- Input and output tokens

- Latency

- Success and error rates

These metrics appear on the AI Insights dashboard in LaunchDarkly.

Step 1: Install the SDK

Install the LaunchDarkly AI SDK and the AWS Bedrock SDK in your application. AI Configs are supported by LaunchDarkly server-side SDKs only. The Node.js examples in this guide use the server-side Node.js AI SDK.

Here is how to install the required packages:

Create a .env file in your project root to store your credentials:

Add .env to your .gitignore to keep credentials out of version control.
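As a minimal sketch, assuming the .env file has already been loaded into the process environment (for example with python-dotenv's load_dotenv()), the helper below reads the values your code needs and fails fast if one is missing. LAUNCHDARKLY_SDK_KEY, AWS_REGION, Settings, and load_settings are hypothetical names. AWS access keys are not read here because the AWS SDK resolves them through its own credential chain.

```python
import os
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class Settings:
    launchdarkly_sdk_key: str
    aws_region: str


def load_settings(env: Mapping[str, str] = os.environ) -> Settings:
    """Read required settings from the environment, raising if any are missing."""
    # Hypothetical variable names chosen for this sketch.
    required = ("LAUNCHDARKLY_SDK_KEY", "AWS_REGION")
    missing = [name for name in required if not env.get(name)]
    if missing:
        raise RuntimeError(f"Missing environment variables: {', '.join(missing)}")
    return Settings(
        launchdarkly_sdk_key=env["LAUNCHDARKLY_SDK_KEY"],
        aws_region=env["AWS_REGION"],
    )
```

Failing at startup with a named variable is easier to debug than an authentication error on the first LaunchDarkly or Bedrock call.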

Step 2: Initialize the clients

Initialize both the LaunchDarkly client and the Amazon Bedrock runtime client. Store your credentials in environment variables.

Here is the initialization code:

Step 3: Create an AI Config in LaunchDarkly

Create an AI Config in the LaunchDarkly UI to store your Bedrock model settings and prompts.

Using the MCP server or agent skills

If you have the LaunchDarkly MCP server or agent skills configured, prompt your coding assistant to create the AI Config for you. For example:

“Create a completion mode AI Config called ‘Bedrock assistant’ with a ‘Claude Sonnet 4’ variation using the us.anthropic.claude-sonnet-4-20250514-v1:0 Bedrock model, temperature 0.7, max_tokens 1024, and the system message: ‘You are a helpful assistant. Answer questions about {{topic}}.’ Enable targeting.”

To create the AI Config:

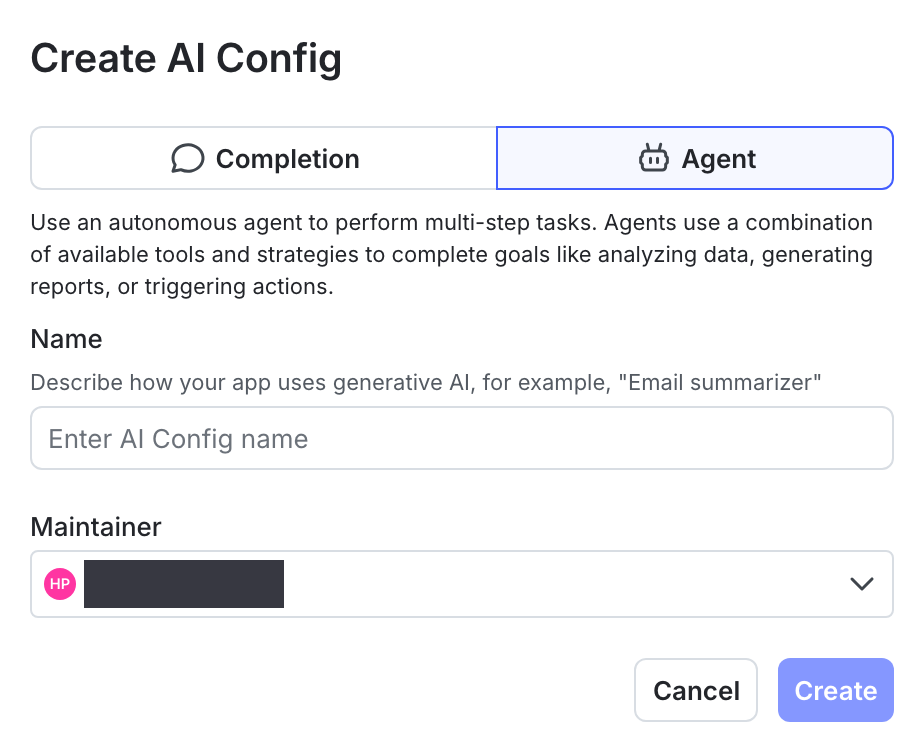

- In the left navigation, click Create and select AI Config.

- In the Create AI Config dialog, select Completion.

- Enter a name, such as “Bedrock assistant”.

- Click Create.

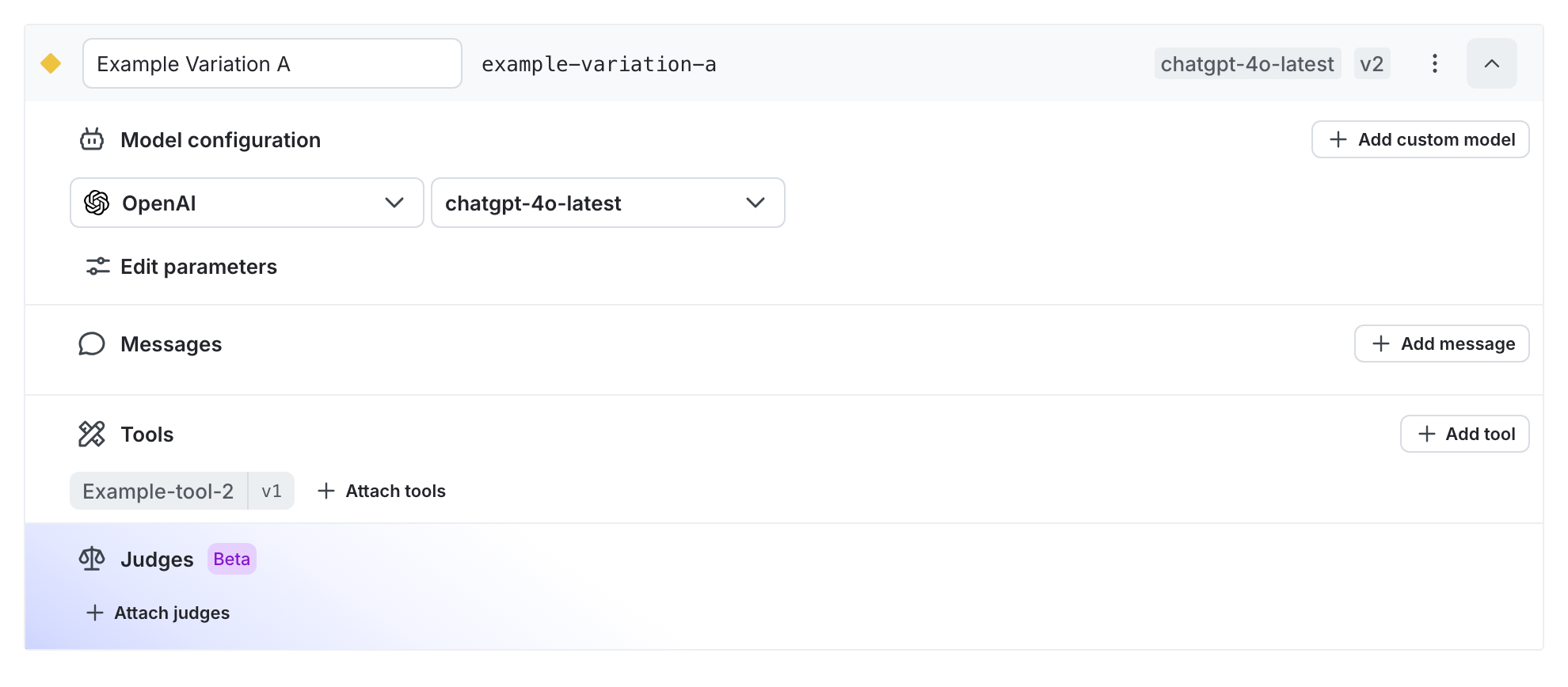

To create a variation:

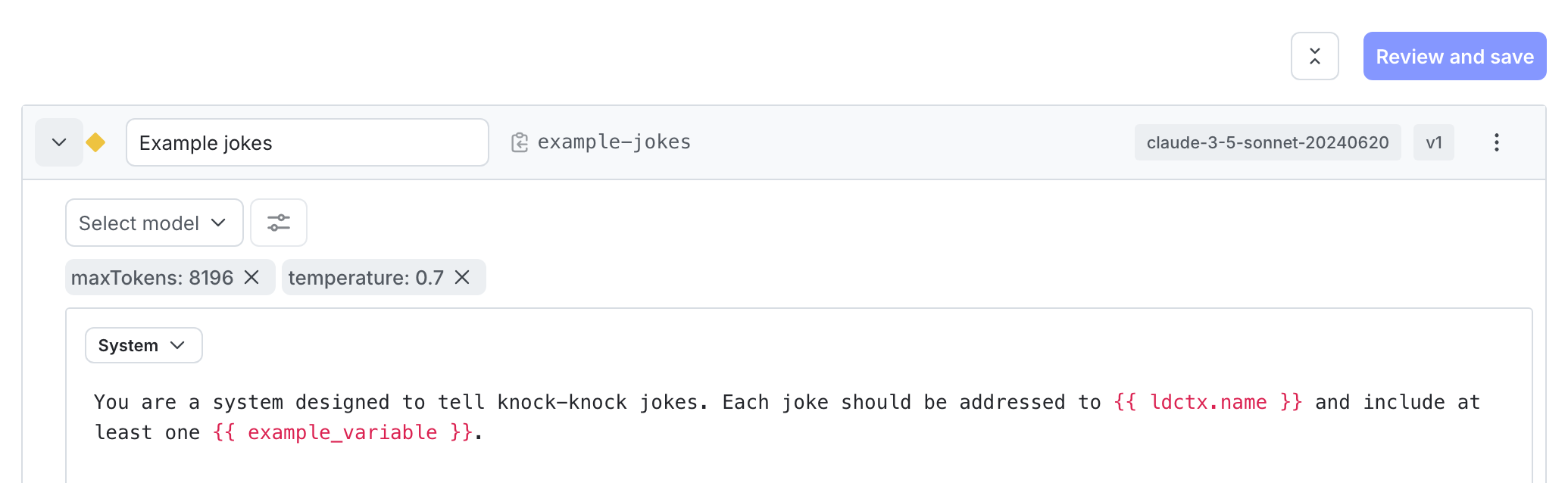

- On the Variations tab, replace “Untitled variation” with a name, such as “Claude Sonnet 4”.

- Click Select a model and choose an Amazon Bedrock model such as us.anthropic.claude-sonnet-4-20250514-v1:0.

- Click Parameters and set temperature to 0.7 and max_tokens to 1024.

- Add a system message to define your assistant’s behavior, such as “You are a helpful assistant. Answer questions about {{topic}}.”

- Click Review and save.

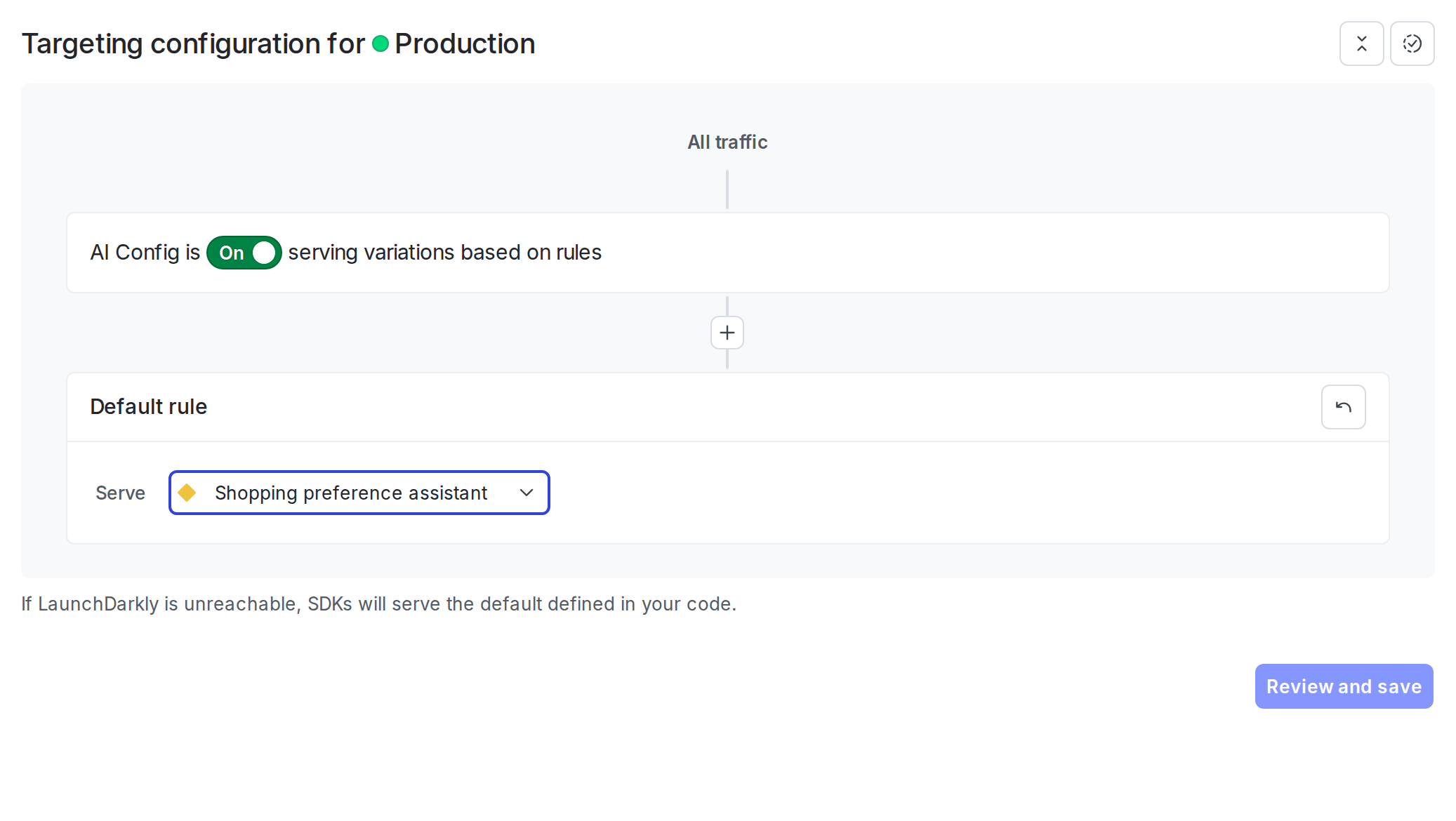

To enable targeting:

- Select the Targeting tab.

- In the “Default rule” section, click Edit.

- Set the default rule to serve your variation.

- Click Review and save.

Step 4: Get the AI Config in your application

Retrieve the AI Config from LaunchDarkly by calling the SDK's completion config method (completion_config() in Python). Pass a context that represents the current user. You can also pass an optional fallback configuration that the SDK uses when LaunchDarkly is unreachable.

Here is how to get the AI Config:

Check the enabled property on the returned config and handle the disabled case in your application, for example by returning a default response instead of calling Bedrock.
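One way to handle the disabled case is to guard every model call behind the enabled check. In this sketch, answer and DISABLED_REPLY are hypothetical names, config stands for the object the SDK returned (only its enabled attribute is read), and generate is your own Bedrock call. A config without an enabled attribute is treated as disabled, which errs on the side of not calling the model.

```python
from types import SimpleNamespace
from typing import Any, Callable

# Hypothetical message shown when the AI Config is disabled for this context.
DISABLED_REPLY = "The assistant is unavailable right now. Please try again later."


def answer(config: Any, generate: Callable[[Any], str]) -> str:
    """Call the model only when the AI Config is enabled."""
    # Treat a missing `enabled` attribute as disabled rather than calling the model.
    if not getattr(config, "enabled", False):
        return DISABLED_REPLY
    return generate(config)


# Usage with stand-in config objects:
print(answer(SimpleNamespace(enabled=False), lambda cfg: "model reply"))  # the disabled reply
print(answer(SimpleNamespace(enabled=True), lambda cfg: "model reply"))   # model reply
```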

Best practices

For production use:

- Retrieve the AI Config each time you generate content so LaunchDarkly can evaluate the latest targeting rules and prompt changes.

- Provide a fallback configuration when possible so your application can fail gracefully if LaunchDarkly is unavailable.

- Avoid sending personally identifiable information in contexts unless you have a specific need and an approved handling pattern. To learn more, read Privacy in AI Configs.

Step 5: Call Bedrock and track metrics

The Amazon Bedrock Converse API uses a different request shape from the message list an AI Config returns. System messages go in a top-level system list, user and assistant messages go in the messages array with nested content blocks, and model parameters go in inferenceConfig. The tracker’s track_bedrock_converse_metrics helper records latency, success, errors, and token usage directly from the Converse response.
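A minimal sketch of the message mapping, assuming each AI Config message has already been turned into a plain dict with role and content keys. to_converse_format is a hypothetical helper; roles other than system, user, and assistant raise an error rather than being silently dropped.

```python
from typing import Any, Dict, Iterable, List, Mapping, Tuple


def to_converse_format(
    messages: Iterable[Mapping[str, str]],
) -> Tuple[List[Dict[str, str]], List[Dict[str, Any]]]:
    """Split role/content messages into Converse's `system` and `messages` arguments."""
    system: List[Dict[str, str]] = []
    converse_messages: List[Dict[str, Any]] = []
    for message in messages:
        role, text = message["role"], message["content"]
        if role == "system":
            # System prompts become top-level {"text": ...} blocks.
            system.append({"text": text})
        elif role in ("user", "assistant"):
            # Conversation turns carry a list of content blocks.
            converse_messages.append({"role": role, "content": [{"text": text}]})
        else:
            raise ValueError(f"Unsupported role for Converse: {role!r}")
    return system, converse_messages
```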

Define a helper that maps AI Config parameters to Bedrock’s inferenceConfig shape. Bedrock’s Converse API accepts maxTokens, temperature, topP, and stopSequences in inferenceConfig. Rename LaunchDarkly’s max_tokens key to maxTokens; temperature already matches, and any other parameter passes through only if its name matches one of the accepted keys.
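A sketch of that helper follows. to_inference_config is a hypothetical name, and the top_p and stop_sequences renames are assumptions about how you might spell those parameters in LaunchDarkly; only the max_tokens rename comes from this guide. Keys that inferenceConfig does not accept are dropped.

```python
from typing import Any, Dict, Mapping

# Keys Bedrock's Converse inferenceConfig accepts.
INFERENCE_KEYS = {"maxTokens", "temperature", "topP", "stopSequences"}

# LaunchDarkly parameter names to rename. max_tokens comes from this guide;
# top_p and stop_sequences are assumed snake_case spellings.
RENAMES = {"max_tokens": "maxTokens", "top_p": "topP", "stop_sequences": "stopSequences"}


def to_inference_config(parameters: Mapping[str, Any]) -> Dict[str, Any]:
    """Rename and filter AI Config model parameters into Converse's inferenceConfig."""
    inference_config: Dict[str, Any] = {}
    for key, value in parameters.items():
        bedrock_key = RENAMES.get(key, key)
        if bedrock_key in INFERENCE_KEYS:
            inference_config[bedrock_key] = value
    return inference_config


print(to_inference_config({"temperature": 0.7, "max_tokens": 1024, "custom": True}))
# {'temperature': 0.7, 'maxTokens': 1024}
```

If you need model-specific parameters that inferenceConfig does not cover, Converse also accepts an additionalModelRequestFields argument; routing extra keys there is left out of this sketch.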

Then call Bedrock with the config and pass the response to the tracker:
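A minimal sketch of the call, assuming you have already produced system, messages, and inferenceConfig values in the Converse shape. bedrock stands for a boto3 bedrock-runtime client, tracker for the config's tracker, and converse_with_tracking is a hypothetical helper. Calling track_error() when the request raises before any response exists is an assumption about the tracker's interface.

```python
from typing import Any, Dict, List


def converse_with_tracking(
    bedrock: Any,
    tracker: Any,
    model_id: str,
    system: List[Dict[str, str]],
    messages: List[Dict[str, Any]],
    inference_config: Dict[str, Any],
) -> str:
    """Call Bedrock Converse, pass the response to the tracker, and return the reply text."""
    request: Dict[str, Any] = {"modelId": model_id, "messages": messages}
    # Only send optional sections that have content.
    if system:
        request["system"] = system
    if inference_config:
        request["inferenceConfig"] = inference_config
    try:
        response = bedrock.converse(**request)
    except Exception:
        # Assumed tracker method: record a failed generation when no response exists.
        tracker.track_error()
        raise
    # Records latency, success or error, and token usage from the Converse response.
    tracker.track_bedrock_converse_metrics(response)
    # The assistant reply is a list of content blocks; join the text ones.
    content = response["output"]["message"]["content"]
    return "".join(block.get("text", "") for block in content)
```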

Step 6 (optional): Use agent mode with tool calling

Agent-mode AI Configs return a single instructions string instead of a message list, and they let you attach reusable tools from the LaunchDarkly tools library. With Bedrock’s Converse API, the instructions map to a system content block and tools pass through on the toolConfig parameter.
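As a sketch of that mapping, the hypothetical build_agent_request helper below assembles keyword arguments for bedrock.converse(). It assumes the tools have already been converted to Converse toolSpec entries and that the conversation starts with a single user turn.

```python
from typing import Any, Dict, List, Optional


def build_agent_request(
    model_id: str,
    instructions: str,
    user_text: str,
    tool_specs: List[Dict[str, Any]],
    inference_config: Optional[Dict[str, Any]] = None,
) -> Dict[str, Any]:
    """Map an agent-mode config onto keyword arguments for bedrock.converse()."""
    request: Dict[str, Any] = {
        "modelId": model_id,
        # The agent's instructions string becomes one system content block.
        "system": [{"text": instructions}],
        "messages": [{"role": "user", "content": [{"text": user_text}]}],
    }
    if inference_config:
        request["inferenceConfig"] = inference_config
    if tool_specs:
        # Omit toolConfig entirely when no tools are attached.
        request["toolConfig"] = {"tools": tool_specs}
    return request
```

You would then call bedrock.converse(**build_agent_request(...)) and handle any tool requests in the response.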

Create the tool in the tools library

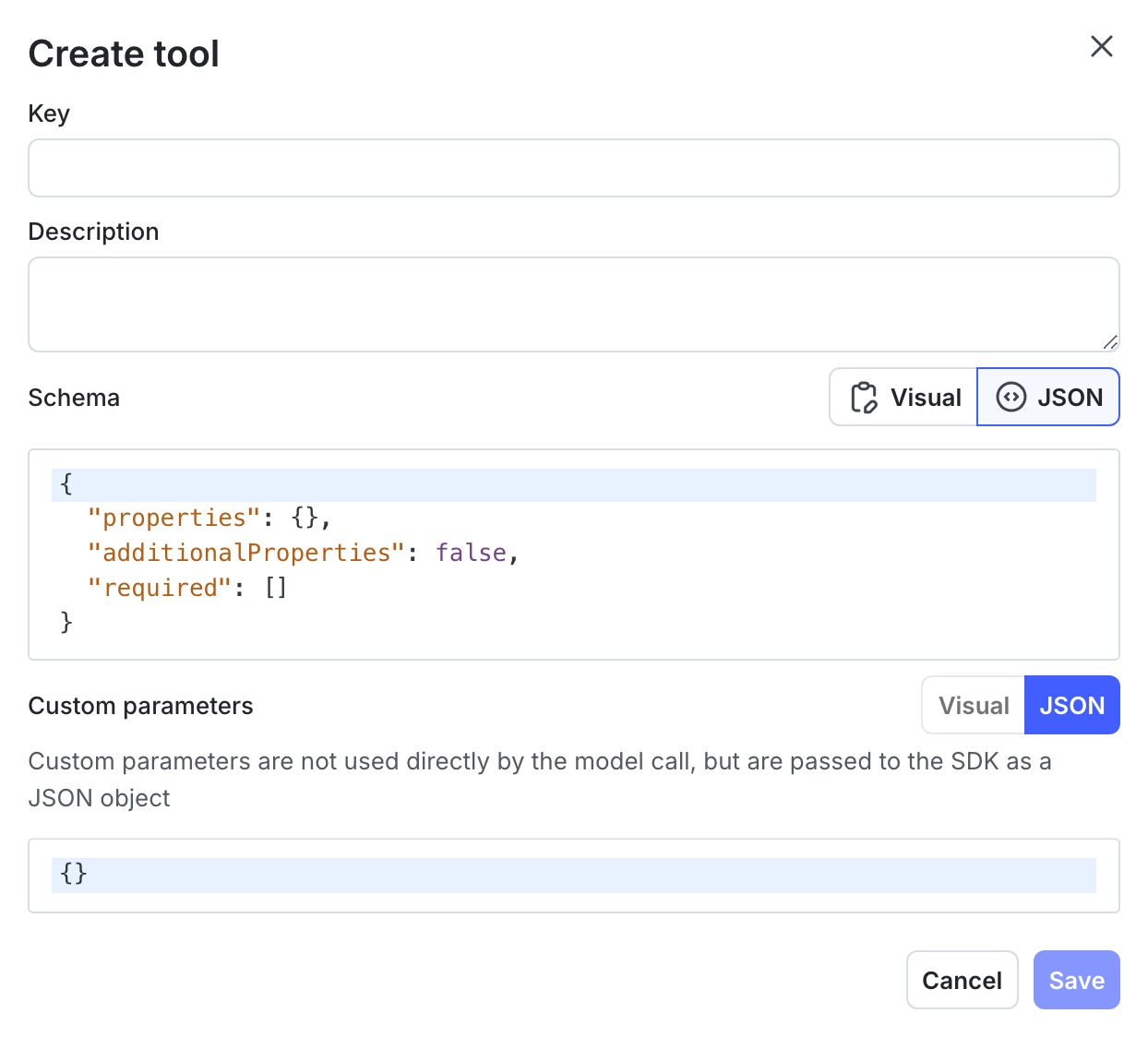

First, define the tool in LaunchDarkly so the AI Config variation can reference it:

- In the left navigation, click Library, then select the Tools tab.

- Click Add tool.

- Enter get_order_status as the Key.

- Enter “Look up the status of a customer order by order ID” as the Description.

- Define the tool’s input schema in the JSON editor.

- Click Save.
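The schema for this tool is not shown above. A plausible input schema, assuming the tool takes a single required order ID string, is sketched below as a Python dict; printing it produces JSON you could paste into the editor. The order_id property is a hypothetical argument name.

```python
import json

# Hypothetical JSON Schema for get_order_status: one required string argument.
GET_ORDER_STATUS_SCHEMA = {
    "type": "object",
    "properties": {
        "order_id": {
            "type": "string",
            "description": "The customer's order ID",
        },
    },
    "required": ["order_id"],
}

# Print JSON suitable for pasting into the tool's JSON editor.
print(json.dumps(GET_ORDER_STATUS_SCHEMA, indent=2))
```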

Create the agent AI Config

- Click Create and select AI Config.

- Select Agent mode.

- Enter a name for the agent AI Config.

- Click Create.

- On the Variations tab, name the variation (for example, “Claude Sonnet 4 agent”).

- Click Select a model and choose an Amazon Bedrock model such as us.anthropic.claude-sonnet-4-20250514-v1:0.

- Click Parameters and set max_tokens to 1024.

- Add the agent instructions.

- Click + Attach tools and select get_order_status.

- Click Review and save.

- On the Targeting tab, set the default rule to serve your variation and save.

To learn more about managing tools, read Tools in AI Configs.

Retrieve the agent config and run the tool loop

Use agent_config() instead of completion_config(). The SDK returns attached tools under parameters.tools in OpenAI’s type=function shape, so convert them to Bedrock Converse’s {toolSpec: {name, description, inputSchema: {json}}} shape and pass them on the toolConfig parameter. Tool handler functions stay in your application code: LaunchDarkly stores the schema, your application owns the behavior.
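A minimal sketch of the conversion and the tool loop follows, assuming the attached tools are available as a list of dicts. to_bedrock_tool_specs and run_tool_loop are hypothetical helpers, and accepting a flat tool layout alongside the nested function layout is a defensive assumption. The loop follows the Converse tool-use flow: when stopReason is tool_use, the assistant message contains toolUse blocks, and your application replies with a user message of toolResult blocks that echo each toolUseId.

```python
from typing import Any, Callable, Dict, List, Mapping, Optional

# A tool handler takes the model's tool input and returns a JSON-serializable dict.
ToolHandler = Callable[[Dict[str, Any]], Dict[str, Any]]


def to_bedrock_tool_specs(ld_tools: List[Mapping[str, Any]]) -> List[Dict[str, Any]]:
    """Convert OpenAI-style type=function tools into Converse toolSpec entries."""
    specs: List[Dict[str, Any]] = []
    for tool in ld_tools:
        # Nested {"type": "function", "function": {...}} layout, or a flat one.
        fn = tool.get("function", tool)
        spec: Dict[str, Any] = {
            "name": fn["name"],
            "inputSchema": {"json": fn.get("parameters") or {"type": "object", "properties": {}}},
        }
        if fn.get("description"):
            spec["description"] = fn["description"]
        specs.append({"toolSpec": spec})
    return specs


def run_tool_loop(
    bedrock: Any,
    request: Dict[str, Any],
    handlers: Mapping[str, ToolHandler],
    tracker: Optional[Any] = None,
    max_turns: int = 5,
) -> str:
    """Call Converse until the model stops requesting tools; return the final text."""
    messages = list(request["messages"])  # copy so the caller's request is untouched
    for _ in range(max_turns):
        response = bedrock.converse(**{**request, "messages": messages})
        if tracker is not None:
            tracker.track_bedrock_converse_metrics(response)
        assistant_message = response["output"]["message"]
        messages.append(assistant_message)
        if response.get("stopReason") != "tool_use":
            return "".join(b.get("text", "") for b in assistant_message["content"])
        # Answer every toolUse block with a matching toolResult block.
        results: List[Dict[str, Any]] = []
        for block in assistant_message["content"]:
            if "toolUse" not in block:
                continue
            use = block["toolUse"]
            handler = handlers.get(use["name"])
            if handler is None:
                result = {"toolUseId": use["toolUseId"], "status": "error",
                          "content": [{"text": f"Unknown tool: {use['name']}"}]}
            else:
                result = {"toolUseId": use["toolUseId"],
                          "content": [{"json": handler(use["input"])}]}
            results.append({"toolResult": result})
        messages.append({"role": "user", "content": results})
    raise RuntimeError(f"Model still requesting tools after {max_turns} turns")
```

max_turns guards against a model that keeps requesting tools. Passing the tracker records every Converse call in the loop, which may count each turn separately in your metrics.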

In completion mode, you can attach judges to variations in the LaunchDarkly UI for automatic evaluation. In agent mode, invoke judges programmatically through the AI SDK.

To learn more, read When to use prompt-based vs agent mode and Agents in AI Configs.

Step 7: Monitor your AI Config

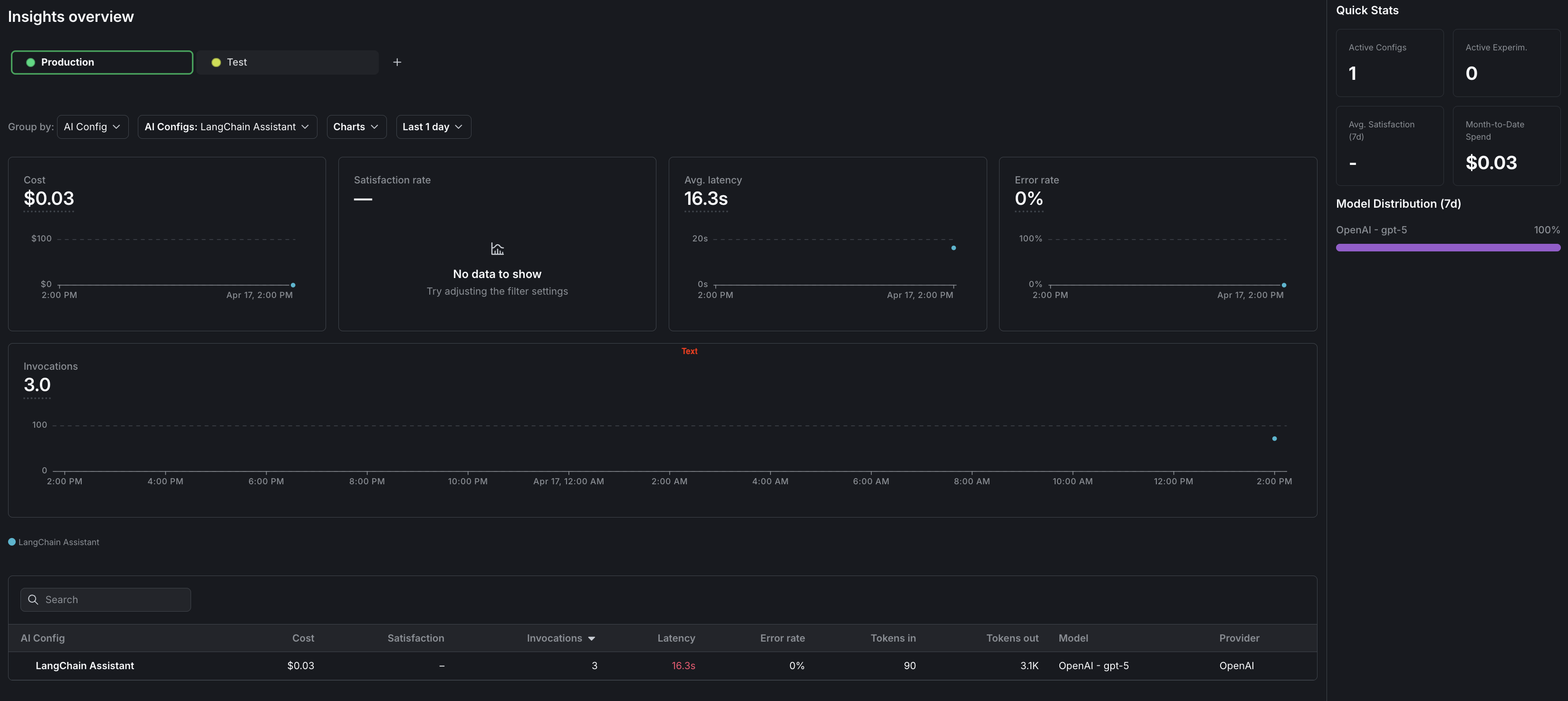

Use the LaunchDarkly UI to monitor how your applications are performing across all AI Configs and for individual configs.

To view aggregated metrics across all your AI Configs, navigate to Insights in the left navigation under the AI section. The Insights overview page displays cost, latency, error rate, invocation counts, and model distribution across your organization. To learn more, read AI Insights.

To view metrics for a specific AI Config:

- Navigate to your AI Config.

- Select the Monitoring tab.

The dashboard displays the following metrics:

- Generation count: The total number of AI generation calls tracked for this config.

- Input and output tokens: Token consumption broken down by prompt tokens sent and completion tokens received.

- Latency: The time taken for each generation call, shown as percentiles (p50, p95).

- Success and error rates: The proportion of successful versus failed generation calls.

Metrics update approximately every minute. Use these metrics to compare variations and optimize your prompts. To learn more, read Monitor AI Configs.

Observability

The AI SDKs emit OpenTelemetry-compatible spans for each generation call. You can forward these spans to your existing observability stack for deeper analysis. To learn more, read Observability and LLM observability.

Step 8: Close the client

Close the LaunchDarkly client when your application shuts down to flush pending events.

Here is how to close the client:

For short-lived applications such as scripts, explicitly flush events before closing.

Here is how to flush events:
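For scripts, a small context manager is one way to make sure events are flushed and the client is closed even when an exception interrupts the run. launchdarkly_session is a hypothetical helper; it relies only on the client's flush() and close() methods.

```python
from contextlib import contextmanager
from typing import Any, Iterator


@contextmanager
def launchdarkly_session(client: Any) -> Iterator[Any]:
    """Yield the client, then flush pending events and close it, even on errors."""
    try:
        yield client
    finally:
        client.flush()  # send any queued analytics and AI metric events
        client.close()  # release threads and network connections


# Usage with the Python server-side SDK:
# with launchdarkly_session(ldclient.get()) as ld_client:
#     ...  # retrieve AI Configs and call Bedrock
```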

Complete example

Here is a complete working example that combines all the steps.


What to explore next

After you have the basic integration working, you can extend it with:

- Tools for calling external functions from your workflows

- Online evaluations to score response quality automatically

- Experiments to compare AI Config variations statistically

- Agents for multi-step workflows

For more AI Configs guides, read the other guides in the AI Configs guides section.

Troubleshooting

If you are experiencing problems with your configuration, this section lists common errors and solutions.

Metrics not appearing

If metrics do not appear on the AI Insights dashboard:

- Verify that you are calling the tracker methods (track_bedrock_converse_metrics in Python, trackBedrockConverseMetrics in Node.js).

- Ensure you call flush() before closing the client, especially for short-lived scripts.

- Wait at least one minute for metrics to process.

SDK initialization failures

If the LaunchDarkly SDK fails to initialize:

- Verify your SDK key is correct and matches the environment you are targeting.

- Check that your network can reach LaunchDarkly servers.

- Review the SDK logs for specific error messages.

Config returns fallback value

If you always receive the fallback configuration:

- Verify targeting is enabled for your AI Config.

- Check that the AI Config key in your code matches the key in LaunchDarkly.

- Ensure your context matches the targeting rules.

Amazon Bedrock errors

If you receive Amazon Bedrock API errors:

- Verify your AWS credentials are set correctly and have the bedrock:InvokeModel permission, which the Converse API requires.

- Confirm model access is granted for the model ID referenced in your AI Config.

- Ensure the model ID in your AI Config matches an available Amazon Bedrock model in your region.

Conclusion

In this guide, you connected an Amazon Bedrock-powered application to LaunchDarkly AI Configs. You can now:

- Manage prompts and model settings in LaunchDarkly without code changes

- Track token usage, latency, and success rates automatically

- Use template variables to customize prompts per user

Want to know more? Start a trial.
