Release testing is a critical phase in software development that verifies new features and updates are ready for users. This quick explainer breaks down the essential terms, methods, and best practices to help you get started.

Whether you're new to software development or looking to improve your release strategy, this overview will give you the know-how and tips to implement effective release testing in your projects.

What is release testing?

Release testing refers to coding practices and test strategies designed to identify and eliminate errors and bugs before the software reaches end-users. It focuses on a software release candidate—a snapshot of the code baseline that includes all new features and bug fixes intended for the upcoming release. This candidate is typically packaged and labeled as "production-ready," but it's the job of release testing to verify this claim.

Release testing comprises all the development and testing activities that ensure a software release candidate is ready for users.

The primary objectives of release testing are to:

- Build confidence in the release candidate

- Identify potential issues in a controlled environment

- Guarantee the software meets quality standards and user expectations

Release testing is not a single, monolithic process but rather a comprehensive strategy that incorporates various testing methods. It's an approach that aims to "break" the system in a controlled environment, allowing development teams to focus their quality assurance efforts on the release candidate.

Finding flaws before the software reaches end users gives your teams time to address issues proactively and release more stable and reliable products.

Common release testing techniques and methods

Release testing encompasses a range of techniques and methods. Each approach serves a specific purpose in the testing process and contributes to the overall reliability and performance of the software. Here are some key techniques and methods used in release testing:

- Quality Assurance (QA): The systematic process of checking whether a product or service meets specified requirements. QA in software development involves various testing methods to confirm that the software meets quality standards before release.

- Regression Testing: The process of retesting existing software functionalities to guarantee that new code changes haven't adversely affected previously working features. This type of testing helps maintain the stability of the software across updates.

- User Acceptance Testing (UAT): A phase of testing where actual users or clients test the software to verify it can handle required tasks in real-world scenarios. UAT is typically one of the final stages of testing before software release.

- Functional Testing: The process of testing individual functions or features of a software application. It checks that each function operates according to the specified requirements and produces the expected output.

- Performance Testing: A type of testing to determine how a system performs under a particular workload. It helps identify and eliminate performance bottlenecks in the software application.

- Integration Testing: The phase where individual software modules are combined and tested as a group. It aims to expose faults in the interaction between integrated units.

- Automated Testing: The use of special software tools to execute tests and compare actual outcomes with predicted outcomes. Automated testing significantly speeds up the testing process and improves test coverage.

- Test Cases: Specific sets of conditions under which a tester will determine whether an application or software system is working correctly. Well-designed test cases make for more effective testing.

- Defect Tracking: The process of reporting, tracking, and managing bugs or issues found during testing. Defect tracking helps ensure that all identified problems are addressed before release.
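To make a few of these terms concrete, here is a minimal sketch of automated test cases written with Python's built-in `unittest` module. The function under test, `apply_discount`, is a hypothetical stand-in for real application code. Each test case states a condition and an expected outcome, and rerunning the suite against every release candidate is a simple form of regression testing.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical function under test: return price reduced by percent."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountRegressionTests(unittest.TestCase):
    """Each method is one test case: a condition plus the expected outcome."""

    def test_typical_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_zero_discount_leaves_price_unchanged(self):
        self.assertEqual(apply_discount(80.0, 0), 80.0)

    def test_invalid_percent_is_rejected(self):
        with self.assertRaises(ValueError):
            apply_discount(80.0, 120)


def run_suite() -> unittest.TestResult:
    """Run the suite programmatically, as a CI job might, and return the result."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(
        ApplyDiscountRegressionTests
    )
    return unittest.TextTestRunner(verbosity=0).run(suite)


if __name__ == "__main__":
    result = run_suite()
    print("passed" if result.wasSuccessful() else "failed")
```

If a later code change breaks one of these behaviors, the corresponding test fails and the issue can be logged in a defect tracker before the release goes out.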

How to get started with release testing

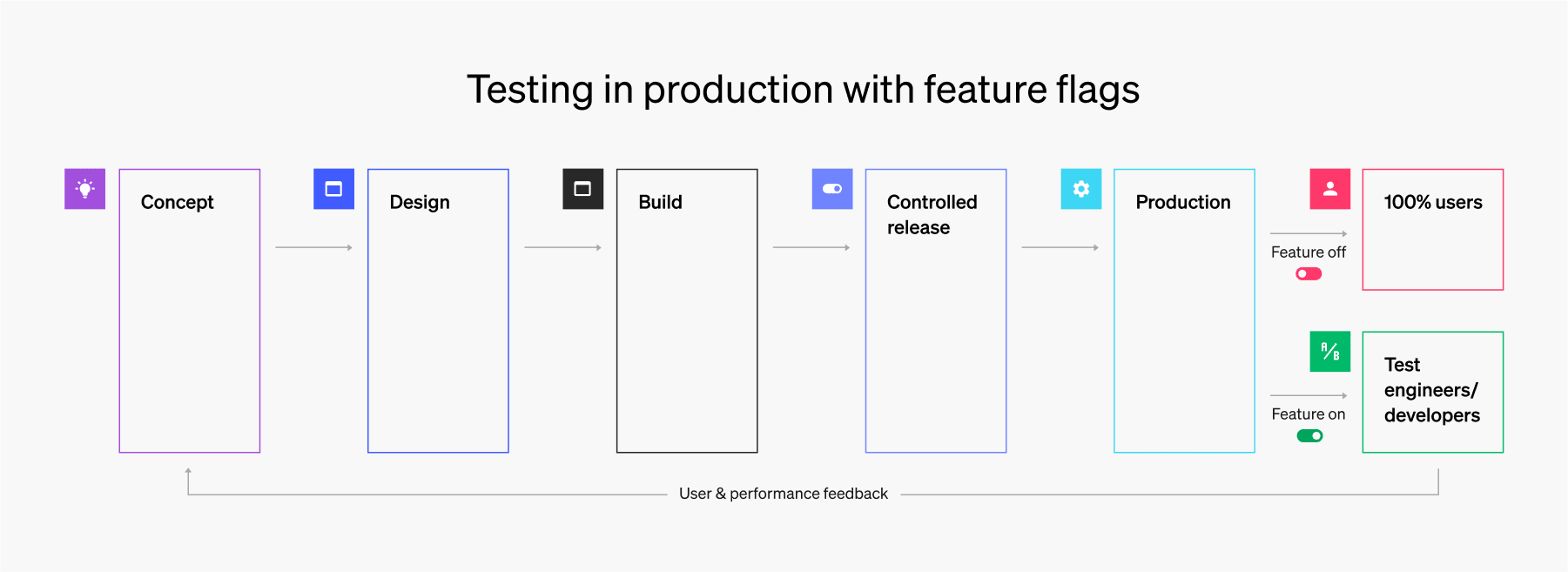

Modern release testing, particularly testing in production, provides valuable insights into real-world performance. Testing in production means evaluating new functionality on a live production server. Developers have traditionally perceived it as a risky practice, but CI/CD principles and feature flags reduce that risk.

Here's how to get started with release testing (the right way):

- Understand testing in production: Evaluate new functionality on live servers for accurate, real-world insights. Mitigate risks through modern practices and tools.

- Leverage CI/CD principles: Test small, incremental changes and implement automated testing and deployment pipelines.

- Implement feature flags: Control access to new functionality and enable quick disabling of problematic features without restarts.

- Adopt progressive rollout strategies: Use canary or dark launches for limited initial exposure. Gradually increase user access through percentage rollouts or targeted deployments.

- Monitor and analyze: Track system performance metrics closely. Gather and analyze user feedback and usage data.

- Be ready to roll back: Have a plan for quick feature disabling or rollback. Learn from issues to improve future releases.
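The steps above can be sketched in a few lines of Python. This is an illustrative, in-memory model and not the LaunchDarkly SDK: the `FeatureFlag` class, its fields, and the thresholds are all assumptions made for the example. It shows a percentage rollout with stable user bucketing, a kill switch, and an automatic rollback when the observed error rate gets too high.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class FeatureFlag:
    """Hypothetical in-memory flag; a real system would read this from a flag service."""
    key: str
    enabled: bool = True          # kill switch: False turns the feature off for everyone
    rollout_percent: int = 0      # share of users (0-100) who see the feature
    max_error_rate: float = 0.05  # assumed rollback threshold
    min_requests: int = 20        # assumed: don't judge the rollout on too few samples
    requests: int = 0
    errors: int = 0

    def bucket(self, user_id: str) -> int:
        """Map a user to a stable bucket in [0, 100) so exposure stays consistent."""
        digest = hashlib.sha256(f"{self.key}:{user_id}".encode()).hexdigest()
        return int(digest, 16) % 100

    def is_on(self, user_id: str) -> bool:
        return self.enabled and self.bucket(user_id) < self.rollout_percent

    def record(self, success: bool) -> None:
        """Track outcomes and disable the flag if the error rate passes the threshold."""
        self.requests += 1
        if not success:
            self.errors += 1
        enough_data = self.requests >= self.min_requests
        if enough_data and self.errors / self.requests > self.max_error_rate:
            self.enabled = False  # roll back without a redeploy or restart


# Canary: expose the feature to roughly 10% of users.
flag = FeatureFlag(key="new-checkout", rollout_percent=10)
users = [f"user-{i}" for i in range(1000)]
canary = [u for u in users if flag.is_on(u)]

# Widen exposure; users already in the canary group stay included.
flag.rollout_percent = 50
wider = [u for u in users if flag.is_on(u)]

# Simulated monitoring: 3 failures in 20 requests (15%) exceeds the threshold.
for i in range(20):
    flag.record(success=(i >= 3))
```

Hashing the flag key together with the user ID keeps each user in the same bucket across requests, so raising `rollout_percent` only adds users and never removes anyone who already had the feature. Once the simulated error rate crosses the threshold, `flag.enabled` flips to `False` and `is_on` returns `False` for every user.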

Testing in production gives developers more representative data on system performance and user engagement, earlier in the release process, than pre-production testing does. It can also reduce the time and effort spent maintaining pre-production test and staging environments.

Try it for yourself. Start your free full-access LaunchDarkly 14-day trial to get access to feature flags, context-aware targeting, experimentation, and more.