This topic explains how to manage guarded rollouts.

All LaunchDarkly accounts include a limited trial of guarded rollouts. Use this trial to evaluate the feature in real-world releases.

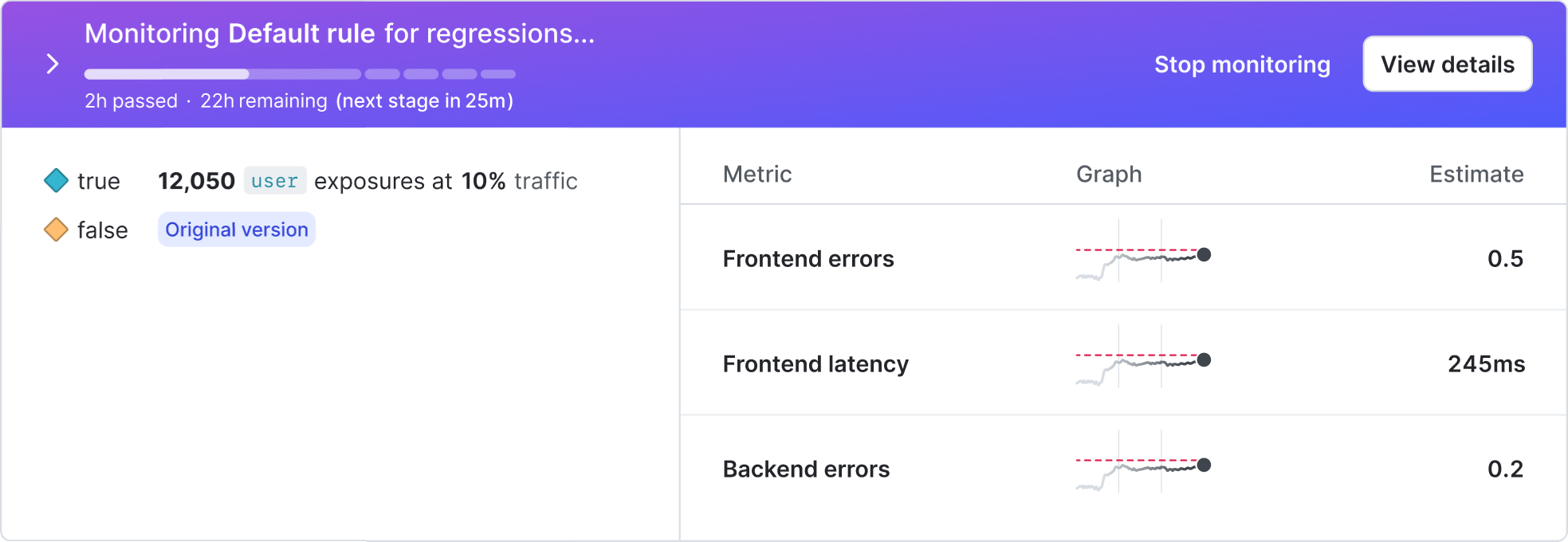

You can monitor a guarded rollout on a flag’s Monitoring tab. The Monitoring tab shows the rollout progression, how many contexts have been served the new variation, and how each metric is performing.

From the Monitoring tab, you can:

In a guarded rollout, each metric appears in its own tile. Each tile contains a difference chart.

Each metric tile includes:

On the Targeting tab, metric tiles appear in up to three columns in a single row. On the Monitoring tab, each metric appears on its own tab. Hover over a chart to view the new and original variation estimates, the confidence interval, and the number of contexts served at that point in time.

Difference charts are available only for guarded rollouts. Progressive rollouts and experiments use other visualization methods.

In January 2026, LaunchDarkly began using sequential testing to evaluate mean and percentile metrics in guarded rollouts.

If all data points in a rollout were collected after this change, which is always the case for newly created guarded rollouts, LaunchDarkly displays results using this frequentist sequential testing approach.

If any data points in the rollout were collected before this change, LaunchDarkly displays results using the original Bayesian method. That legacy method used a probability-based model to estimate whether the new variation performed worse than the original variation.

As a result, metric behavior and hover details may differ across rollouts depending on when the underlying data was recorded.
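The rule above can be sketched as a small function. This is only an illustration of the described behavior, not LaunchDarkly's implementation: the function name, the return labels, and the exact cutover instant are assumptions, since this topic gives only "January 2026" as the date of the change.

```python
from datetime import datetime, timezone


def analysis_method(data_point_times, cutover):
    """Return the method used to display results for one rollout.

    data_point_times: datetimes at which the rollout's data points were collected.
    cutover: the instant sequential testing took effect (assumed, see below).
    """
    # A single data point from before the change keeps the whole rollout
    # on the legacy Bayesian display.
    if any(t < cutover for t in data_point_times):
        return "bayesian"
    # Otherwise, including a brand-new rollout with no data yet, results
    # use frequentist sequential testing.
    return "sequential"


# Hypothetical cutover instant, chosen only for illustration.
cutover = datetime(2026, 1, 15, tzinfo=timezone.utc)

new_rollout = [datetime(2026, 2, 1, tzinfo=timezone.utc)]
older_rollout = [
    datetime(2025, 12, 20, tzinfo=timezone.utc),
    datetime(2026, 2, 1, tzinfo=timezone.utc),
]
print(analysis_method(new_rollout, cutover))
print(analysis_method(older_rollout, cutover))
```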

During a guarded rollout, LaunchDarkly recalculates metric results on a regular schedule called the interval of evaluation.

At each interval, LaunchDarkly processes recent metric events, recalculates the absolute difference between the new and original variations, and evaluates whether the result is statistically significant using sequential testing. These evaluations occur roughly every minute. If metric events arrive more frequently, LaunchDarkly aggregates them into the next interval.
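To make the aggregation step concrete, the following sketch groups metric events by the evaluation that would next pick them up. It assumes a fixed 60-second interval and events given as `(timestamp_seconds, value)` pairs. Both are simplifications for illustration, and `bucket_events` is a hypothetical helper, not part of any LaunchDarkly SDK.

```python
import math
from collections import defaultdict

INTERVAL_SECONDS = 60  # assumption: evaluations run "roughly every minute"


def bucket_events(events, interval=INTERVAL_SECONDS):
    """Group (timestamp_seconds, value) events by the next evaluation time."""
    buckets = defaultdict(list)
    for ts, value in events:
        # Round the timestamp up to the next interval boundary, so events
        # that arrive between evaluations are aggregated into the next one.
        evaluation_time = math.ceil(ts / interval) * interval
        buckets[evaluation_time].append(value)
    return dict(buckets)


events = [(5, 1.0), (42, 2.0), (61, 3.0)]
print(bucket_events(events))
```

In this example the first two events fall into the evaluation at 60 seconds, and the third waits for the evaluation at 120 seconds.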

These regular updates ensure that the monitoring charts reflect the most recent metric data throughout the rollout.

Each guarded rollout has a monitoring window that spans the full rollout duration. LaunchDarkly uses all metric data collected within that window to evaluate performance.

If performance degrades late in the rollout, LaunchDarkly weighs that new data against earlier data. Larger degradations are detected more quickly, while smaller changes may require additional data before statistical significance is reached. To improve responsiveness, use shorter stage durations or metrics that emit data more frequently.

New variation estimates are point estimates of the metric value for the new variation. A point estimate uses sample data to calculate a single value that approximates the metric’s true value.

For metrics that use a percentile analysis method, such as latency at the 99th percentile, the new variation estimate is the estimated percentile value for contexts served the new variation.

For metrics that use the average analysis method, the new variation estimate is the average metric value for contexts served the new variation.
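As a rough illustration of the two kinds of point estimate, the sketch below computes an average and a percentile from a small sample. The nearest-rank percentile used here is an assumption made for simplicity; this topic does not state which percentile estimator LaunchDarkly uses, and the function names are hypothetical.

```python
import math
from statistics import mean


def average_estimate(values):
    """Point estimate for the average analysis method: the sample mean."""
    return mean(values)


def percentile_estimate(values, pct):
    """Point estimate for a percentile analysis method (nearest-rank)."""
    ordered = sorted(values)
    # Nearest-rank: the smallest value with at least pct% of the sample
    # at or below it. Clamp the rank to the valid range 1..len(ordered).
    rank = math.ceil(pct / 100 * len(ordered))
    rank = min(max(rank, 1), len(ordered))
    return ordered[rank - 1]


# Hypothetical latencies (ms) for contexts served the new variation.
latencies_ms = [120, 95, 110, 400, 105]
print(average_estimate(latencies_ms))
print(percentile_estimate(latencies_ms, 99))
```

With only five samples, the 99th percentile is simply the largest observation, which is why percentile estimates tend to need more data than averages before they stabilize.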

The difference from the original variation measures how much the metric value for the new variation differs from the original variation.

LaunchDarkly calculates this value as the absolute difference between the two variations using the metric’s unit:

For binary metrics, such as conversion rate or error rate, absolute difference is expressed in percentage points (pp). Percentage points represent the arithmetic difference between two percentage values.
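A short worked example may help separate percentage points from relative change. The rates below are made up, and `percentage_point_difference` is a hypothetical helper written for this illustration.

```python
def percentage_point_difference(new_rate, original_rate):
    """Absolute difference between two rates, in percentage points.

    Both rates are fractions between 0 and 1, for example 0.025 for 2.5%.
    """
    return (new_rate - original_rate) * 100


# Hypothetical error rates: 2.5% on the new variation, 2.0% on the original.
new_rate = 25 / 1000
original_rate = 20 / 1000

pp = percentage_point_difference(new_rate, original_rate)
relative = (new_rate - original_rate) / original_rate * 100

print(f"absolute difference: {pp:.2f} pp")
print(f"relative change: {relative:.0f}%")
```

Here the absolute difference is about half a percentage point, even though the error rate grew by about a quarter in relative terms. The difference described in this section is the absolute one.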

The confidence interval below the difference shows the range of values within which the true difference is likely to fall across repeated measurements.

When sequential testing determines that the absolute difference is statistically significant and indicates a negative impact based on the metric’s success criteria, LaunchDarkly identifies a regression.
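The decision described above can be sketched as follows. This is a simplified model, not LaunchDarkly's algorithm: it assumes that "statistically significant" can be read as the confidence interval for the difference (new minus original) excluding zero, and it represents the metric's success criteria as a hypothetical `lower_is_better` flag.

```python
def is_regression(ci_lower, ci_upper, lower_is_better):
    """Return True when the interval shows a significant negative impact.

    ci_lower, ci_upper: bounds of the confidence interval for
        (new variation - original variation).
    lower_is_better: True for metrics such as error rate or latency,
        False for metrics such as conversion rate.
    """
    if lower_is_better:
        # The new variation is significantly higher, which is worse.
        return ci_lower > 0
    # The new variation is significantly lower, which is worse.
    return ci_upper < 0


# Error rate up by between 0.1 and 0.9 pp: a regression.
print(is_regression(0.1, 0.9, lower_is_better=True))
# Interval still includes zero: not yet significant.
print(is_regression(-0.2, 0.9, lower_is_better=True))
```

A significant difference in the favorable direction, such as a clearly lower error rate, does not count as a regression under this model.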

To manually roll back a release after LaunchDarkly has detected a regression:

If you are using a guarded rollout on a prerequisite flag and you roll back the change, LaunchDarkly will not also roll back any changes on the dependent flags. You must roll back changes on dependent flags separately.

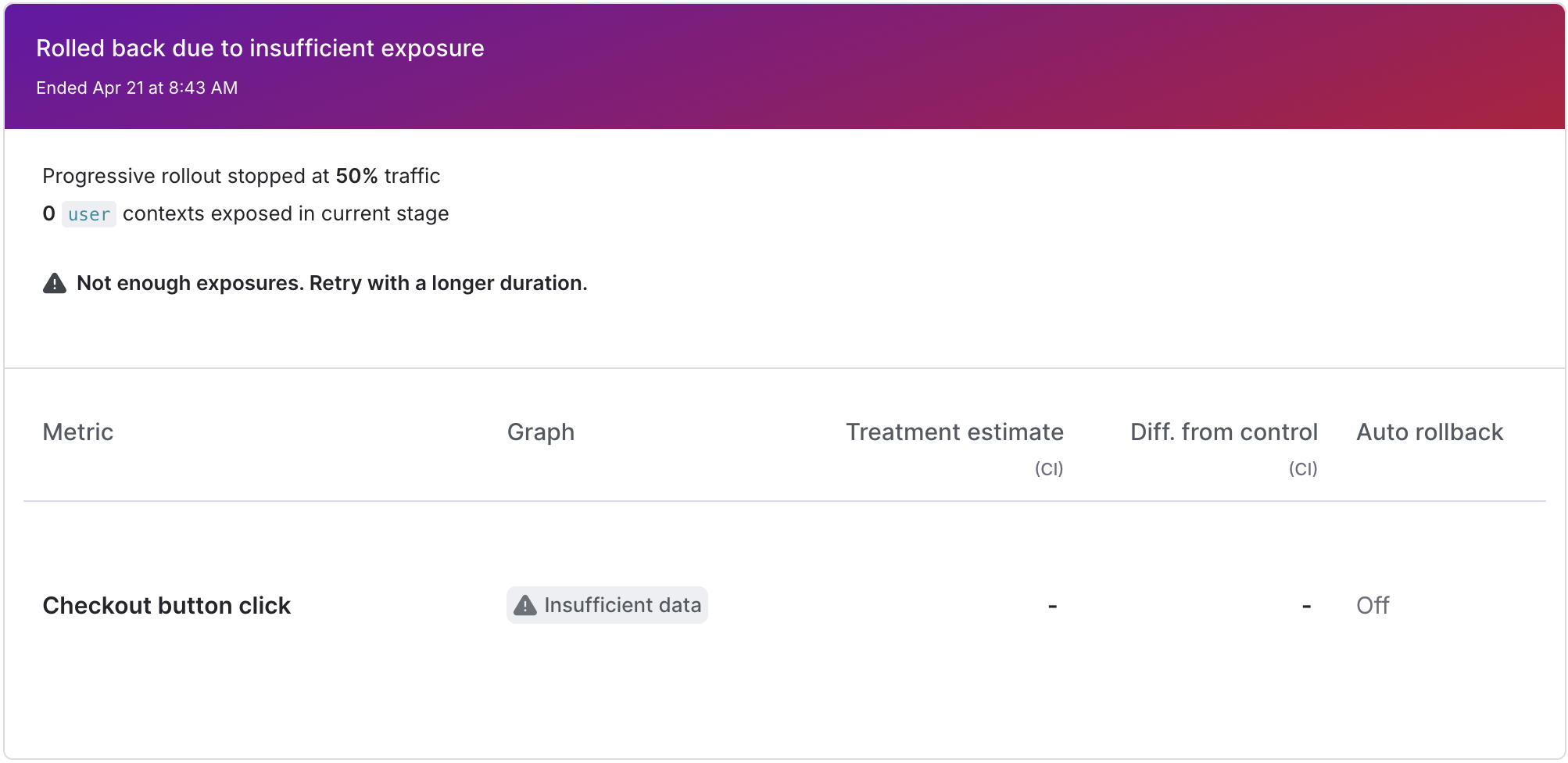

LaunchDarkly automatically rolls back a guarded rollout in these scenarios:

When LaunchDarkly automatically rolls back a rollout, it sends an email and, if you use the LaunchDarkly Slack or Microsoft Teams app, a Slack or Teams notification.

If you are using a guarded rollout on a prerequisite flag and LaunchDarkly automatically rolls back the change, it will not also roll back any changes on the dependent flags. You must roll back changes on dependent flags separately.

If LaunchDarkly detects a regression on a metric but you want to continue with the release, you can dismiss the regression alert for that metric. You can have multiple regressions on the same rollout if you have more than one metric attached to the rollout.

To dismiss an alert:

If LaunchDarkly detects another regression, you will receive another alert. If LaunchDarkly does not detect any additional regressions, the release will continue.

To stop monitoring before the monitoring window is over:

You can also use the REST API. To learn more, read Update feature flag in the API documentation.
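As a starting point, the sketch below builds a semantic patch request for the Update feature flag endpoint using only the Python standard library. This topic does not name the instruction that stops monitoring, so the `instructions` list is left to the caller and the value shown in the example is a placeholder, not a real instruction kind. The helper name, keys, and token are also illustrative. Check the API documentation for the exact instruction before sending anything.

```python
import json
import urllib.request


def build_flag_patch_request(project_key, flag_key, environment_key,
                             instructions, api_token):
    """Build (but do not send) a semantic patch request for a feature flag."""
    url = f"https://app.launchdarkly.com/api/v2/flags/{project_key}/{flag_key}"
    body = json.dumps({
        "environmentKey": environment_key,
        "instructions": instructions,
    }).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="PATCH",
        headers={
            "Authorization": api_token,
            # This content type marks the body as a semantic patch.
            "Content-Type": "application/json; domain-model=launchdarkly.semanticpatch",
        },
    )


request = build_flag_patch_request(
    project_key="my-project",        # hypothetical project key
    flag_key="my-flag",              # hypothetical flag key
    environment_key="production",    # hypothetical environment key
    # Placeholder only: replace with the instruction from the API docs.
    instructions=[{"kind": "<instruction-kind-from-api-docs>"}],
    api_token="api-xxxx",            # hypothetical access token
)
print(request.get_method(), request.full_url)
# To send it: urllib.request.urlopen(request)
```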