BigQuery native Experimentation

Contact us for help with configuring BigQuery native Experimentation

If you have both Data Export and Experimentation enabled for your LaunchDarkly account, you should have access to BigQuery native Experimentation. If you do not have access or need help getting started, contact your LaunchDarkly representative or start a Support ticket.

Overview

This topic explains how to set up the BigQuery native Experimentation integration. BigQuery native Experimentation enables you to run experiments in LaunchDarkly using data directly from your Google Cloud BigQuery data warehouse. This lets LaunchDarkly experiments read and analyze your metrics data stored in BigQuery, without requiring you to send event data through LaunchDarkly’s SDKs.

Prerequisites

Before setting up BigQuery native Experimentation, ensure that you have:

- The BigQuery Data Export integration set up in LaunchDarkly for your selected project and environment.

- A Google Cloud Platform (GCP) project with BigQuery enabled.

- Permission to create service accounts, datasets, and IAM bindings in your GCP project.

- Access to Google Cloud Shell, or the `gcloud` CLI and `bq` CLI installed locally.

- A LaunchDarkly role of Owner or Admin, or a custom role that allows the following actions:

- Metric event data in your BigQuery project that you want to use for Experimentation.

Step 1: Gather your BigQuery project information

To begin, collect the following information about your Google Cloud project:

- GCP project ID: Available in the GCP Console, under the top project dropdown, labeled Project ID. This is the Google Cloud project that holds your BigQuery resources. Legacy domain-scoped project IDs are also supported.

- Dataset location: Available in BigQuery under your dataset labeled Dataset info. This is the BigQuery region where your datasets are located, for example `US`, `us-central1`, or `asia-south1`. All datasets must be in the same location. You cannot change this after saving.

- Results dataset ID: A name for the new dataset where LaunchDarkly will write computed experiment results. Use only letters, numbers, and underscores, for example `ld_experimentation_results`. The setup script will create this dataset for you.

- Data Export dataset ID: Available in BigQuery under your Data Export destination dataset. This is the dataset where your BigQuery Data Export destination writes evaluation events. This is the same dataset you configured in Data Export, for example `ld_data_export`.

- Metrics dataset ID (optional): Available in BigQuery under your metrics dataset. This is the dataset containing your metric events table. If you omit this, the Google Cloud service account will have the BigQuery Data Viewer IAM role on your GCP project, rather than only on the metrics dataset. You cannot change this after saving.
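Before entering these values in LaunchDarkly, you may want to check that your dataset IDs follow the naming rule above. The following is a minimal sketch, not part of the LaunchDarkly setup script. The `valid_dataset_id` helper is hypothetical, and the dataset IDs are the example values from this topic.

```shell
# Hypothetical helper: succeeds only if the ID is non-empty and uses
# only letters, numbers, and underscores.
valid_dataset_id() {
  case "$1" in
    '' | *[!A-Za-z0-9_]*) return 1 ;;
    *) return 0 ;;
  esac
}

# Example values from this topic; replace them with your own.
RESULTS_DATASET_ID="ld_experimentation_results"
DATA_EXPORT_DATASET_ID="ld_data_export"

for id in "$RESULTS_DATASET_ID" "$DATA_EXPORT_DATASET_ID"; do
  if valid_dataset_id "$id"; then
    echo "ok: $id"
  else
    echo "invalid dataset ID: $id" >&2
  fi
done
```

An ID such as `ld-results` fails this check because it contains a hyphen.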

Step 2: Configure the LaunchDarkly integration

Now, you will configure the BigQuery native Experimentation integration in LaunchDarkly. This is a separate LaunchDarkly integration. It looks similar to the BigQuery Data Export integration, but you must set it up separately.

To set up the integration:

- Navigate to the Integrations page.

- Search for and select BigQuery Native Experimentation.

- Click Add integration. The configuration panel appears.

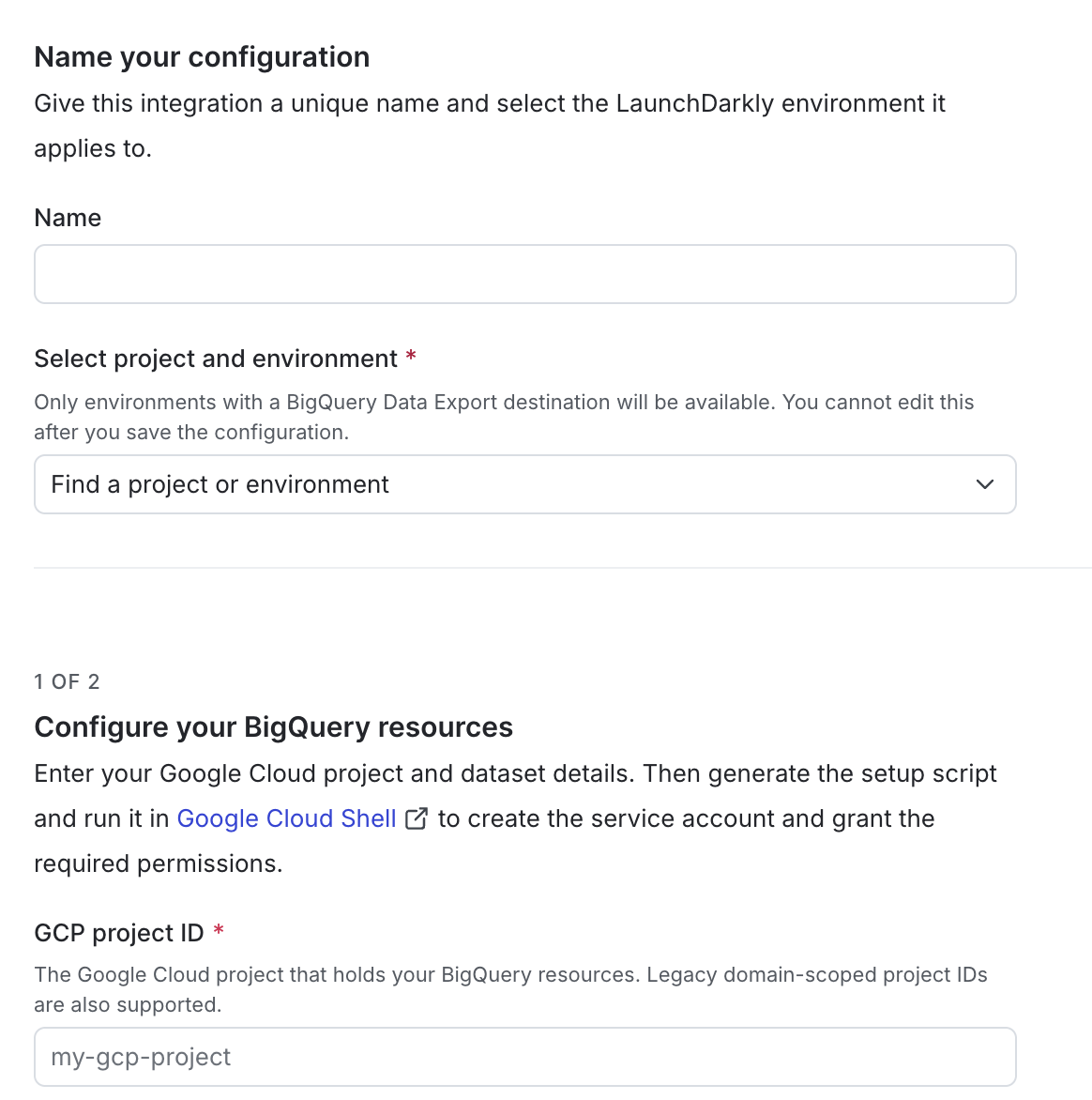

- Enter an integration Name.

- Select the LaunchDarkly Project and environment for this integration. Only environments with a BigQuery Data Export destination are available.

- Enter your GCP project ID.

- Select your Dataset location.

- Enter your Results dataset ID.

- Enter your Data Export dataset ID. This is the same dataset you configured when setting up BigQuery Data Export.

- (Optional) Enter your Metrics dataset ID.

- Click Generate setup script. LaunchDarkly generates a Google Cloud setup script. Copy and save this script for use in the next step.

Keep the configuration screen open, as you will return to it in a later step.

Step 3: Set up Google Cloud resources

LaunchDarkly needs a GCP service account with permissions to take actions in your GCP project. You can either run the setup script you generated in Step 2, or complete the steps manually in the Google Cloud Console.

Option A: Run the setup script

Run the script using one of the following methods:

- Google Cloud Shell (no local install needed): Open the Cloud Shell editor terminal, then paste and run the script you generated in the last step of Step 2.

- Local terminal: If you have `gcloud` and `bq` installed locally, paste and run the script in your terminal.

After the script completes, run `cat ld-experimentation-key.json` to print the JSON key file contents, then copy them to use in Step 4.
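Before copying the key, you can run a quick sanity check on the file. The sketch below assumes the key file follows the usual Google Cloud service account key layout, with `type`, `client_email`, and `private_key` fields. The `check_key_file` helper is hypothetical and does not verify that the key actually works.

```shell
# Hypothetical helper: basic sanity check of a service account key file.
check_key_file() {
  key_file=$1
  if [ ! -s "$key_file" ]; then
    echo "missing or empty: $key_file" >&2
    return 1
  fi
  # Each of these fields is expected in a service account key.
  for field in type client_email private_key; do
    if ! grep -q "\"$field\"" "$key_file"; then
      echo "field not found: $field" >&2
      return 1
    fi
  done
  # The "type" field should identify a service account.
  if ! grep -Eq '"type"[[:space:]]*:[[:space:]]*"service_account"' "$key_file"; then
    echo "not a service account key: $key_file" >&2
    return 1
  fi
  return 0
}
```

For example, `check_key_file ld-experimentation-key.json && cat ld-experimentation-key.json` prints the key only if the check passes. Treat the file as a secret, because it grants access to your GCP project.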

Option B: Set up manually in the Google Cloud Console

If you prefer to use the Google Cloud Console UI instead of the script, complete the manual setup steps below.

Create the service account

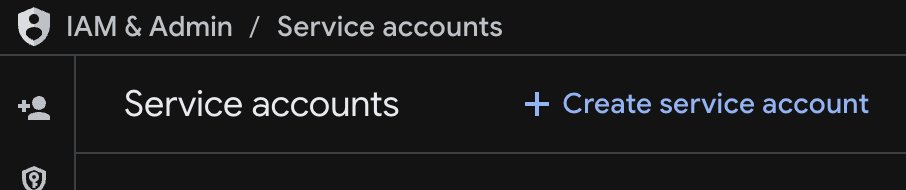

- In the Google Cloud Console, navigate to IAM & Admin > Service Accounts.

- Click Create service account.

- Enter a name that meets GCP naming requirements. For example, `launchdarkly-experimentation`.

- Click Done. Do not grant any roles at this step.
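If you prefer the command line, the same step can be sketched with `gcloud`. This is an illustration only, and the generated setup script may do this differently. The `valid_sa_name` and `sa_email` helpers and the `my-project` project ID are hypothetical. The naming check reflects the commonly documented rules for service account IDs, so confirm it against GCP's current requirements. By default the script only prints the command. Set `GCLOUD=gcloud` to run it.

```shell
# Print-only by default; set GCLOUD=gcloud to actually run the command.
GCLOUD=${GCLOUD:-"echo gcloud"}

# Hypothetical helper: 6-30 characters; lowercase letters, digits, and
# hyphens; starts with a letter; does not end with a hyphen.
valid_sa_name() {
  len=${#1}
  if [ "$len" -lt 6 ] || [ "$len" -gt 30 ]; then return 1; fi
  case "$1" in
    [!a-z]* | *[!a-z0-9-]* | *-) return 1 ;;
  esac
  return 0
}

# Hypothetical helper: builds the service account email for a standard
# project ID. Domain-scoped project IDs are not handled here.
sa_email() {
  printf '%s@%s.iam.gserviceaccount.com\n' "$1" "$2"
}

SA_NAME="launchdarkly-experimentation"   # example name from this topic
PROJECT_ID="my-project"                  # placeholder; use your project ID

if valid_sa_name "$SA_NAME"; then
  $GCLOUD iam service-accounts create "$SA_NAME" --project="$PROJECT_ID"
  echo "Service account email: $(sa_email "$SA_NAME" "$PROJECT_ID")"
else
  echo "invalid service account name: $SA_NAME" >&2
fi
```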

Create the results dataset

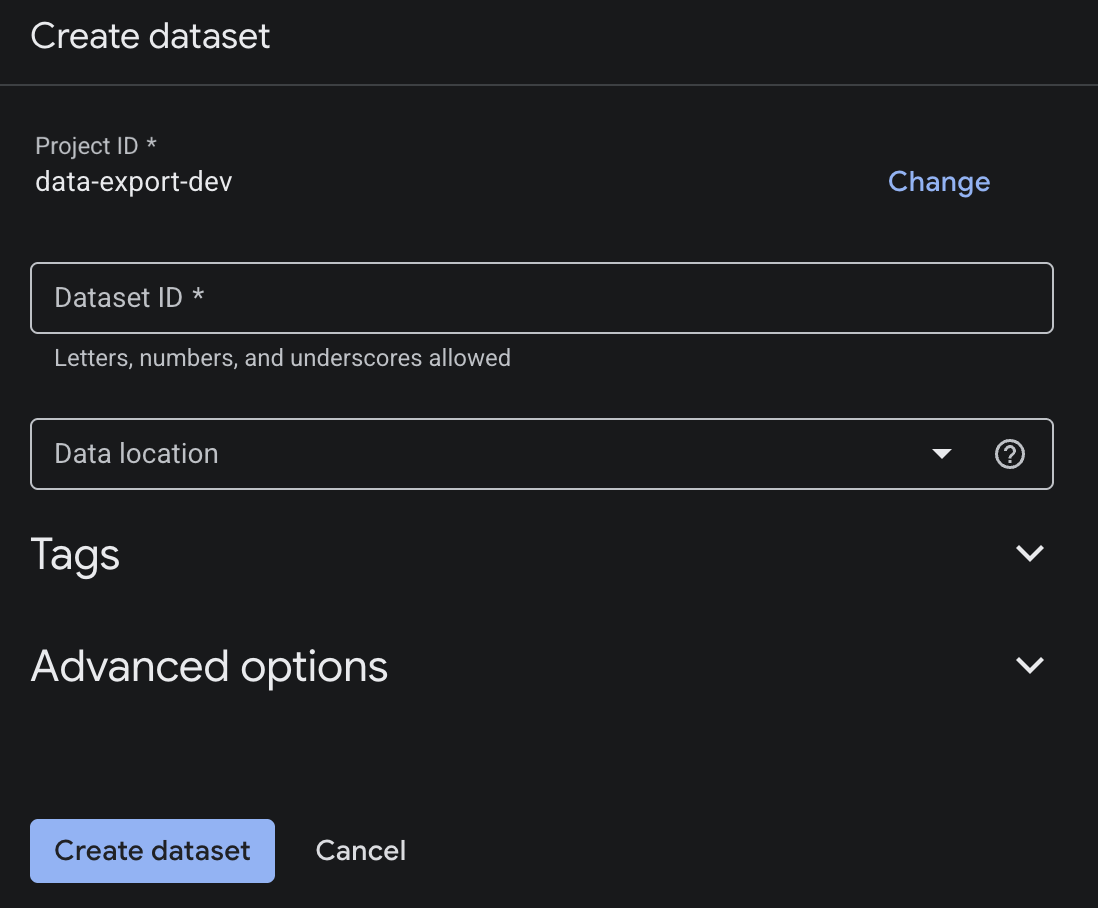

- In the Google Cloud Console, navigate to BigQuery.

- Select your project and click Create dataset.

- Enter your results dataset ID.

- Select the same location as your other datasets.

- Click Create dataset.

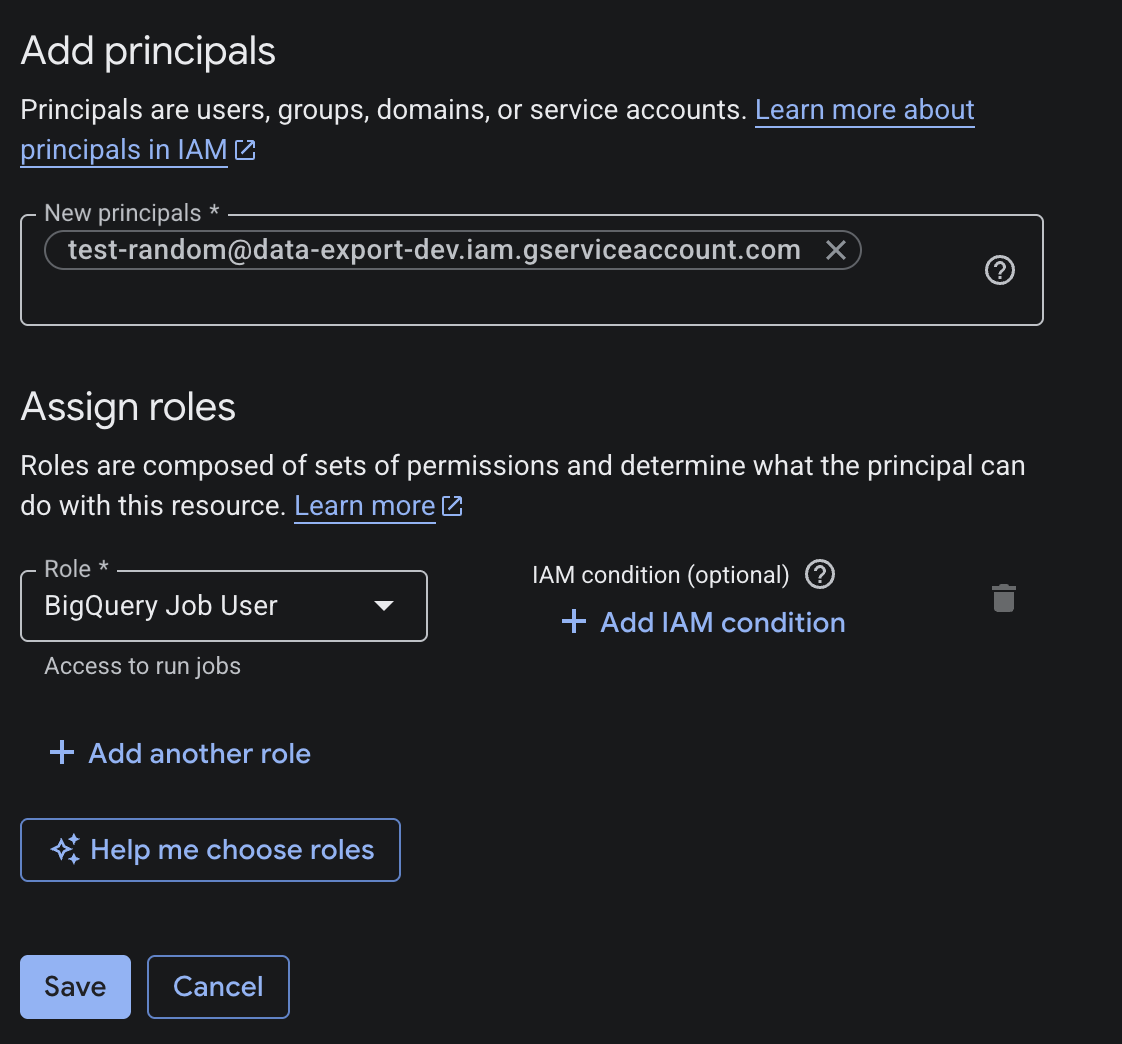
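As a command-line alternative, the results dataset can be created with `bq mk`. The following is a sketch under assumed values: `my-project` and `US` are placeholders, and `create_results_dataset` is a hypothetical helper. By default the script only prints the command. Set `BQ=bq` to run it.

```shell
# Print-only by default; set BQ=bq to actually run the command.
BQ=${BQ:-"echo bq"}

# Hypothetical helper.
# $1 = GCP project ID, $2 = dataset location, $3 = results dataset ID
create_results_dataset() {
  $BQ --location="$2" mk --dataset "$1:$3"
}

# Placeholder values; the location must match your other datasets.
create_results_dataset "my-project" "US" "ld_experimentation_results"
```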

Grant the BigQuery Job User role at the GCP project level

- Navigate to IAM & Admin > IAM.

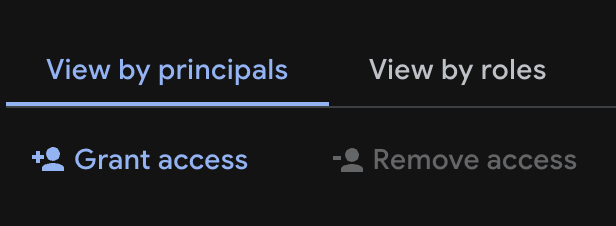

- Click Grant Access.

- Enter the full service account email, for example `YOUR_SA_NAME@YOUR_PROJECT_ID.iam.gserviceaccount.com`.

- Select the role BigQuery Job User.

- Click Save.

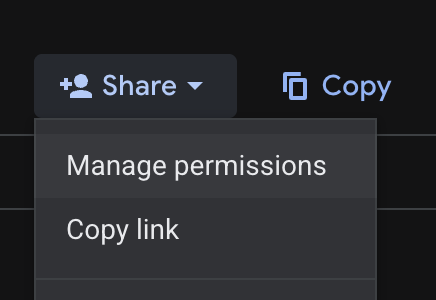

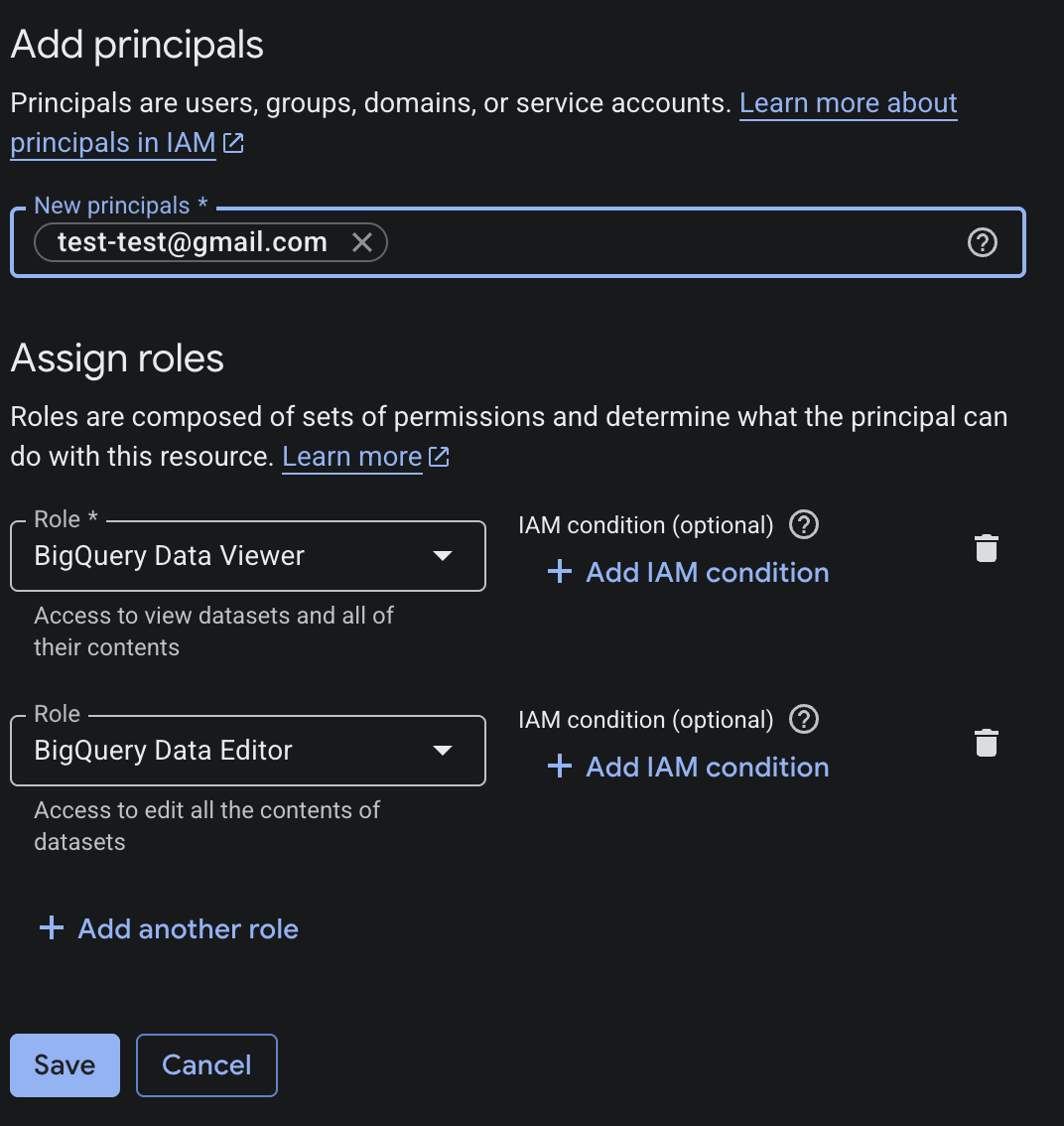
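The project-level grant can also be sketched with `gcloud projects add-iam-policy-binding`, using the role ID `roles/bigquery.jobUser` for BigQuery Job User. The `grant_job_user` helper and the `my-project` value are hypothetical. By default the script only prints the command. Set `GCLOUD=gcloud` to run it.

```shell
# Print-only by default; set GCLOUD=gcloud to actually run the command.
GCLOUD=${GCLOUD:-"echo gcloud"}

# Hypothetical helper.
# $1 = GCP project ID, $2 = service account email
grant_job_user() {
  $GCLOUD projects add-iam-policy-binding "$1" \
    --member="serviceAccount:$2" \
    --role="roles/bigquery.jobUser"
}

# Placeholder values; replace them with your own.
grant_job_user "my-project" "YOUR_SA_NAME@YOUR_PROJECT_ID.iam.gserviceaccount.com"
```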

Grant dataset-level permissions

Grant the service account BigQuery Data Editor on the results dataset:

- In BigQuery, select the results dataset.

- Click Share > Manage permissions > Add Principal.

- Enter the service account email, select BigQuery Data Editor, and click Save.

Grant the service account BigQuery Data Viewer on the export dataset:

- In BigQuery, select the export dataset.

- Click Share > Manage permissions > Add Principal.

- Enter the service account email, select BigQuery Data Viewer, and click Save.

Grant read access for metrics

If you specified a metrics dataset:

- In BigQuery, select the metrics dataset.

- Click Share > Manage permissions > Add Principal.

- Enter the service account email, select BigQuery Data Viewer, and click Save.

If you did not specify a metrics dataset:

- Navigate to IAM & Admin > IAM.

- Click Grant Access.

- Enter the service account email, select BigQuery Data Viewer at the GCP project level, and click Save.

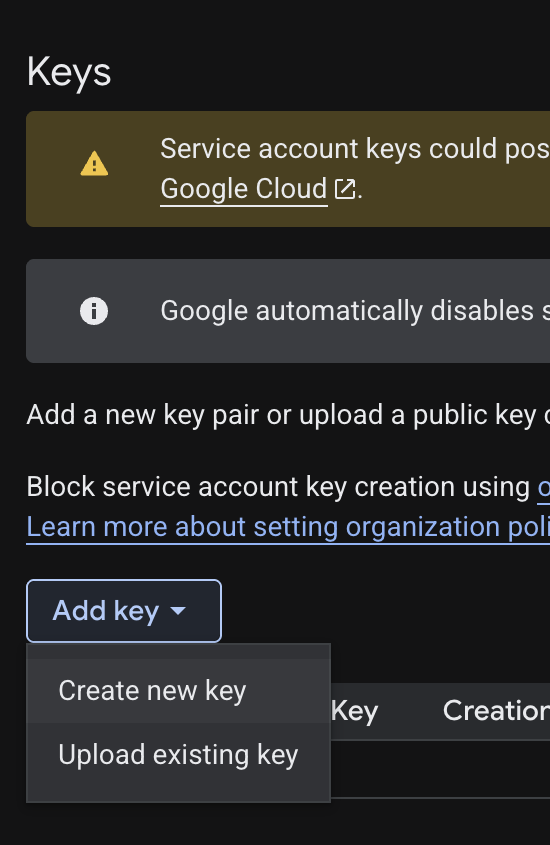
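The dataset-level grants above can also be expressed as BigQuery `GRANT` statements run through `bq query`. The sketch below is one possible approach and not necessarily what the generated setup script does. The helper names and the `my-project` value are hypothetical, and the dataset IDs are the examples from this topic. It assumes `bq` is already configured with a default project for running the query. By default the script only prints the commands. Set `BQ=bq` and `GCLOUD=gcloud` to run them.

```shell
# Print-only by default; set BQ=bq and GCLOUD=gcloud to run the commands.
BQ=${BQ:-"echo bq"}
GCLOUD=${GCLOUD:-"echo gcloud"}

# Hypothetical helper: builds a dataset-level GRANT statement.
# $1 = role ID, $2 = project ID, $3 = dataset ID, $4 = service account email
grant_sql() {
  printf 'GRANT `%s` ON SCHEMA `%s.%s` TO "serviceAccount:%s"\n' "$1" "$2" "$3" "$4"
}

# Hypothetical helper: runs (or prints) the statement with bq.
grant_on_dataset() {
  $BQ query --use_legacy_sql=false "$(grant_sql "$@")"
}

PROJECT_ID="my-project"   # placeholder
SA_EMAIL="YOUR_SA_NAME@YOUR_PROJECT_ID.iam.gserviceaccount.com"
METRICS_DATASET_ID=""     # leave empty if you did not specify one

# Data Editor on the results dataset, Data Viewer on the export dataset.
grant_on_dataset roles/bigquery.dataEditor "$PROJECT_ID" ld_experimentation_results "$SA_EMAIL"
grant_on_dataset roles/bigquery.dataViewer "$PROJECT_ID" ld_data_export "$SA_EMAIL"

# Read access for metrics: dataset-level if specified, else project-level.
if [ -n "$METRICS_DATASET_ID" ]; then
  grant_on_dataset roles/bigquery.dataViewer "$PROJECT_ID" "$METRICS_DATASET_ID" "$SA_EMAIL"
else
  $GCLOUD projects add-iam-policy-binding "$PROJECT_ID" \
    --member="serviceAccount:$SA_EMAIL" \
    --role="roles/bigquery.dataViewer"
fi
```

Scoping the viewer role to the metrics dataset grants less access than the project-level fallback, which matches the trade-off described in Step 1.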

Create and download the JSON key

- Navigate to IAM & Admin > Service Accounts.

- Select your service account.

- Click the Keys tab > Add Key > Create new key.

- Select JSON and click Create. The browser downloads a key file.
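If you are working from a terminal instead, a JSON key can be created with `gcloud iam service-accounts keys create`. In this sketch, `create_key` is a hypothetical helper, and the output file name reuses `ld-experimentation-key.json` from Option A for consistency. By default the script only prints the command. Set `GCLOUD=gcloud` to run it.

```shell
# Print-only by default; set GCLOUD=gcloud to actually run the command.
GCLOUD=${GCLOUD:-"echo gcloud"}

# Hypothetical helper.
# $1 = service account email, $2 = output key file
create_key() {
  $GCLOUD iam service-accounts keys create "$2" --iam-account="$1"
}

# Placeholder email; replace it with your service account email.
create_key "YOUR_SA_NAME@YOUR_PROJECT_ID.iam.gserviceaccount.com" "ld-experimentation-key.json"
```

Treat the key file as a secret, because it grants access to your GCP project.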

Step 4: Add your service account key

After completing the setup process:

- Return to the LaunchDarkly configuration screen.

- Paste the full contents of the JSON key file you downloaded from Google Cloud into the Service account key (JSON) field.

- After reading the Integration Terms and Conditions, check the I have read and agree to the Integration Terms and Conditions checkbox.

- Click Save configuration.

Step 5: Verify the connection

Finally, verify the connection. On the integration configuration page, check the “Connection status” indicator. A green Healthy status confirms LaunchDarkly can connect to your BigQuery project and has the correct permissions.

If the connection is not healthy, verify that:

- You completed all setup steps without errors.

- The dataset location you entered matches the region your datasets are actually in.

- The Data Export destination and the BigQuery native Experimentation integration are configured for the same LaunchDarkly environment.

Next steps

After your integration is connected, complete the following steps to start running experiments:

- Create a metric data source: point LaunchDarkly at your metric events table so it can read conversion and numeric event data.

- Create metrics: define what you want to measure using your BigQuery data.

- Create an experiment: when setting up your experiment, select Warehouse native (BigQuery) as the data source.

To learn how to analyze your results, read Analyzing experiments.

The results page for a warehouse native experiment displays the date and time the results were last updated from BigQuery. The initial load of experiment results can take up to 60 minutes to appear, and further results updates appear within 15–30 minutes.