Several People are Launching: Feature Flags at Slack

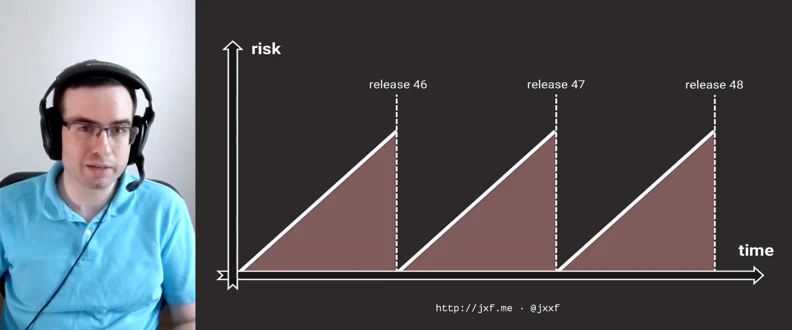

Feature flags have been an integral part of Slack’s codebase from the very beginning. As we grew as an engineering team and as a business, our original feature flag engine grew in power and complexity, to the point where it was beginning to consume more CPU than we would have liked without helping us iterate any faster, especially as our system began to break apart from a single monolith into a collection of interrelated services. In this talk, we’ll discuss the history of feature flags at Slack, some of the problems we encountered and overcame with our original configuration engine, and how we rewrote both the core engine and our configuration deployment system so that we can move both faster and more safely than ever before.
