I Love Feature Flags!

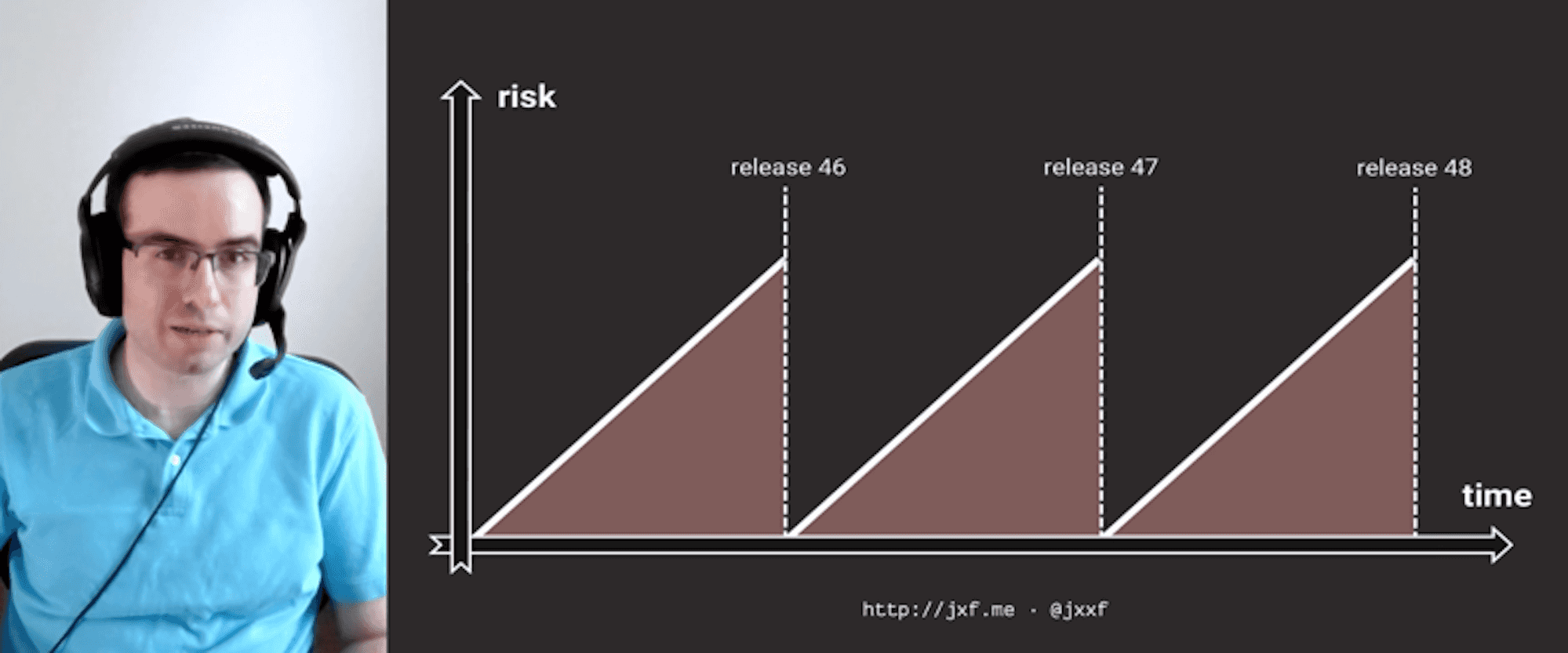

"I love feature flags!" is a quote from Jason McGee, the VP overseeing IBM Kubernetes Service. That single Slack post was the moment the IBM Kubernetes Service team knew they had fundamentally changed how they build, deliver, and operate the service. Since its inception three years ago, the IBM Kubernetes Service has been a leader within IBM, pushing the boundaries of tooling and culture. It has evolved from a cumbersome monolith into a true cloud-native service that manages over 20,000 Kubernetes clusters. The team embraced feature flags, initially for managing deployments and now for the progressive delivery of new features and operational regions. This talk covers the team's journey over the past three years: what they did wrong, what they did right, and where they want to go in the future.

Trajectory related videos

Keynote: Product Vision

Trajectory

Flagging the Jamstack - Using Feature Flags with Netlify - W...

Trajectory

Flight School - Workshop

Trajectory

Keynote: State of Feature Management

Trajectory

Keynote: Fireside chat with Gene Kim

Trajectory

State of LaunchDarkly Experimentation

Trajectory

Product Updates - Introducing Custom Contexts, Accelerate an...

Trajectory

LaunchDarkly Architecture - Releasing Features at Global Sca...

Trajectory

General Motors' Journey to Feature Flags with LaunchDarkly

Trajectory

Vodafone Fireside Chat with Jessica Cregg

Trajectory

Incidents: The customer empathy workshop you never wanted

Trajectory

%2BUnlocking%2Bthe%2BMonolith%2B-%2BLessons%2BLearned%2Bfrom%2BNaviance_s%2BJourney.png)

Unlocking the Monolith: Lessons Learned from Naviance's Jour...

Trajectory

Shifting Cloud Native Observability to the Left

Trajectory

How Chronosphere releases features at massive cloud native s...

Trajectory

%2BThe%2BJourney%2Bwith%2BLaunchDarkly%2Bat%2BOne%2BMedical.png)

The Journey with LaunchDarkly at One Medical

Trajectory

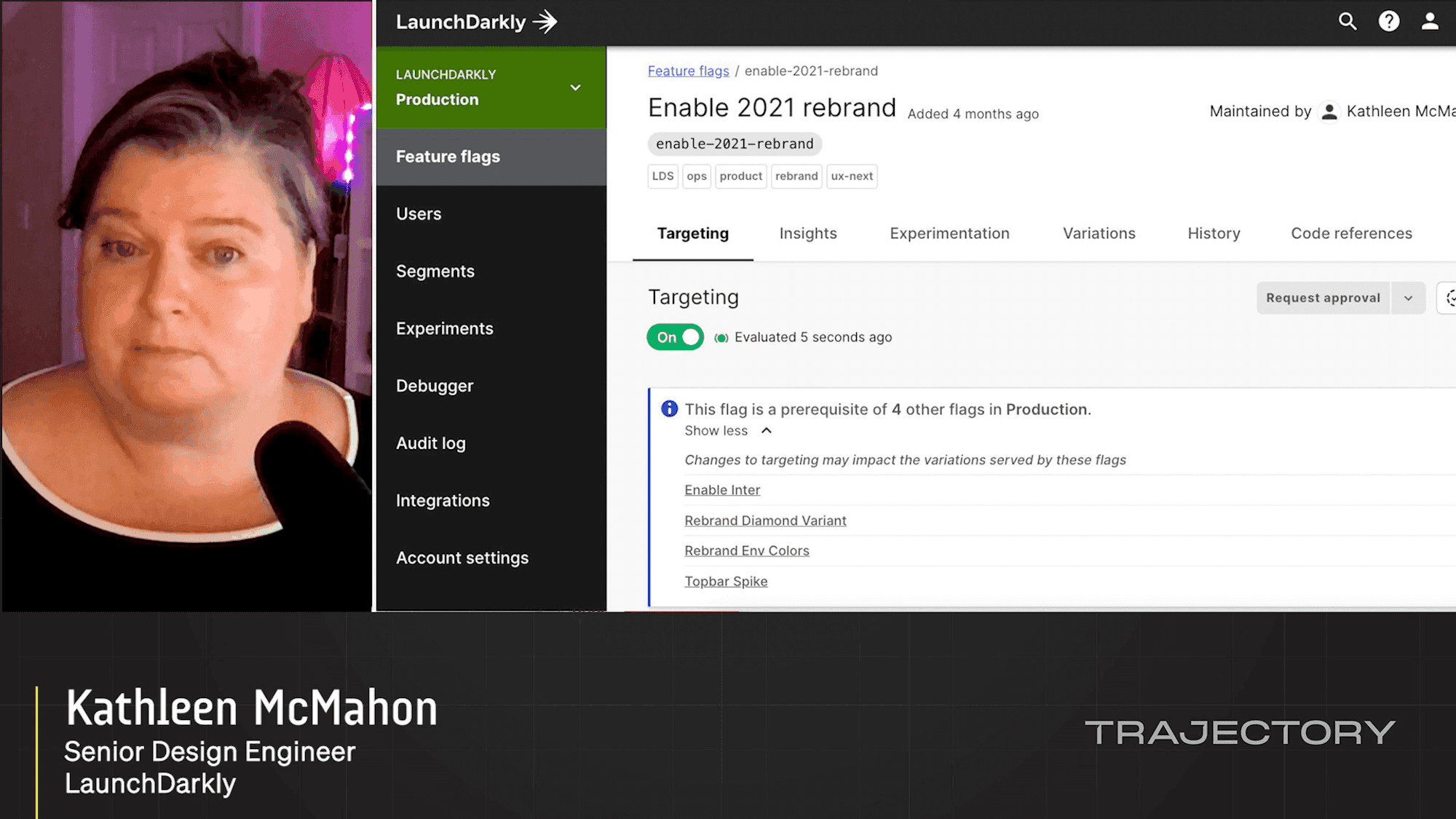

How LaunchDarkly Uses LaunchDarkly

Trajectory

Scaling LaunchDarkly to Teams of Teams

Trajectory

Feature Management For Product Managers and More

Trajectory

Use Feature Flags to Avoid Downtime During Migrations

Trajectory

Automating Progressive Delivery

Trajectory

Developer's Guide to Experimentation with LaunchDarkly

Trajectory

The Power of Targeting Via Attributes

Trajectory

Building Dynamic Configuration into Terraform

Trajectory

Using Observability to Accelerate Continuous Integration

Trajectory

5 Open Source Security Tools All Developers Should Know Abou...

Trajectory

Shift the feedback cycle left with Feature Flags and Cloud D...

Trajectory

Fireside chat with Edith Harbaugh, LaunchDarkly CEO & Co-Fou...

Trajectory

Partner Summit: What to Expect When Co-selling with LaunchDa...

Trajectory

Partner Summit: Build feature-led development solutions with...

Trajectory

LaunchDarkly Product Demo

Trajectory

Partner Summit: LaunchDarkly Partner Program Benefits

Trajectory

I Love Feature Flags!

Trajectory

5 Real Ways to Use Feature Flags Across Product, Marketing, ...

Trajectory

Doing Deployments at Scale

Trajectory

Clear Skies in the Cloud: Safer, Faster App Modernization wi...

Trajectory

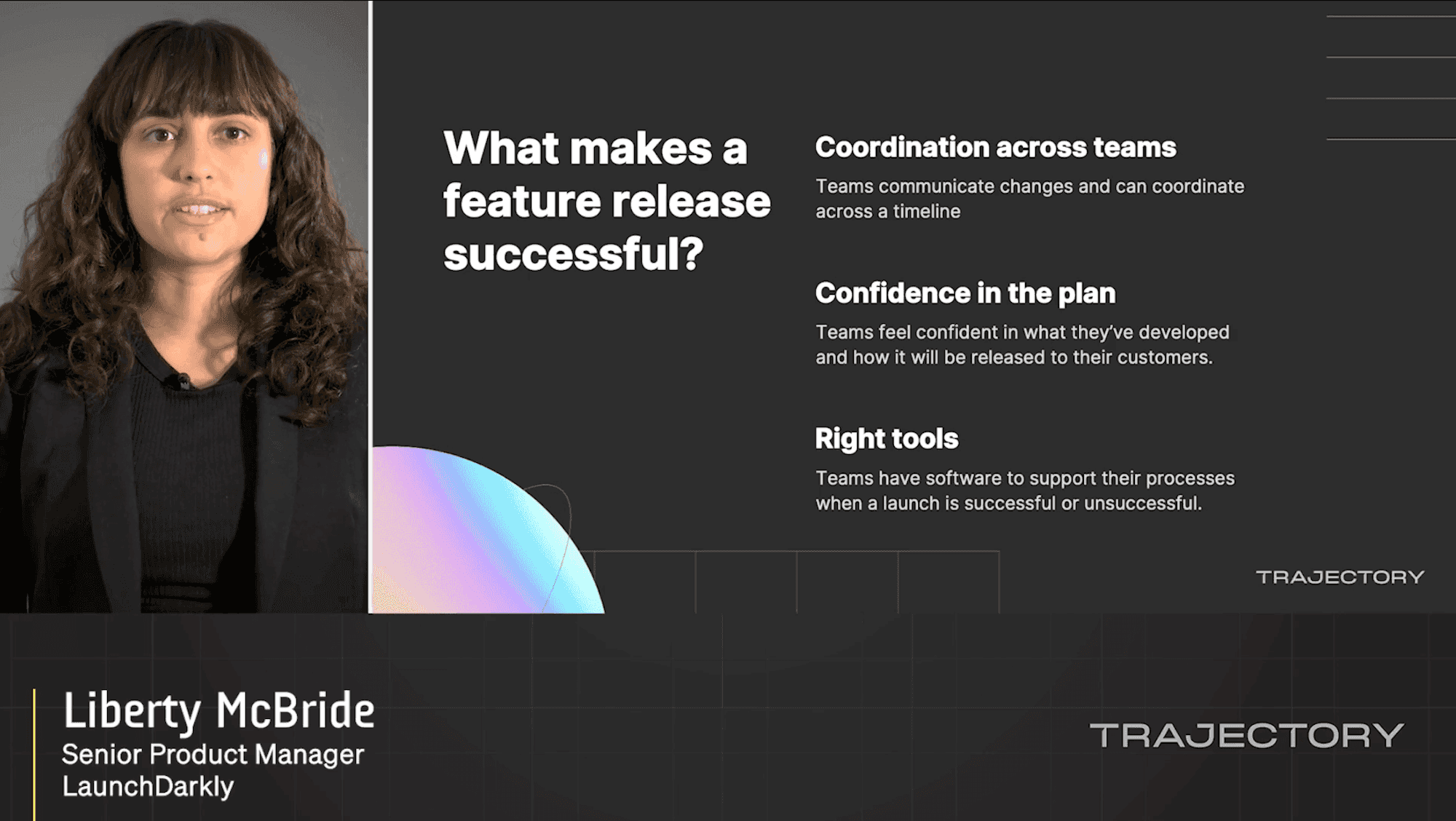

Product Deep Dives: Liberty McBride, Brandon Mensing, and Ro...

Trajectory

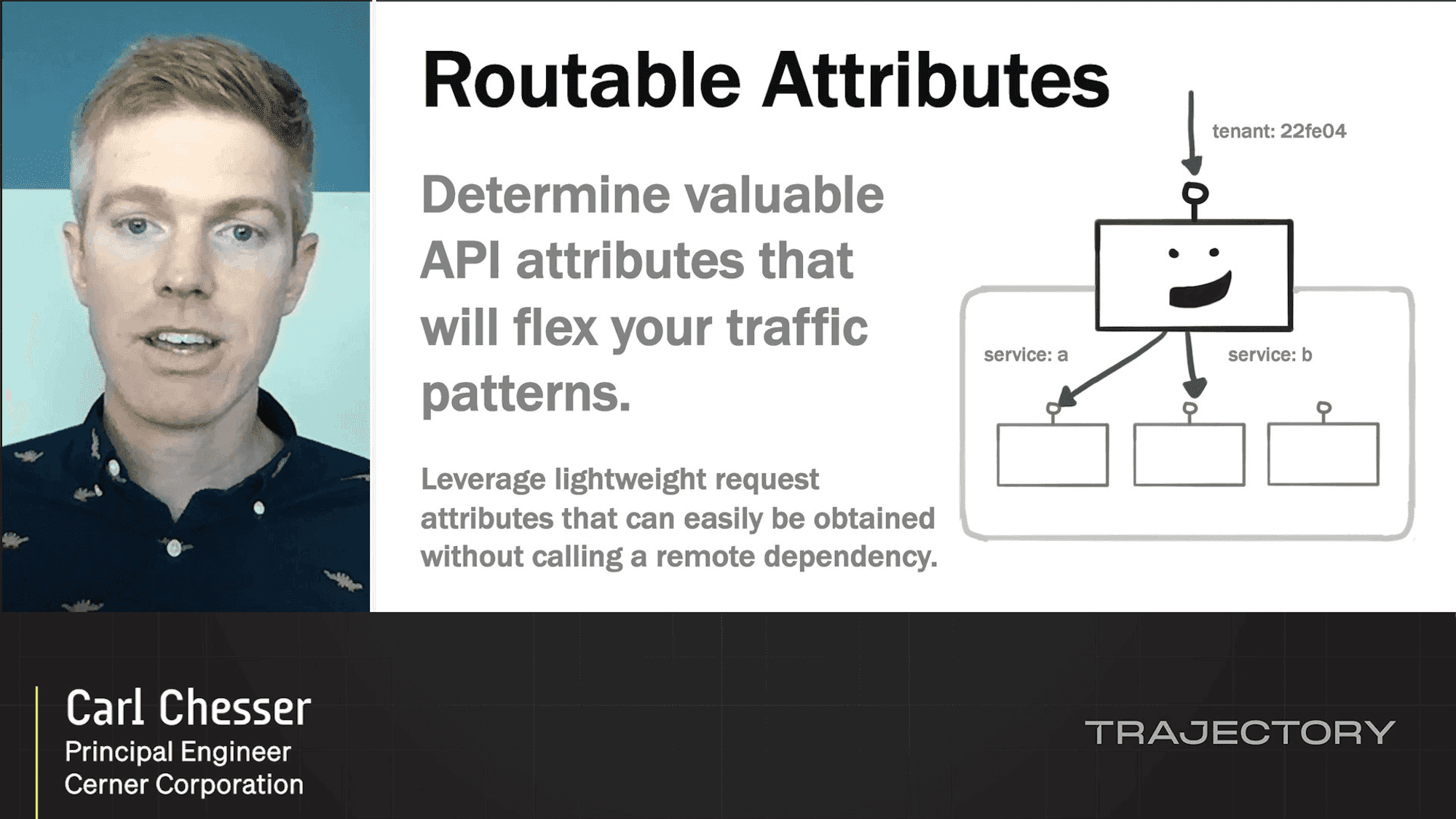

Learn in Production with Traffic Management

Trajectory

A Tale of Three Digital Transformations

Trajectory

Going From Zero to 100 Deploys a Day

Trajectory

Building LaunchDarkly at Scale with Self Service

Trajectory

Rolling Out LaunchDarkly Using LaunchDarkly

Trajectory

Rethink/Reimagine: Delivering Value in a Hybrid World

Trajectory

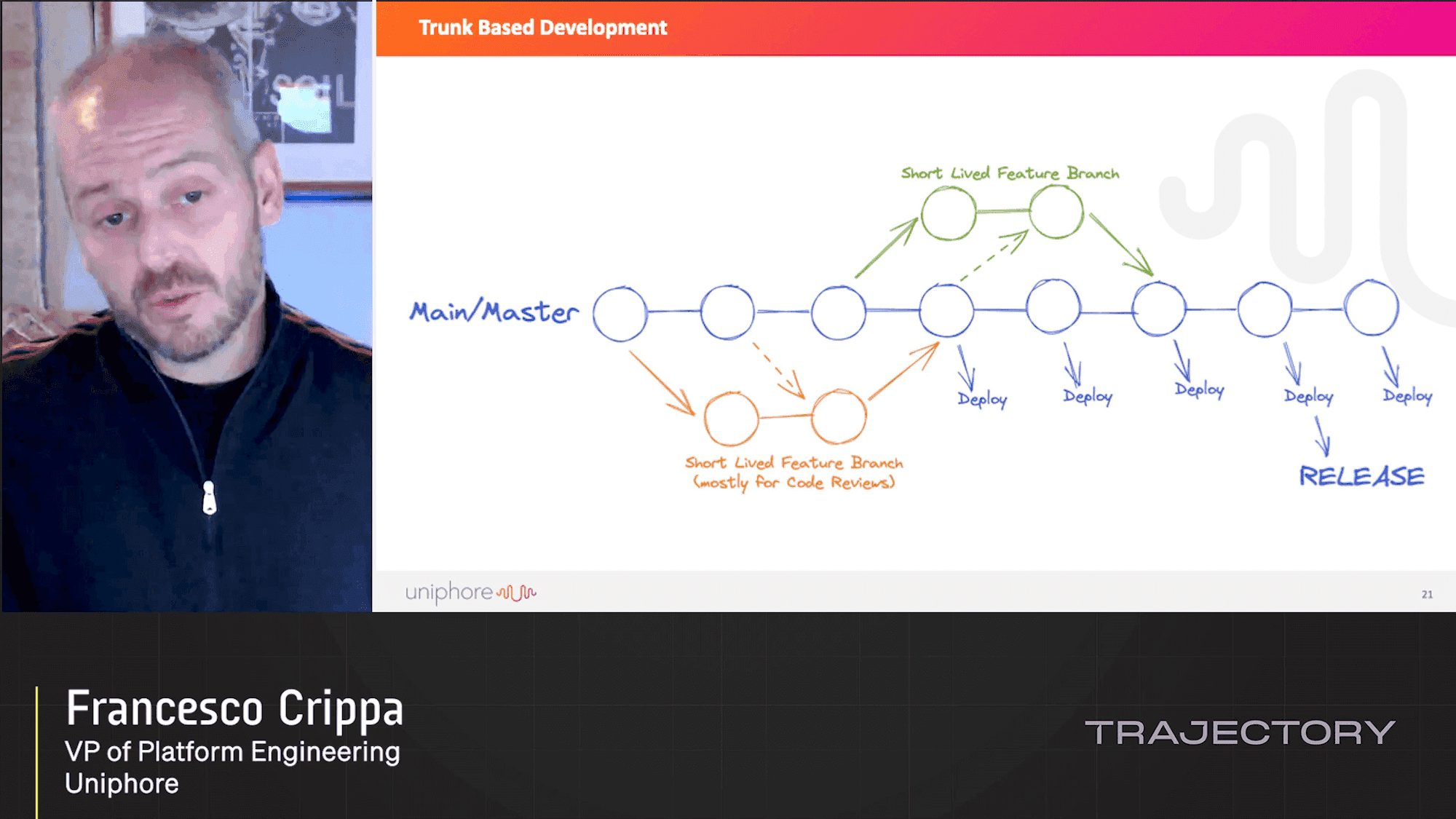

From Monolith to CI/CD

Trajectory

Game Dev Using LaunchDarkly

Trajectory

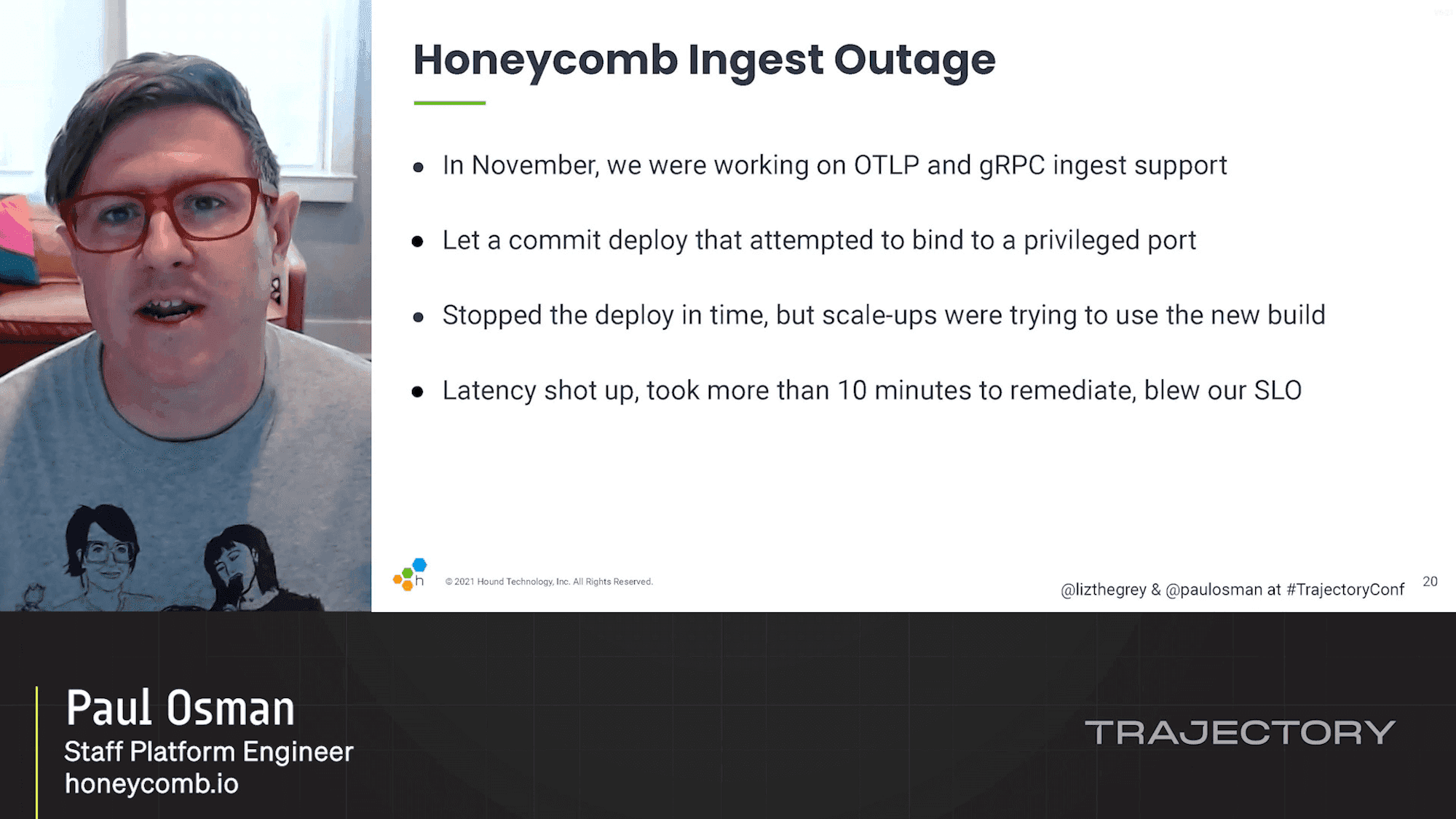

What To Do When You Blow Your Service Level Objectives

Trajectory

Fireside Chats: Edith Harbaugh & Chase Brammer

Trajectory

Project to Product: Measuring Digital Outcomes with the Flow...

Trajectory

FDD: Flag (and Test!) Driven Development

Trajectory

Fireside Chats: Edith Harbaugh & Ravi Upad

Trajectory

Kubernetes, You Rainbow-Infused Space Unicorn: What Leslie K...

Trajectory

Stealth-mode North Star: Rebranding in Secret with Feature F...

Trajectory

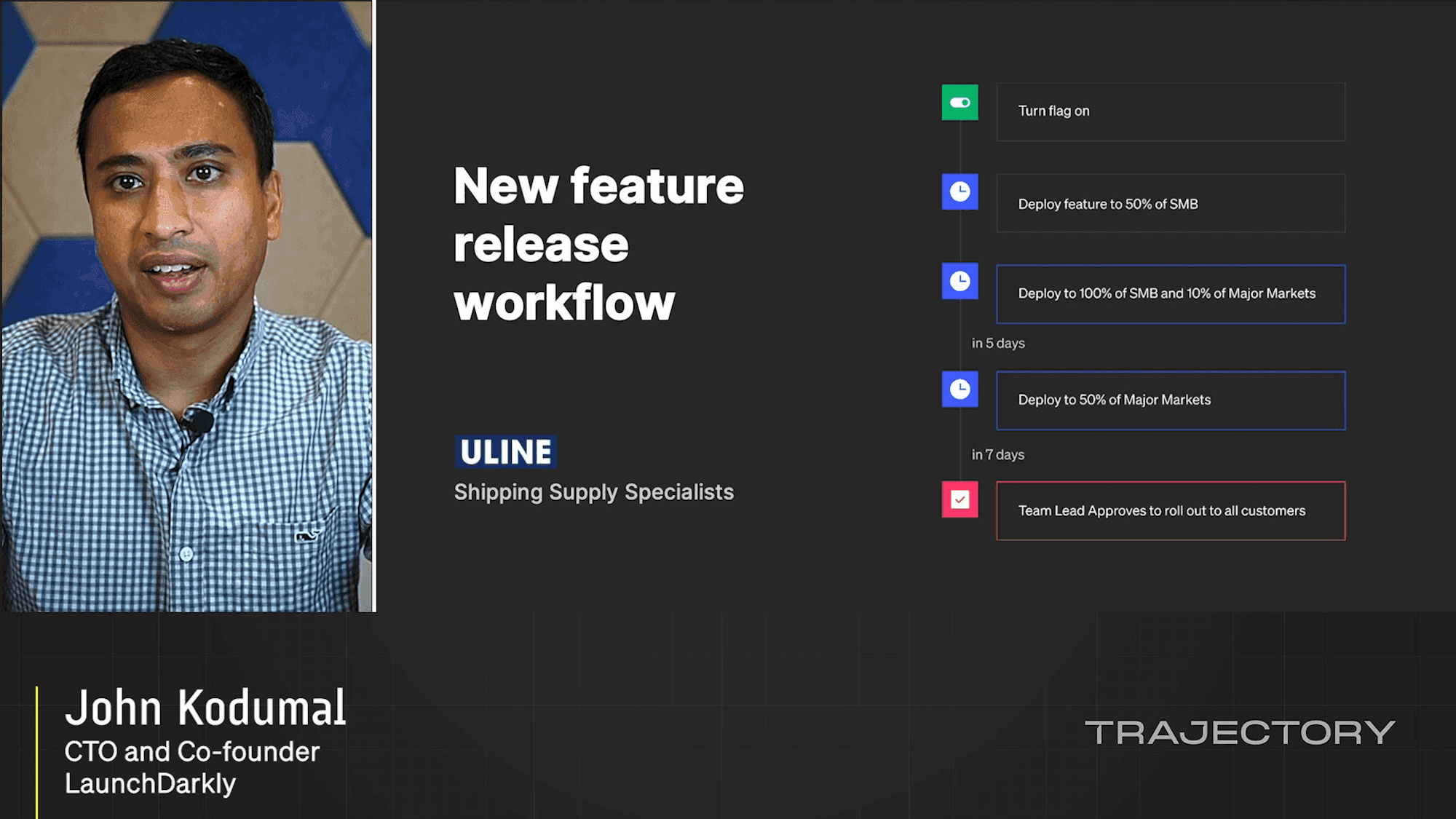

Product Keynote: John Kodumal

Trajectory

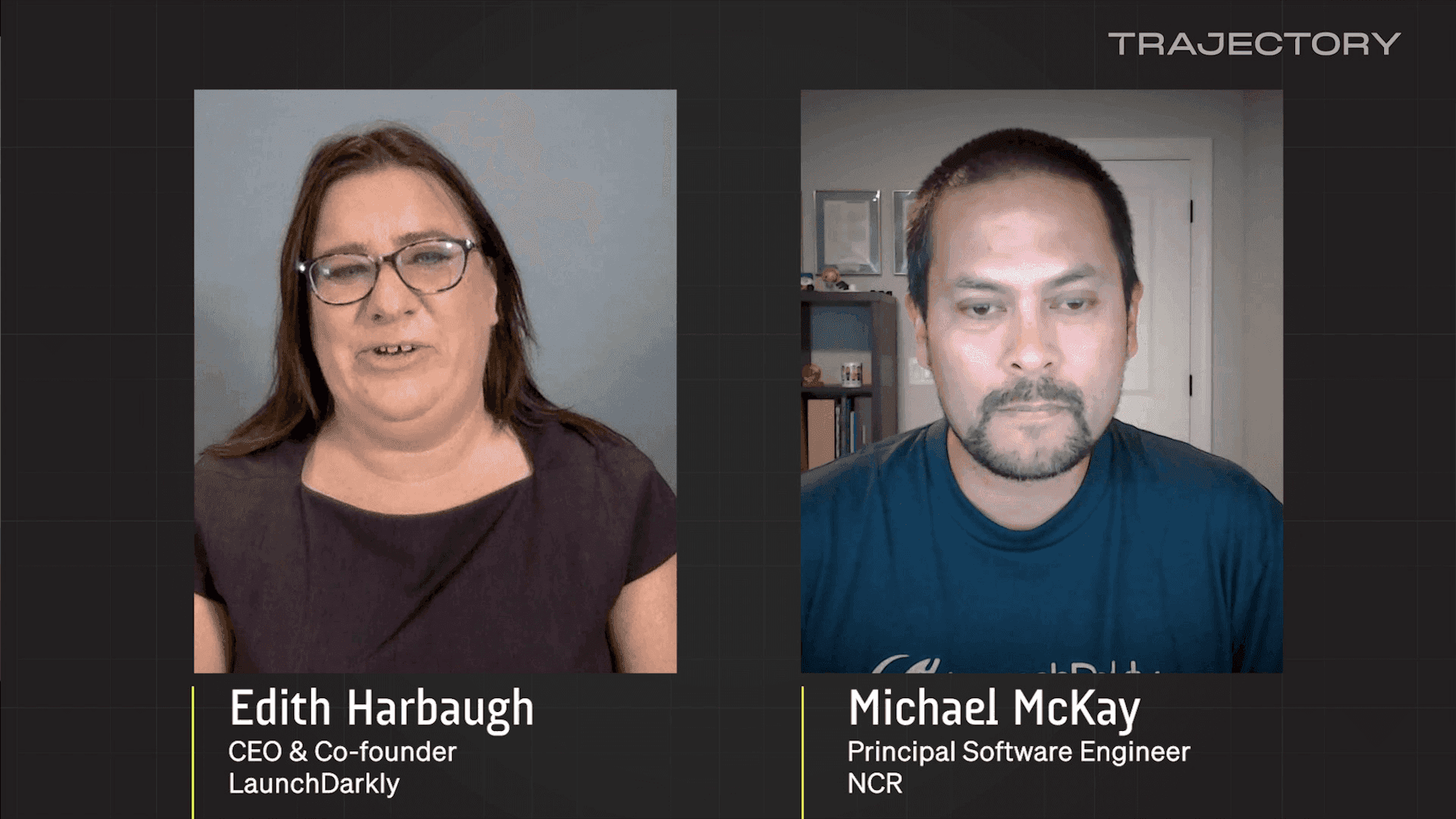

Fireside Chats: Edith Harbaugh & Michael McKay

Trajectory

Building the Circle of Faith: How Corporate Culture Builds T...

Trajectory

Flipr: Uber’s Dynamic Configuration Platform

Trajectory

Enterprise Empathy: Coping with Stress in Business Transform...

Trajectory

Ones and Zeros

Trajectory

Making Releases Boring in the Enterprise

Trajectory

Engineering Pathways for Systemic Change – What Happens When...

Trajectory

No Fear: Scaling the Xero Developer Platform

Trajectory

The Power of Feature Workflows

Trajectory

Champion versus Challenger

Trajectory

Launch Day Workflows

Trajectory

Understanding Feature Flag Performance with Observability

Trajectory

How Progressive Delivery Enables us to Chaos Engineering

Trajectory

How LaunchDarkly's systems break orbit

Trajectory

Shipping and Learning Fast via Feature Flags

Trajectory

.png)

How to Build Self Healing Systems and a Kill Switch. (Error ...

Trajectory

How Betway Tests in Production: Hypothesis Driven Developmen...

Trajectory

Service Protection at Scale with LaunchDarkly

Trajectory

Empowering Better Feature Management at Tray.io

Trajectory

Fast & Simple: Observing Code & Infra Deployments at Honeyco...

Trajectory

Always Be Testing: Test Strategy for Continuous Deployment

Trajectory

The Language of Liftoff

Trajectory

Several People are Launching: Feature Flags at Slack

Trajectory

Experiment Culture: From Tradition to Data-Driven

Trajectory

Tools for the Next Iteration of Modern Development

Trajectory

Not Just Buttons: Feature Flagging Your Machine Learning Arc...

Trajectory

Breaking Systems to Make Them Unbreakable

Trajectory

How Firefox Upholds its Values and Keeps Up With Change

Trajectory

Production as an Experiment Lab

Trajectory

Backyard Chat: Progressive Delivery

Trajectory

Opening Keynote: Product Vision

Trajectory

Conversation: Edith Harbaugh & LaunchDarkly Customer Panel

Trajectory

Opening Keynote: Empowering All Teams to Deliver & Control S...

Trajectory

Keynote: Measuring Your Trajectory

Trajectory

Scaling Salads: How We Launched One of Our Most Impactful Te...

Trajectory