Fast & Simple: Observing Code & Infra Deployments at Honeycomb

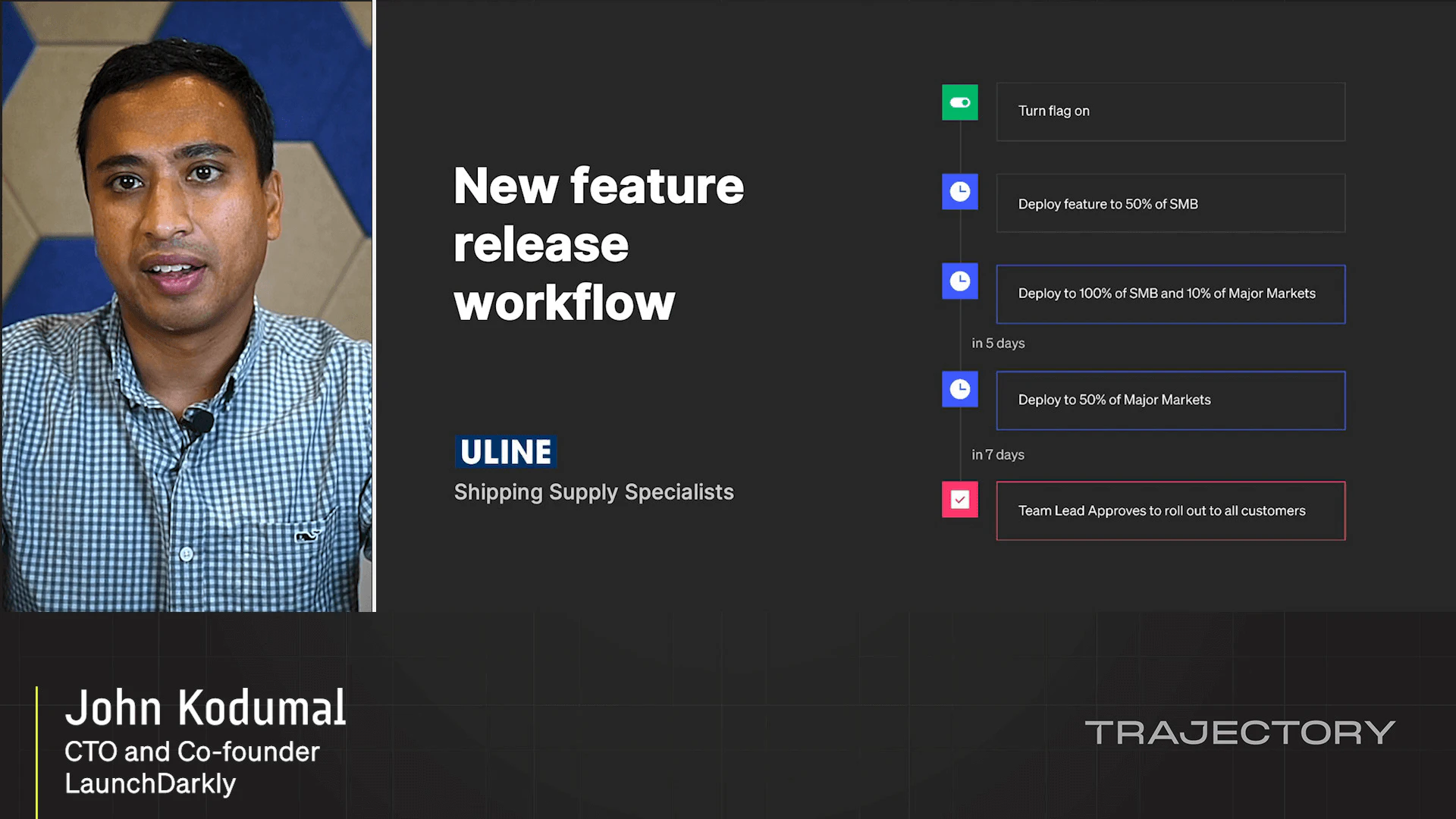

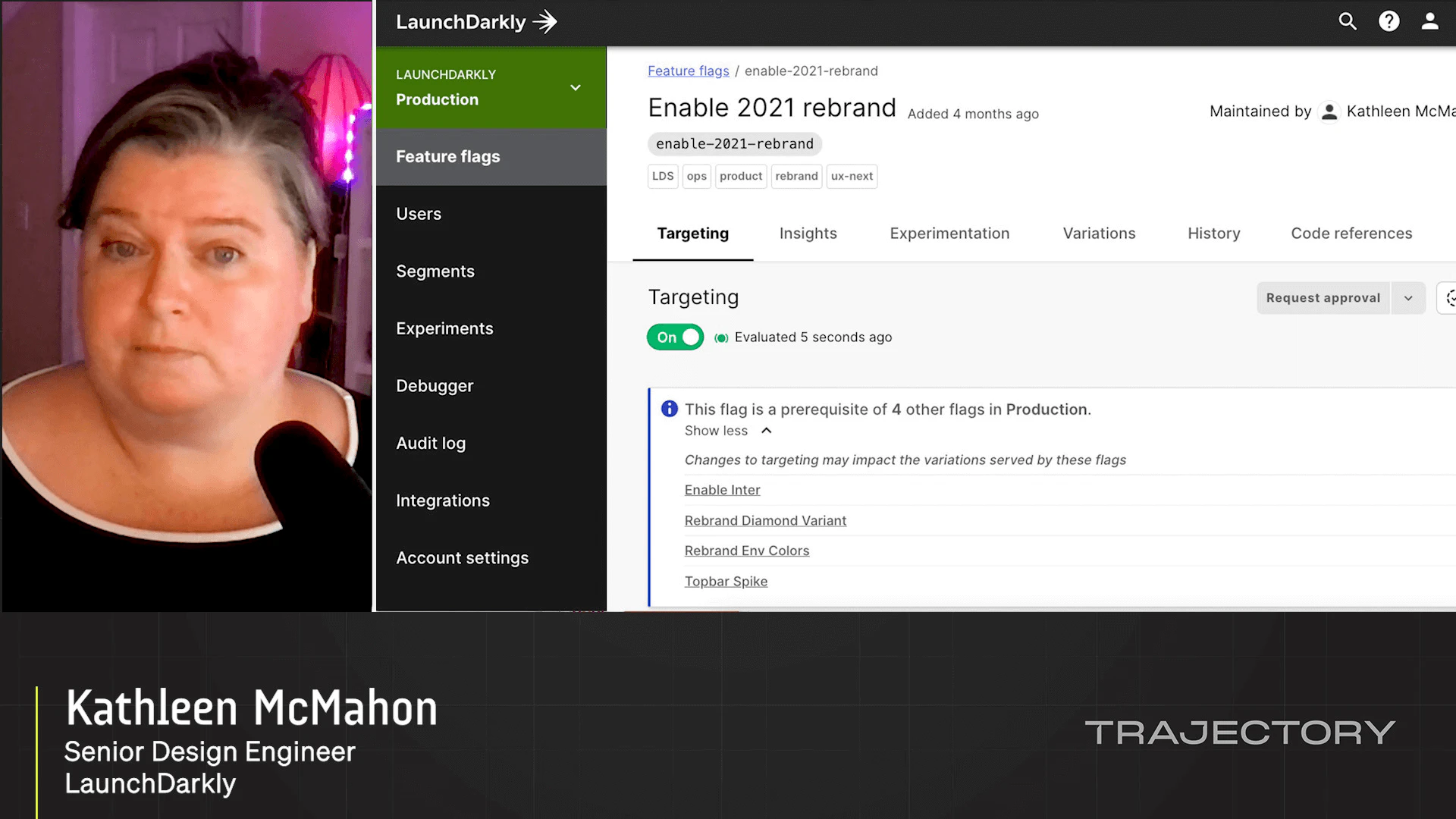

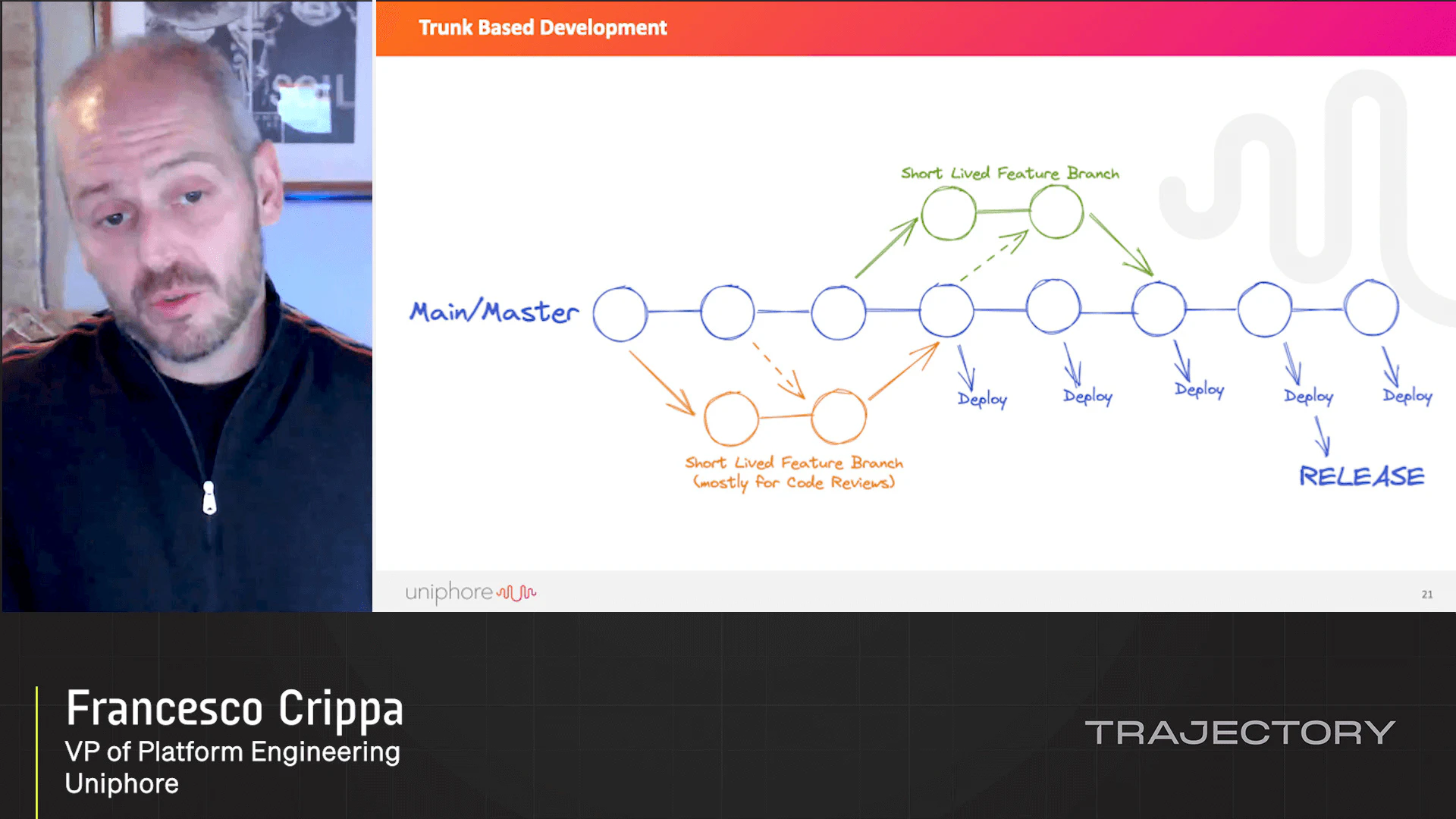

In this talk, you'll learn about the architecture and operational practices that an engineering team of fewer than a dozen people uses to run a real-time event analytics platform. The platform persists billions of events per day and supports search over that telemetry in near real time. Liz will cover Honeycomb's use of Apache Kafka, Terraform, LaunchDarkly, Chef, EC2 auto-scaling, Lambda, and S3, and how they use boring VMs rather than Kubernetes for their main serving pipeline. You'll learn how streaming data solutions can work at less-than-Facebook scale and with only a few engineers. Yes, even your frontend-y full-stack devs can be on call; they just need the right tooling and help. Design systems to be robust, with degrees of redundancy. And check them!
