How to Build Self-Healing Systems and a Kill Switch (Error Monitoring at Its Best)

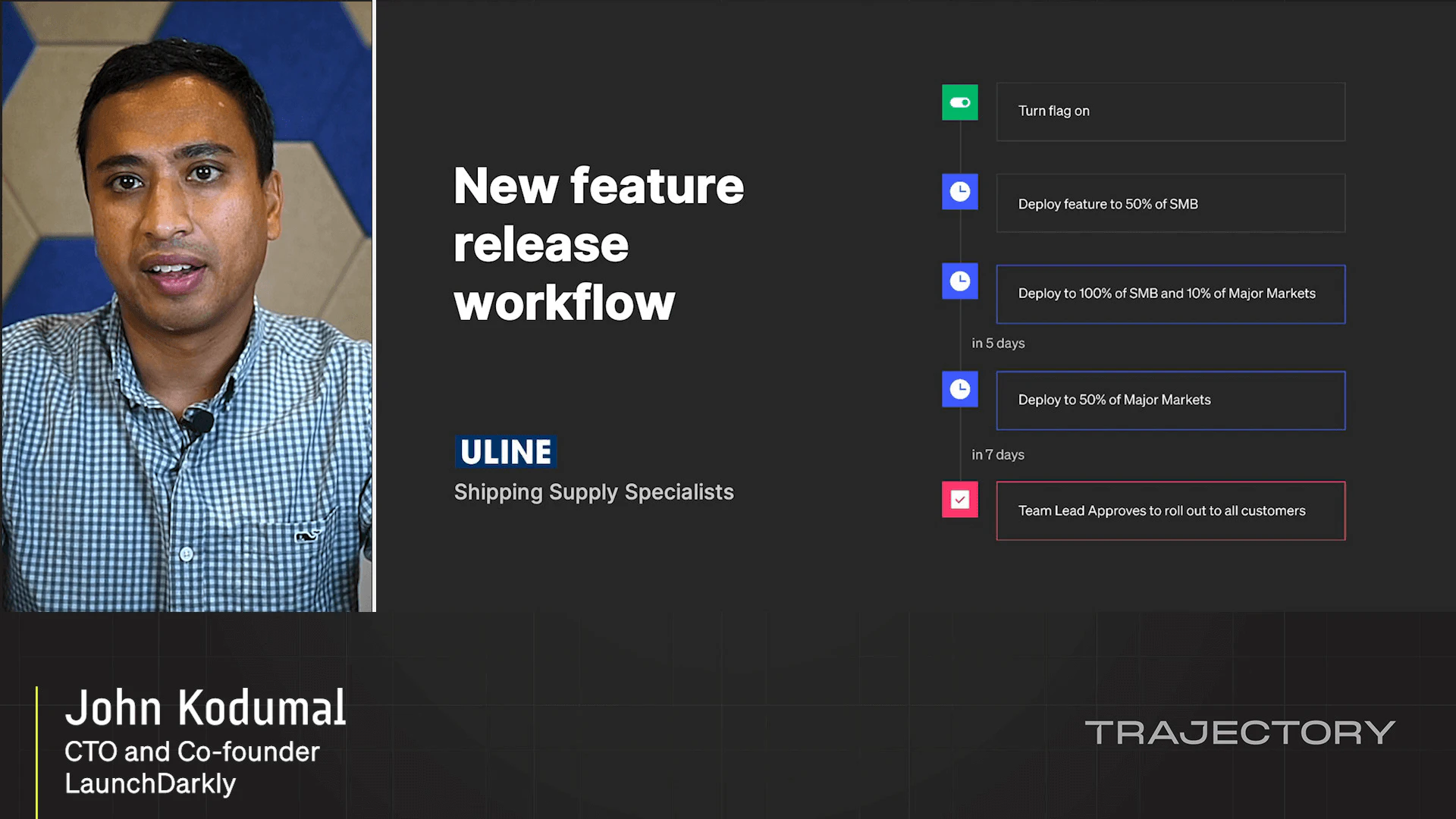

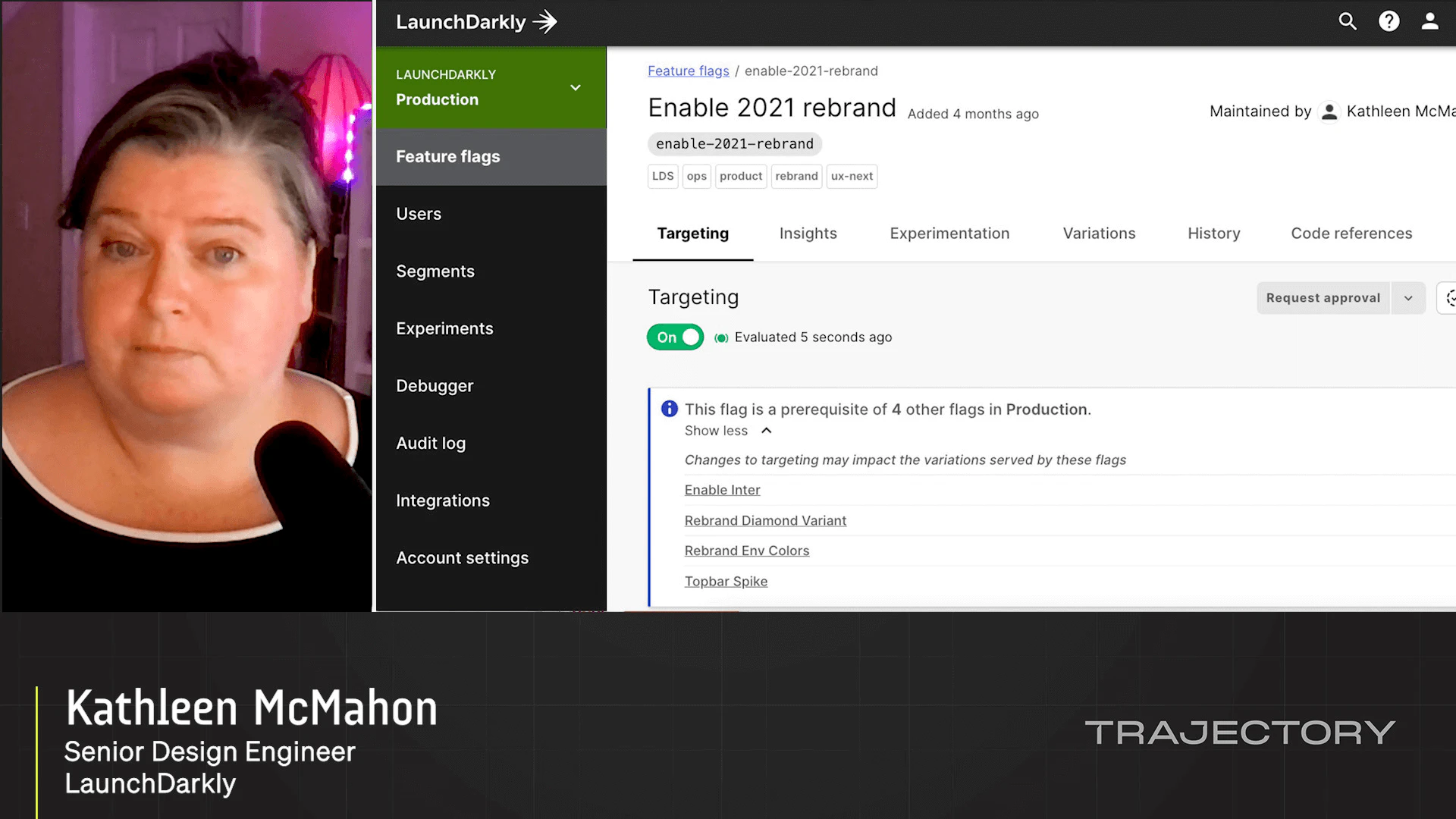

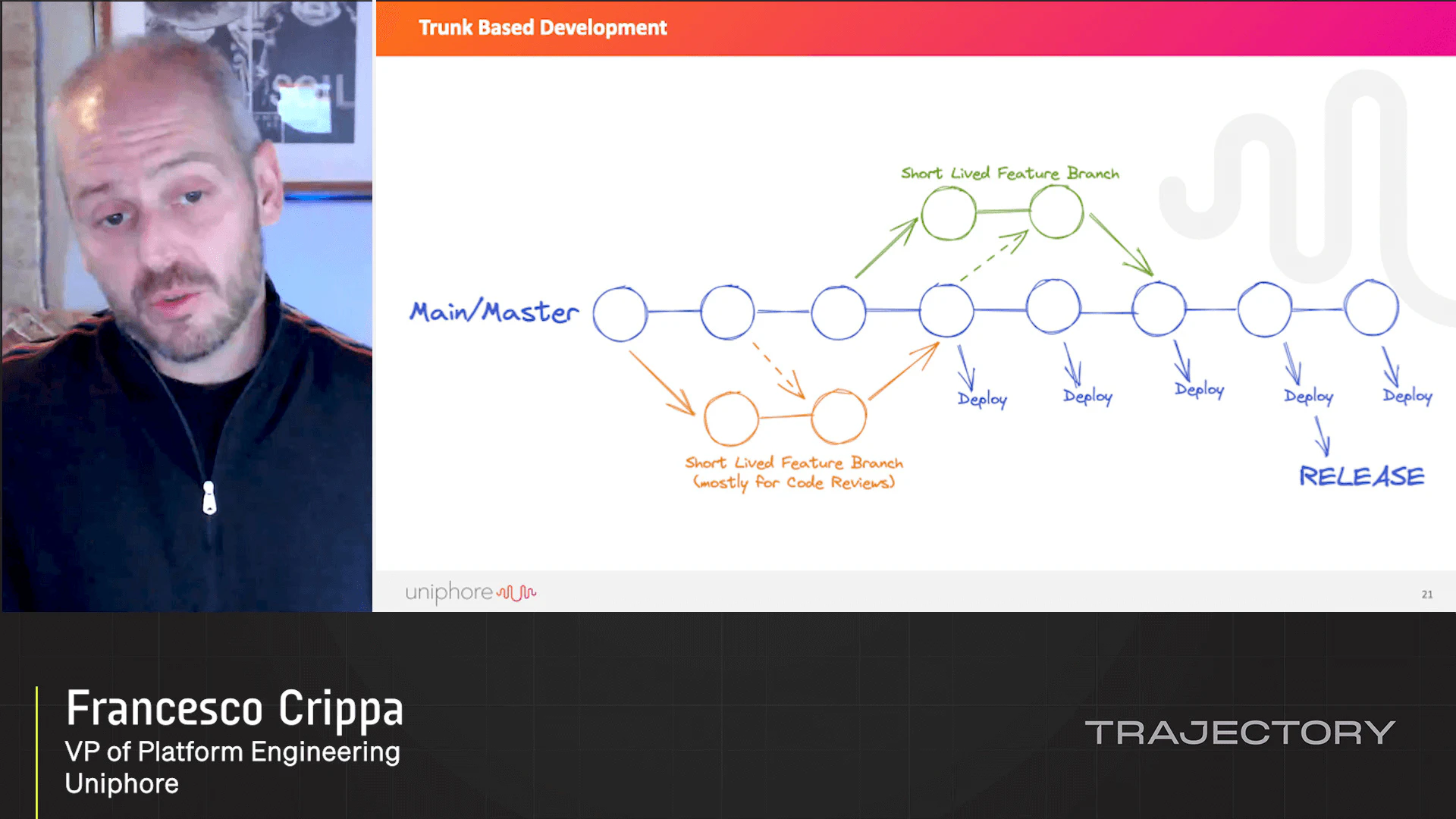

On-call schedules, constant reorganizations, fast turnover, impossible deadlines, new platforms and frameworks every day… Building modern platforms is becoming unsustainable for the new generation of engineers. In these challenging conditions, autonomous systems offer new hope: because they do not require human intervention, they are easier to manage and more sustainable to run. Cloud computing, standardized APIs, common practices, and shared tools are opening the door to self-healing systems: systems that scale automatically as load increases, recover from unexpected errors before users notice them, and run rollbacks and migrations without human intervention. While all of this is technically possible, building such systems requires specific practices, namely feature flagging and error monitoring, along with strong discipline. Above all, a new engineering culture needs to be defined to make the dream a reality.
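To make the combination of feature flagging and error monitoring concrete, here is a minimal sketch of an automatic kill switch: it watches the error rate of a flagged code path over a sliding time window and turns the flag off once that rate crosses a threshold, so traffic falls back to the old path with no human involved. Everything here is an illustrative assumption rather than any vendor's API: `InMemoryFlagStore` is a hypothetical stand-in for a real feature-flag service, `ErrorRateKillSwitch` and `handle_request` are hypothetical names, and the defaults (a 5% error rate over 60 seconds, at least 20 requests) are arbitrary.

```python
import time
from collections import deque


class InMemoryFlagStore:
    """Hypothetical stand-in for a real feature-flag service."""

    def __init__(self):
        self._flags = {}

    def set(self, name, enabled):
        self._flags[name] = enabled

    def is_enabled(self, name):
        return self._flags.get(name, False)


class ErrorRateKillSwitch:
    """Hypothetical kill switch: turns a flag off when the recent error rate is too high."""

    def __init__(self, flags, flag_name, window_seconds=60.0,
                 max_error_rate=0.05, min_requests=20, clock=time.monotonic):
        self.flags = flags
        self.flag_name = flag_name
        self.window_seconds = window_seconds
        self.max_error_rate = max_error_rate
        self.min_requests = min_requests
        self.clock = clock
        self._events = deque()  # (timestamp, is_error) pairs inside the window

    def record(self, is_error):
        now = self.clock()
        self._events.append((now, is_error))
        # Drop events that have fallen out of the sliding window.
        while self._events and now - self._events[0][0] > self.window_seconds:
            self._events.popleft()
        self._maybe_trip()

    def error_rate(self):
        if not self._events:
            return 0.0
        errors = sum(1 for _, failed in self._events if failed)
        return errors / len(self._events)

    def _maybe_trip(self):
        # Require a minimum sample size so one early failure cannot trip the switch.
        if len(self._events) < self.min_requests:
            return
        if self.error_rate() > self.max_error_rate and self.flags.is_enabled(self.flag_name):
            self.flags.set(self.flag_name, False)  # automatic rollback, no human needed


def handle_request(flags, kill_switch, new_path, old_path):
    """Route through the new code path only while its flag is on."""
    if not flags.is_enabled(kill_switch.flag_name):
        return old_path()
    try:
        result = new_path()
    except Exception:
        kill_switch.record(is_error=True)
        return old_path()  # degrade gracefully for this request
    kill_switch.record(is_error=False)
    return result


if __name__ == "__main__":
    flags = InMemoryFlagStore()
    flags.set("new-checkout", True)  # hypothetical flag name
    switch = ErrorRateKillSwitch(flags, "new-checkout", min_requests=10, max_error_rate=0.2)

    def broken_checkout():
        raise RuntimeError("boom")

    def legacy_checkout():
        return "legacy"

    for _ in range(10):
        handle_request(flags, switch, broken_checkout, legacy_checkout)

    print(flags.is_enabled("new-checkout"))  # prints False
```

Users never see the failures because each failing request falls back to the legacy path, and once enough evidence accumulates the flag is switched off for everyone. In a production setup you would typically also alert the owning team when the switch trips and require a deliberate action to turn the flag back on.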
