Service Protection at Scale with LaunchDarkly

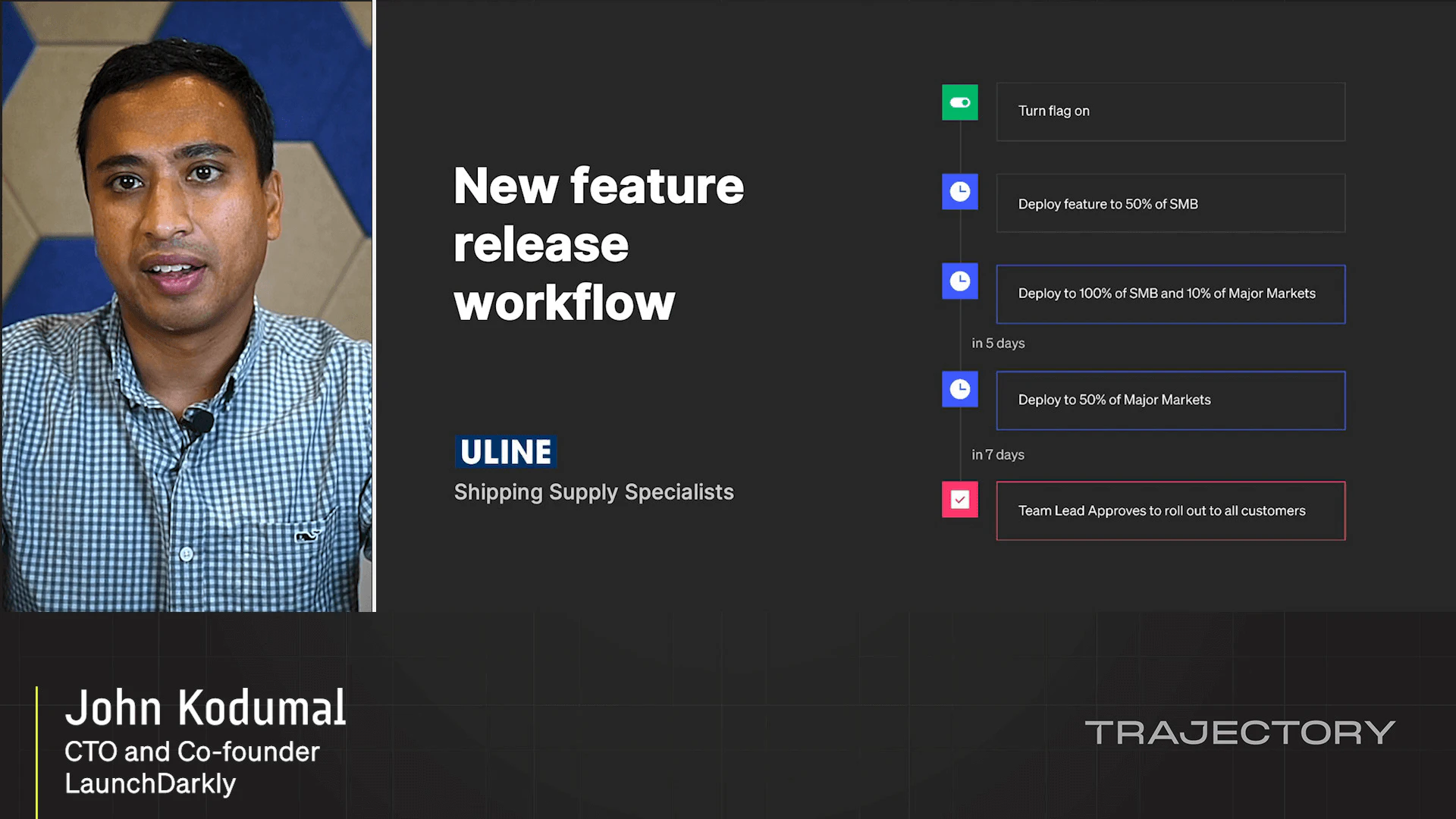

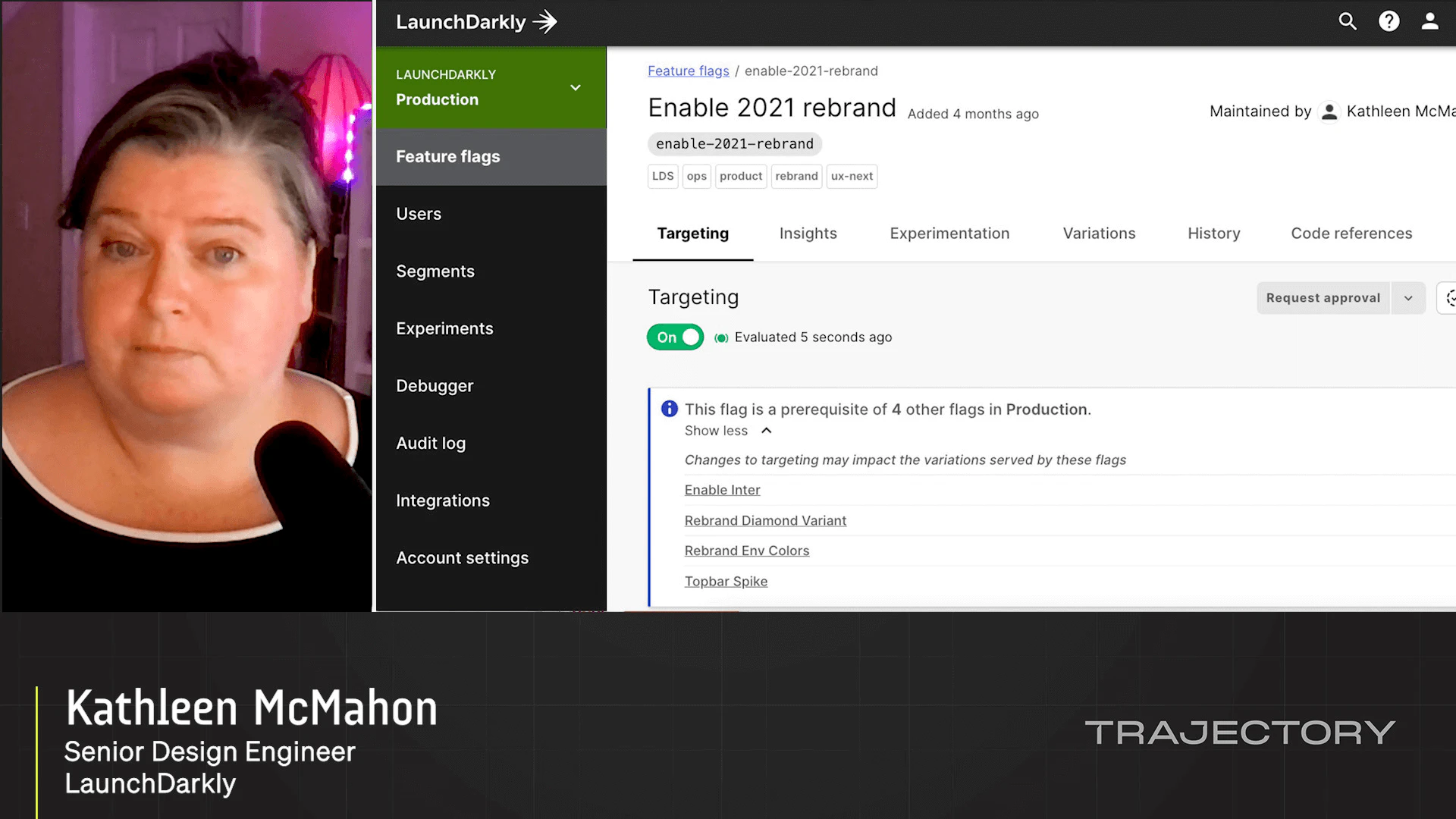

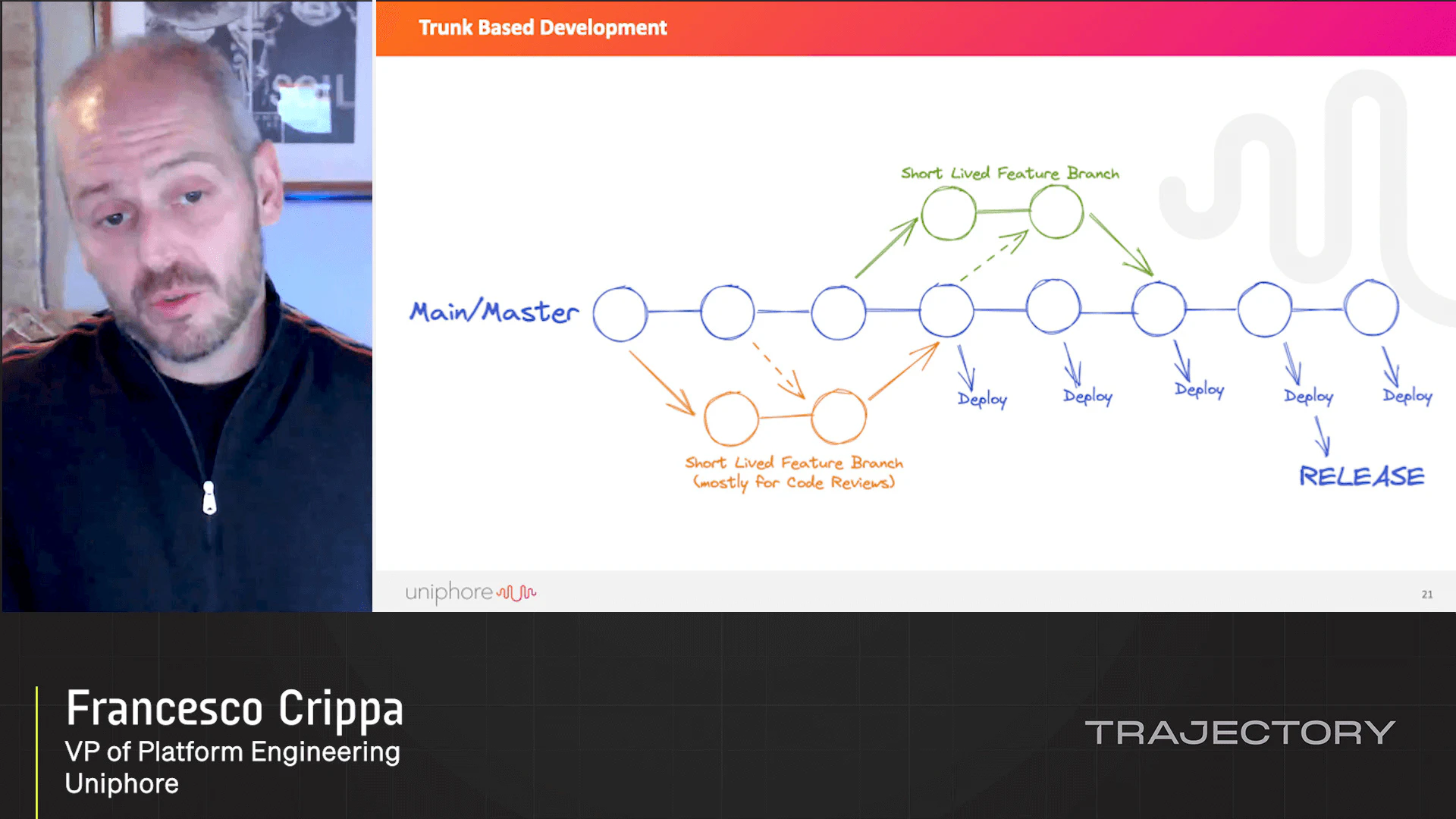

Service uptime is critical to customer trust, and protection mechanisms such as rate limiting help maintain it. LaunchDarkly can serve as an operational dashboard, with tools to prevent downtime before it happens and to resolve incidents faster when they occur.
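
To make the rate-limiting idea concrete, here is a minimal sketch of a token-bucket limiter whose refill rate is read on every request, so that an operator could change it at runtime without a deploy. It is an illustration under stated assumptions, not LaunchDarkly's implementation: `TokenBucket`, `rate_provider`, and `clock` are hypothetical names, and `rate_provider` stands in for whatever flag lookup your application uses.

```python
import time
from typing import Callable


class TokenBucket:
    """Token-bucket rate limiter whose refill rate is re-read on every call."""

    def __init__(
        self,
        rate_provider: Callable[[], float],
        capacity: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        # rate_provider is a hypothetical stand-in for a feature-flag lookup
        # that returns the allowed requests per second.
        self._rate_provider = rate_provider
        self._capacity = capacity
        self._clock = clock
        self._tokens = capacity  # start with a full bucket
        self._last = clock()

    def allow(self) -> bool:
        """Return True if the request may proceed, False if it is throttled."""
        now = self._clock()
        rate = max(0.0, self._rate_provider())  # treat negative rates as zero
        # Refill for the elapsed time, never exceeding the bucket capacity.
        self._tokens = min(self._capacity, self._tokens + (now - self._last) * rate)
        self._last = now
        if self._tokens >= 1.0:
            self._tokens -= 1.0
            return True
        return False
```

Because the rate is fetched on each call rather than fixed at construction, lowering the value returned by `rate_provider` during an incident takes effect on the next request. In a real service the provider would need a safe default for the case where the flag lookup fails.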
