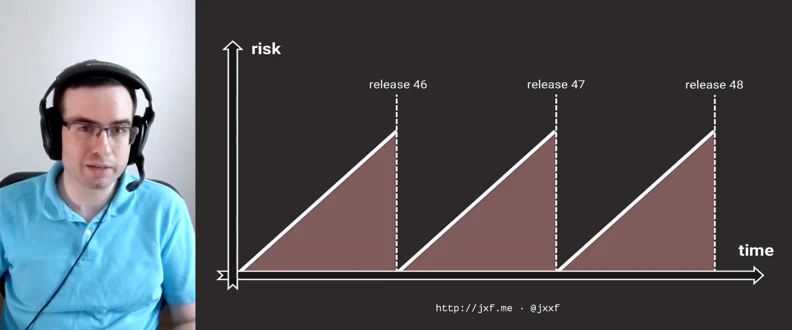

Making Releases Boring in the Enterprise

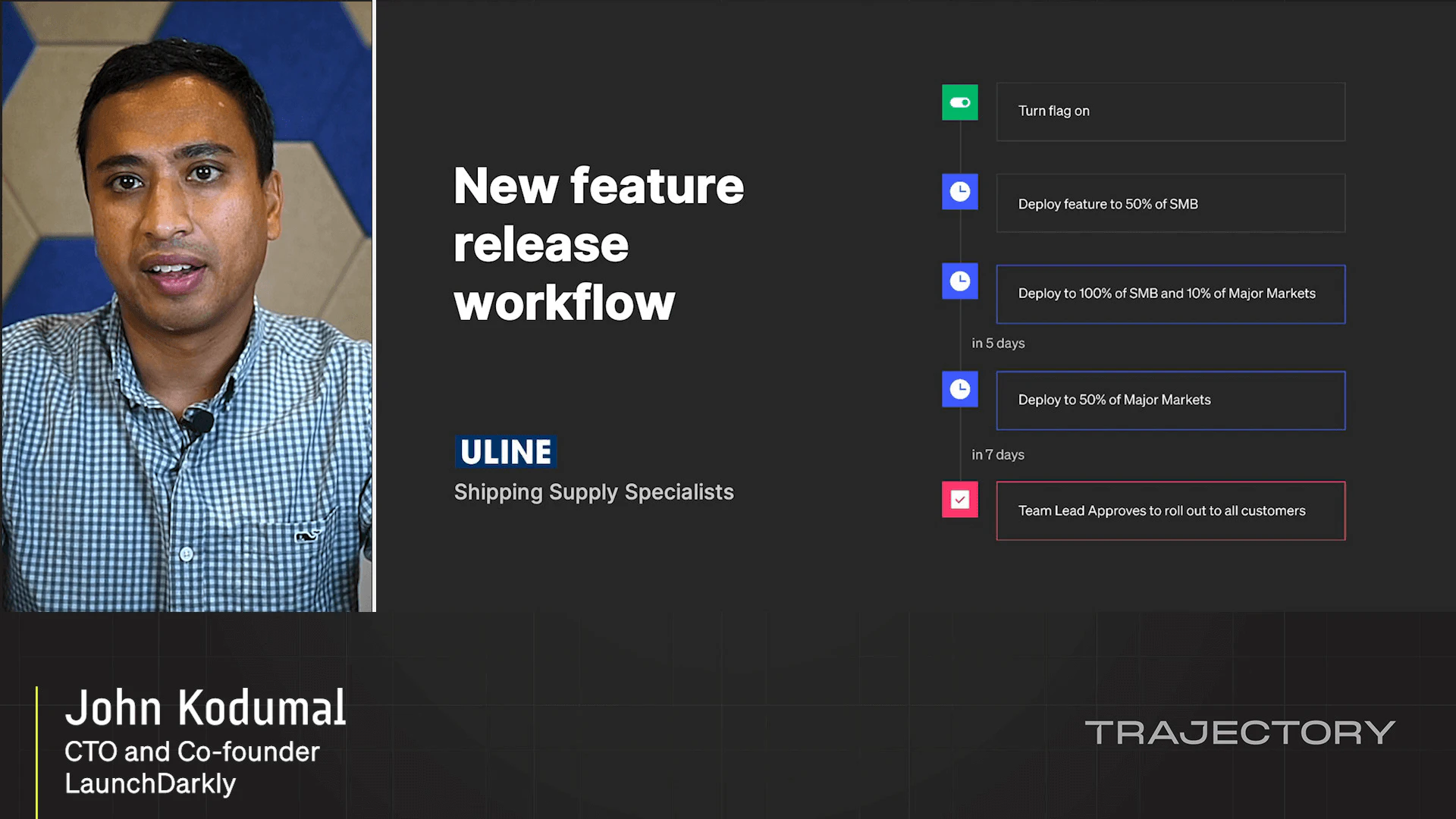

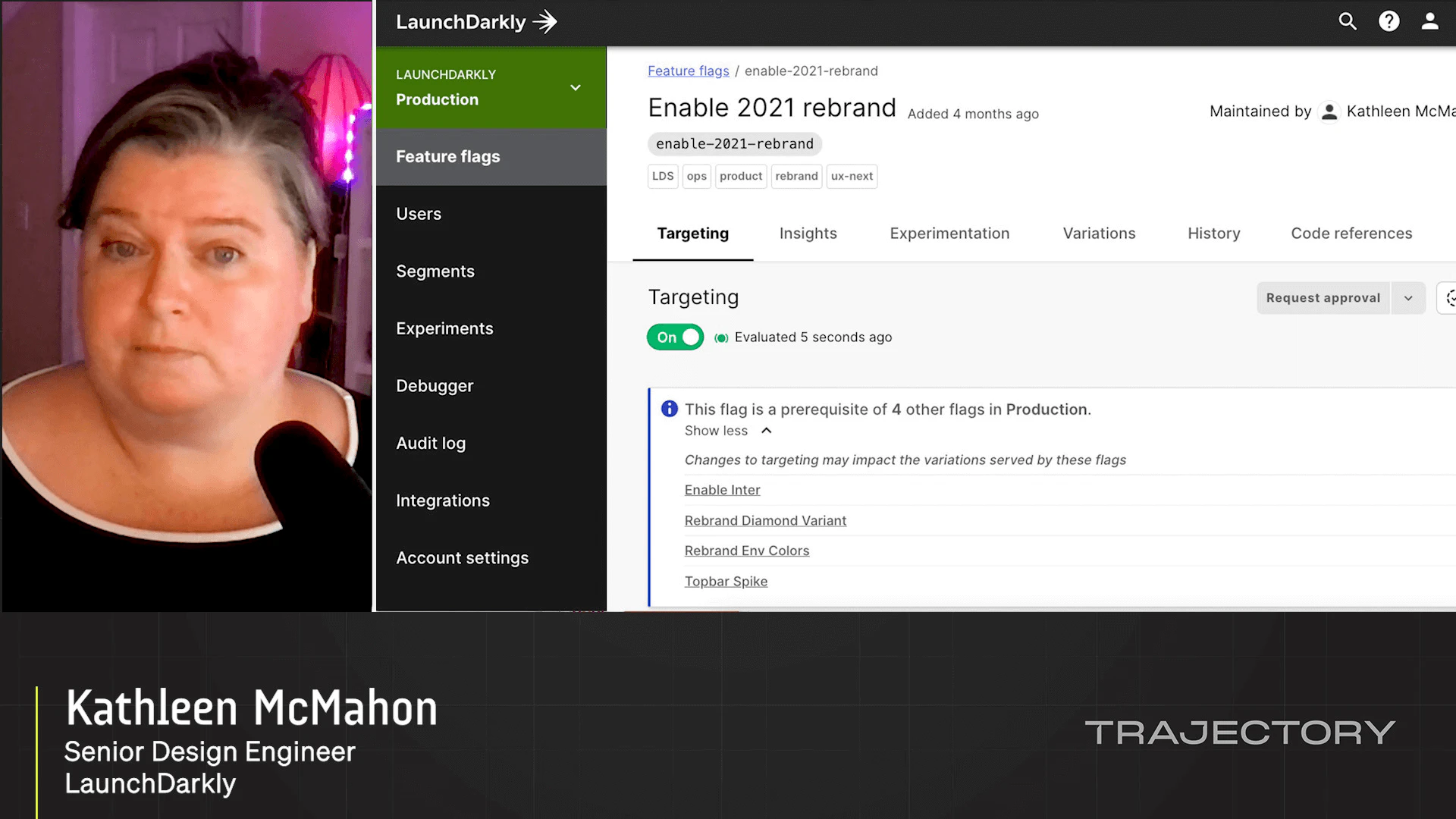

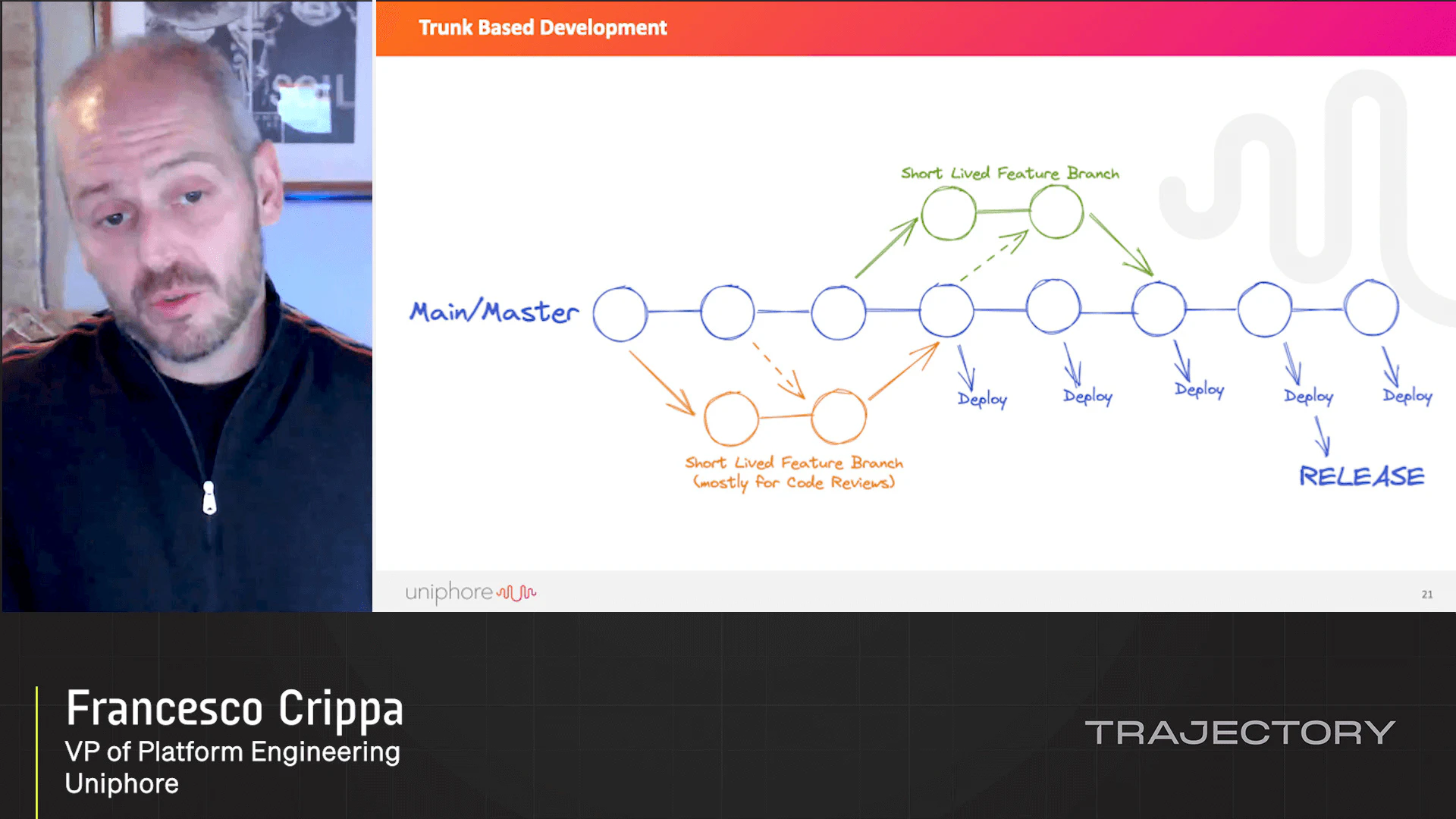

Enterprises often suffer from a curse of accumulated risk. The applications they build are large and complex, so they need a lot of risk-management process. That process makes releases take longer, longer release cycles make each release riskier, and we're back where we started. What can we do to break out of this self-reinforcing feedback loop? In this talk, John will explore how combining key ideas from Progressive Delivery and observability leads to better, more resilient releases for teams of all sizes. In particular, John will cover how combining intelligent traffic shadowing with metrics-canary phased rollouts can get you back to a happier place for releasing your software. Releases should be boring, so let's keep them that way.
