Shipping and Learning Fast via Feature Flags

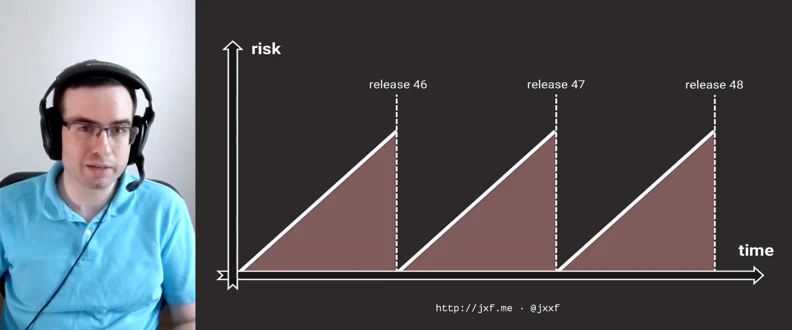

Feature flags are a great way to speed up collaboration with colleagues, develop safely in production, and learn from real-world usage. Using feature flags to enable functionality selectively for certain users is an established pattern. Now, take it a step further and use them to enable fast, iterative shipping for your development team. I'll talk about how we at GitHub have unlocked the ability to use production to safeguard production, as counterintuitive as that might sound!
