Keynote: Measuring Your Trajectory

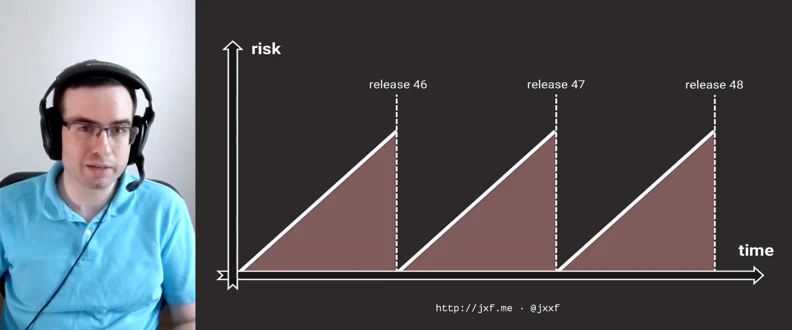

As the pace of technology increases, there is strategic pressure to innovate more quickly. However, many established enterprises find it hard to "get out of their own way" and unlock the innovation that is already present within their organizations. What are the most fundamental metrics that support innovation? We’ll look at how a short time-to-value drives many other desirable outcomes, making change safer, more incremental, and less wasteful. The sheer number of changes that results requires new tooling to automate and manage features and hypothesis tests at scale.
