Always Be Testing: Test Strategy for Continuous Deployment

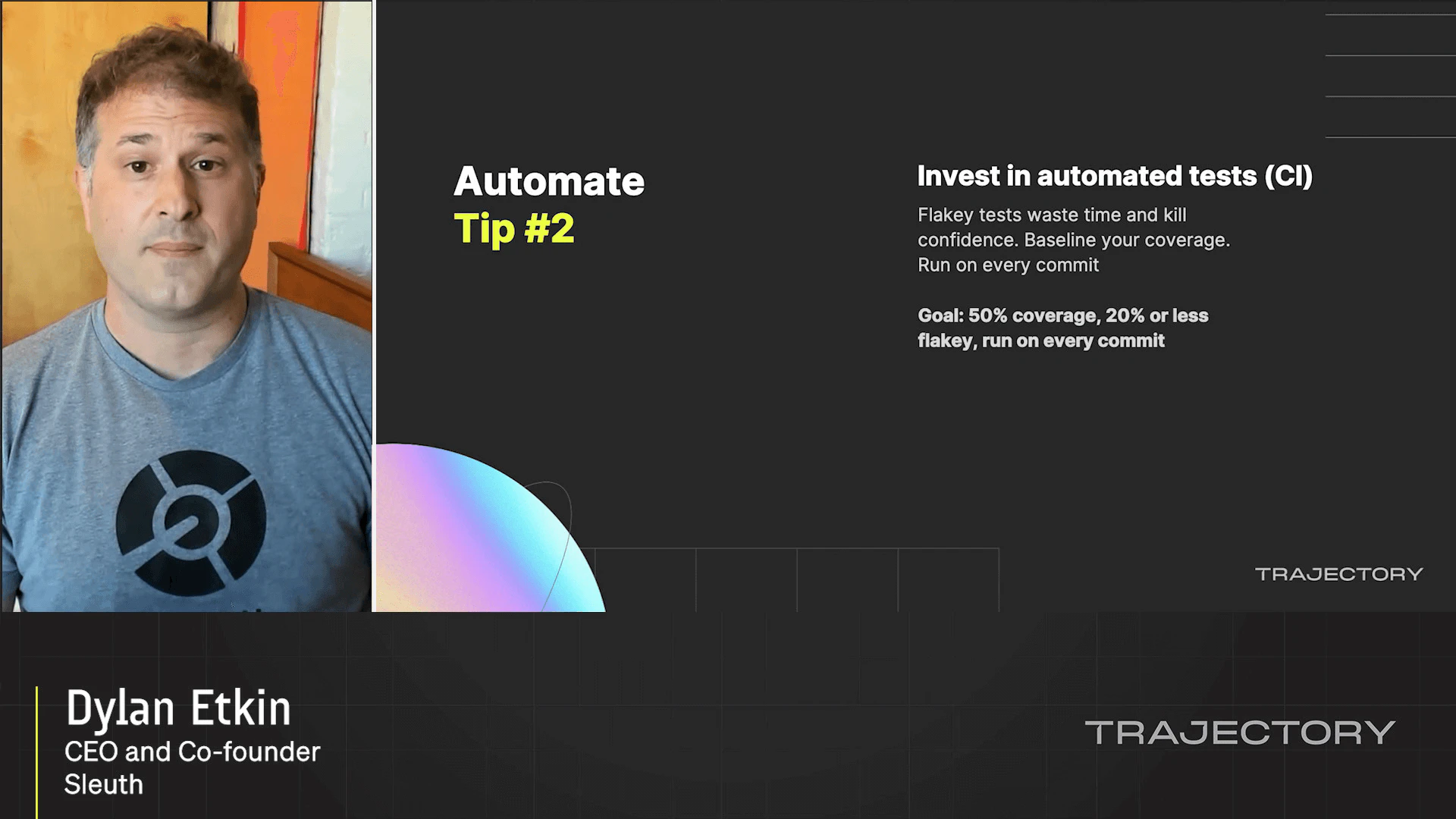

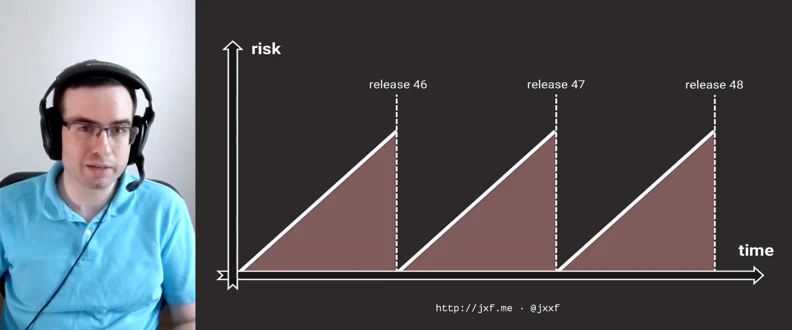

The practices that enable Continuous Deployment have been shown to improve both delivery speed and product quality. According to DevOps Research and Assessment's (DORA) State of DevOps Report, software quality, shipping frequency, and mean time to recover (MTTR) are correlated: teams that practice Continuous Deployment not only ship more often but also have higher overall software quality and fix bugs faster. Testing is a core part of any good Continuous Deployment pipeline. We want to test every branch of the code, test in production, and test our products efficiently.
