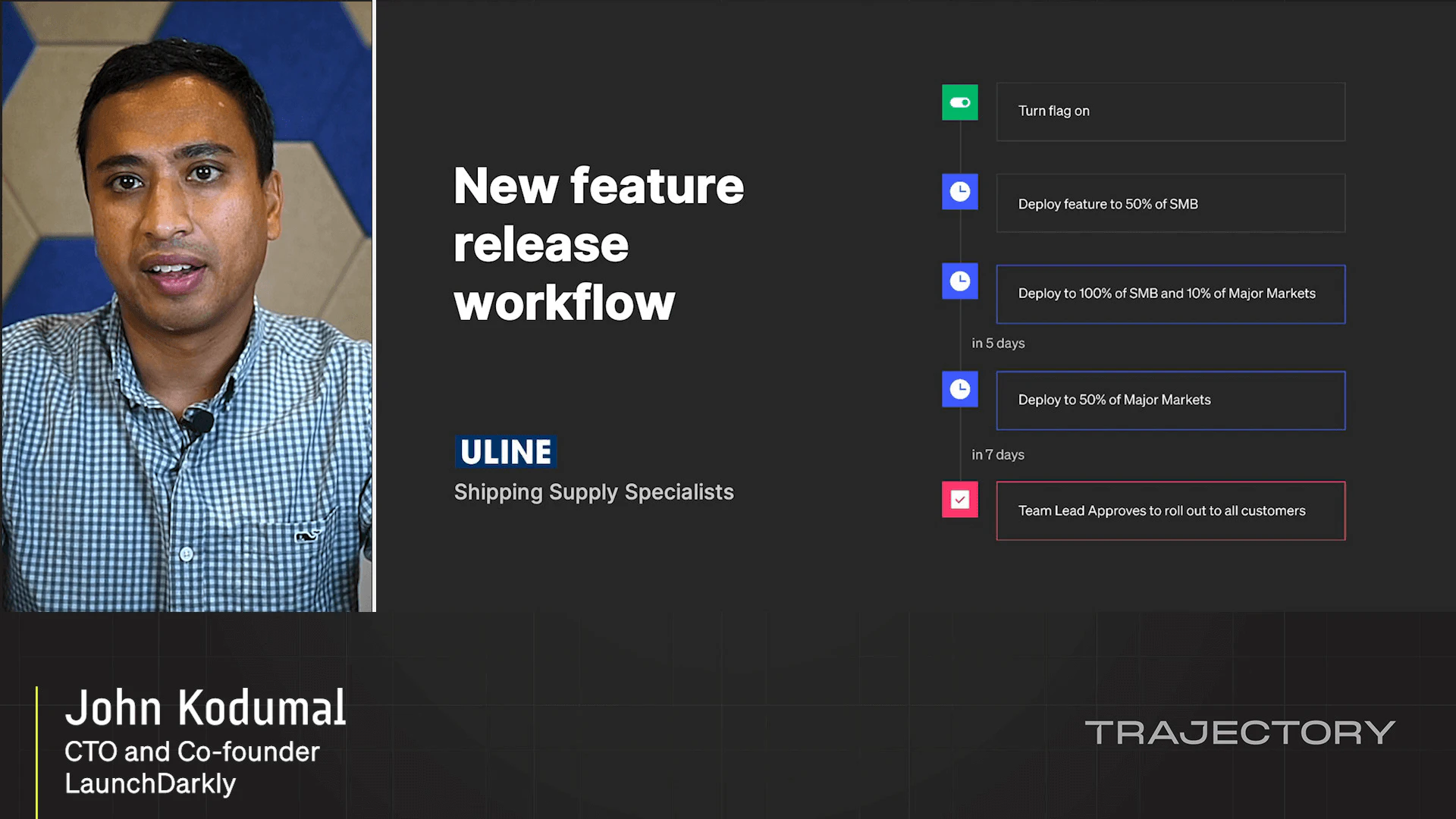

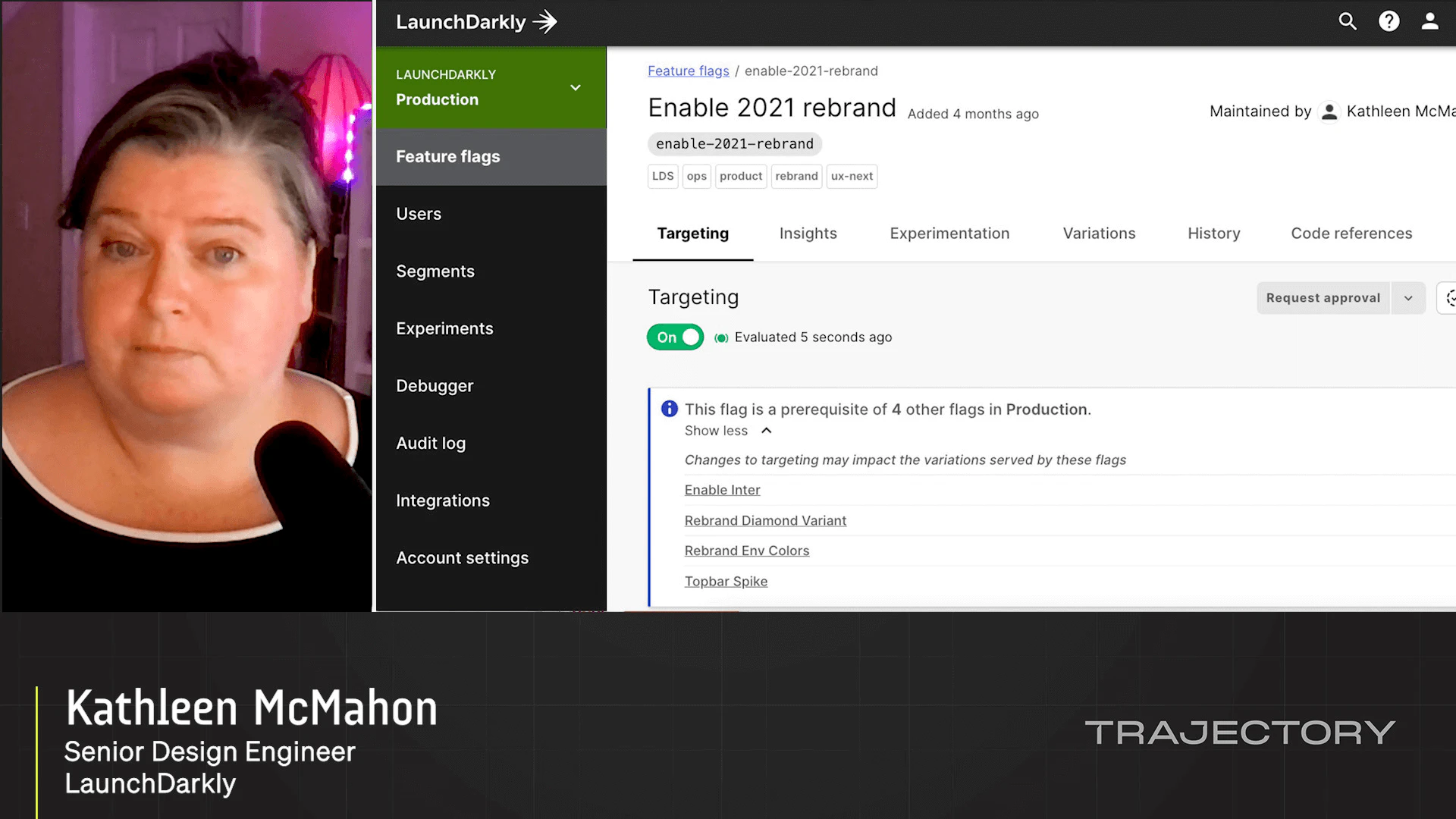

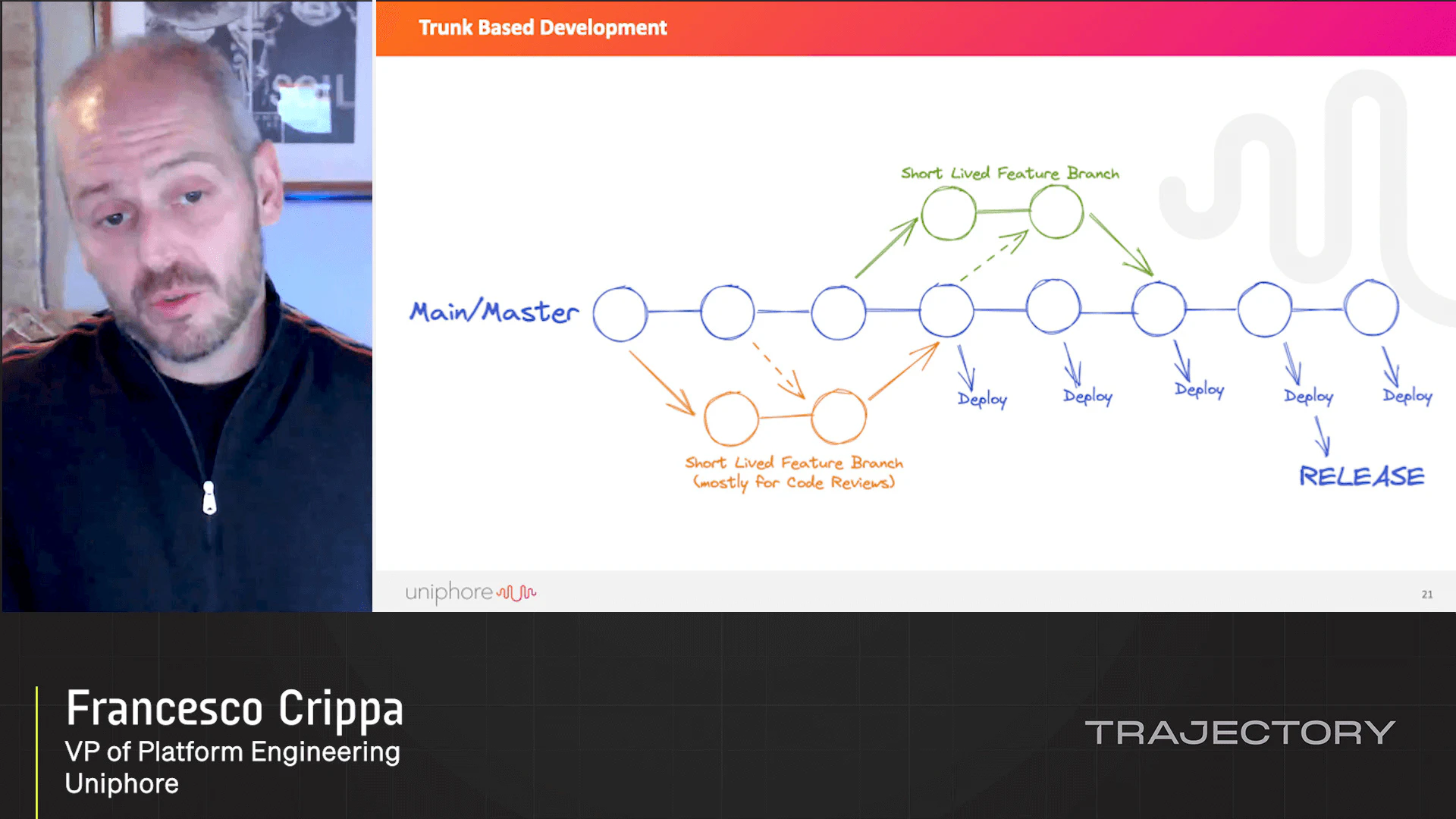

Launch Day Workflows

What’s your Launch Day Workflow? Have you automated any part of it? Do you use any LaunchDarkly integrations, or have you built any yourself? This session will dive deep into what happens before and after you flip that toggle. We’ll explore integrations we’ve built to help you keep track of a feature once it’s out the door. We’ll also discuss workflows and integrations we’re working on to improve the quality, accountability, and compliance of feature rollouts.


